Abstract

In many poster designs, an image is used as the background, and text and pictures are then laid out on top of it. For intelligent layout design, cropping a suitable background image is therefore the first problem to solve. In this paper, ground truth saliency maps of posters are obtained through eye movement experiments. The characteristics of the saliency maps of the background images are then summarized, mainly as rules about the location and size of the salient areas in the background image. The study found that the salient areas of poster background images are concentrated in the upper and middle parts of the poster and are distributed in an inverted triangle. These rules can be used to cut a background image that is better suited to typesetting.

1 Background

With the rapid development of deep learning in image processing, intelligent design plays an increasingly important role in contemporary design activities. Computers can take over complicated manual work, freeing designers to do more creative work. For example, Luban, developed by Alibaba’s Intelligent Design Lab, has changed the traditional design mode: it automatically generates visual designs that meet given requirements and standards from the styles and sizes entered by the user.

Many existing automatic layout generation techniques use photographic images as the background in their typesetting process. However, these techniques mainly focus on problems such as selecting a suitable font size and a reasonable position for the text. Few scholars have studied how to cut images for a specific role, for example as a background in typographic design. In an automatic layout process, cutting the background image is the first step, which is inconspicuous but important. Although mature image cropping techniques exist, the related research treats cropping as the last step: its main purpose is to keep the content of an image intact or to give the cropped image aesthetic appeal. From the view of a complete design activity, however, other tasks may follow the cut, and existing research does not consider this. These methods are usually applied to cropping photographs or generating thumbnails, so images cropped with them are not necessarily suitable for layout design.

When people look at an image or a real scene, they pick out the areas that interest them, and their brains ignore the uninteresting areas during further high-level visual processing, which reduces complexity. This is the human attention mechanism. In computing, this mechanism is imitated in order to detect visual saliency. The study of visual saliency underlies many other computer vision problems and has a wide range of applications. After successfully detecting saliency, a computer can further identify the content of the image or scene and then complete more intelligent tasks, such as image segmentation, text detection, face recognition, and image cropping.

What should the visual saliency of a background image be? How do people attend to background images in typography? These are the main questions of this article. The article also aims to summarize some rules. By applying these rules to the cutting of background images in typography, images that are more suitable for typography can be cut out, so that computers can serve more specific design tasks in the future. With more suitable cutting methods, automatically generated layout designs should produce better results.

Research on visual saliency and its application to cutting images is introduced in Sect. 2. Section 3 describes the experimental process in detail. Section 4 analyzes the data and derives certain rules. The conclusion is summarized in Sect. 5. Finally, the future plan is discussed in Sect. 6.

2 Related Works

2.1 Development and Application of Visual Saliency

Visual saliency is the ability of the visual system (whether human or machine) to select a subset of visual information for further processing. This mechanism serves as a filter to select interesting information related to the current behavior or task and ignore extraneous information [1].

Human visual attention models can be divided into “bottom-up” and “top-down” models. A “bottom-up” saliency model is data-driven and is affected by the contrast between image elements, such as pixels and blocks, and their neighboring areas. A “bottom-up” saliency model also considers the uniqueness of each block within the overall image [2]. “Top-down” saliency models, in contrast, are task-driven and require prior knowledge; they involve complex human inferential cognitive processes, psychological activities, and subjective emotions. Many studies in psychology-related fields investigate “top-down” saliency models.

The earliest saliency models can be traced back to the work of Itti et al. [3]. Their models combined the cognitive theory of psychology with early computational models, which triggered the first wave of research on visual saliency. Later, more scholars began to study how to build saliency models that predict gaze points in order to better understand the human visual attention mechanism. The second wave originated from the research of Liu et al. [4] and Achanta et al. [5], who treated saliency detection as a binary segmentation problem in which foreground pixels are 1 and background pixels are 0, thereby connecting saliency detection with computer vision research [6].

Visual saliency has already been applied in the design field. Bylinskii et al. [7] proposed a saliency computation model for image design and data visualization and analyzed its possible applications, including interactive design tools that give feedback in real time, thumbnail generation, and the redesign of charts. Jahanian et al. [8] analyzed the saliency of the cover image and adjusted the layout of the cover text to achieve better visual balance. Ali et al. [9] calculated the features affecting visual balance by collecting tens of thousands of high-quality aesthetic photos.

2.2 Application of Visual Saliency in Cutting

Existing methods for automatically cutting images fall into two categories: attention-based and aesthetics-based. The aesthetics-based methods are more in line with photographers’ composition principles, so they are the current mainstream.

The main idea of the attention-based methods is to keep the most salient areas of the image, that is, the most relevant parts of the photo, and to crop out the unrelated parts. The importance of each pixel in the image is determined by its saliency, which is obtained mainly from a saliency distribution map or from human eye tracking. Chen et al. [10] were among the first to study image cropping and proposed an image adaptation method based on user attention to help users view images on different displays. Their model was based on three attributes: region of interest, attention value, and minimal perceptible size. They used the Itti model to calculate pixel saliency values, combined these with face and text detection, and finally generated a saliency map. Suh et al. [11] later used the sum of the saliency values within the cropping rectangle to determine the optimal cropping position and generate a thumbnail. Santella [12] obtained saliency maps from user fixation data, combined them with image segmentation results to identify important image content, and calculated the optimal crop. Marchesotti et al. [13] performed image cropping by training a classifier on a labeled image saliency database. Chen et al. [14] explored different search algorithms for optimal image cropping based on saliency frames.

Aesthetics-based image cropping methods improve the aesthetic value of an image by cutting it. The key point of this type of method is to train a classifier that, from features extracted from the image, assigns a score to the cropped image or labels it as “beautiful” or “not beautiful”. Cutting is the first step of this type of method: after candidate regions are generated, a classifier or search algorithm is used to find the optimal cropping region.

However, when a background image is cut for layout design, aesthetic quality is not the main purpose of the cut. Because the background image still has to be typeset, an image used as a background may have its own characteristics. Existing image cutting techniques use the composition rules and aesthetic principles of traditional photography, but their results are often independent and complete images, which may not meet the requirements of a background. Therefore, this paper mainly discusses the saliency characteristics of the background image. The characteristics obtained from the study are then used as the main basis for cutting the background image.

2.3 “Figure-Ground” Relationship

The “figure-ground” relationship is a basic visual phenomenon that comes from Gestalt perception theory. Edgar Rubin began studying the relationship from the perspective of psychology. He pointed out that people tend to single out one part of what they observe as a single object, the “figure”, and treat the rest as the “ground”. Rudolf Arnheim then systematically applied the concept to the visual arts. In “Art and Visual Perception”, he argued that the “figure” often appears as a closed, smaller area. The British scholar E. H. Gombrich proposed in his book “Art and Illusion” that people pay attention to certain graphics because of their own experience. The “figure” in an image often has characteristics such as “representation”, “complete”, “small area”, and “clear outline”. The “ground” in a picture often has characteristics such as “neglected” and “fuzzy appearance”.

The “figure-ground” relationship in the traditional sense and in this study may not be exactly the same. The relationship explained above is described mainly from the perspective of human visual cognition and psychology. In this article, the concept is used to distinguish the background image of a poster from the elements arranged on top of it, so the “figure-ground” relationship is understood mainly at the operational level of typographic design activity.

3 Experiment

Many scholars have carried out experiments related to visual saliency. Borji et al. [15] asked subjects to manually select and segment the more salient objects in order to obtain a ground truth saliency map. Xu et al. [16] labeled the salient objects in pictures and then analyzed the impact of high-level information (objects, semantics) on attention. Koehler et al. [17] divided their experiment into three parts, in which the subjects viewed images freely, searched for salient regions, and searched for cued objects.

The experiment is designed to obtain ground truth saliency maps of posters. Posters were collected from various fields as test samples of layout design background images. Their content covers electronics, food, cosmetics, stationery, daily chemicals, etc., in order to exclude the top-down interference caused by the participants’ different experiences and habits. In addition, to match the life experience of the participants, the text of all test posters is in Chinese.

The posters that best matched the study were then selected. The screening requirements are: 1. Posters should have an obvious “figure-ground” relationship, that is, posters with complex effects or inconspicuous background images are discarded. 2. The background of the poster should not be a solid color, an inconspicuous gradient, or a regular shading. 3. The posters should be fully designed, that is, posters that only show the image content with a logo in the corner of the layout are discarded, because cutting the background image of such a poster is the same as cutting a pure image. After screening, 185 qualified posters remained.

Eye-movement experiments were then performed on the screened posters to obtain the areas of interest and fixation points. The test posters were divided into 4 groups, and each poster was viewed by 15 participants, for a total of 60 participants. Participants were 18–25 years old. During the experiment, the participants browsed freely without performing any action, and the test posters were played automatically on the screen. All test samples were adjusted to a resolution of 1280 * 1024, and the blank area was filled with dark gray. A Tobii T60 eye tracker was used in a bright room, with the participants 60 cm from the screen. Each test sample was viewed for 3 s.
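The display step described above can be sketched as a small geometry helper. This is only an illustration: the paper does not state whether the aspect ratio was preserved or where the poster was placed, so both the proportional scaling and the centering are assumptions, and the function name `fit_to_screen` is hypothetical.

```python
def fit_to_screen(width, height, screen_w=1280, screen_h=1024):
    """Return (new_w, new_h, offset_x, offset_y) for a poster shown on the screen.

    Assumption: the poster keeps its aspect ratio and is centered; the
    remaining border is what would be filled with dark gray.
    """
    if width <= 0 or height <= 0:
        raise ValueError("poster size must be positive")
    # Use the largest scale at which the poster still fits on the screen.
    scale = min(screen_w / width, screen_h / height)
    new_w = round(width * scale)
    new_h = round(height * scale)
    # Split the leftover space evenly between the two sides.
    offset_x = (screen_w - new_w) // 2
    offset_y = (screen_h - new_h) // 2
    return new_w, new_h, offset_x, offset_y
```

Knowing the scale and offsets is what allows fixation coordinates recorded on the screen to be mapped back to poster coordinates.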

4 Data Analysis

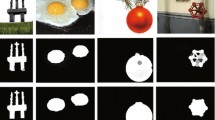

All 185 test samples can be divided into 2 categories according to the function of the background image. A type 1 background image contains the main information of the poster, such as the main product or a publicity portrait; the area containing this content is more important and generally cannot be covered. A type 2 background image mainly serves to set the atmosphere. It contains no main content and is usually a landscape scene. Type 2 posters are more in line with the traditional understanding of the “ground”. Figure 1 shows these two categories.

4.1 The Saliency Map of Background Image

In the “figure-ground” relationship, the saliency of the figure is always stronger than that of the ground. Similarly, in a layout design, the saliency of the text and icons is always stronger than that of the background image. By averaging the RGB values of each pixel over all posters, the average saliency map shown in Fig. 2 is obtained. Comparing Fig. 2(a) and (b) shows that the salient area in (a) is larger and stronger. A vertical white line can be seen in (a), which means that the posters have continuous saliency in the vertical direction. This may be because most of the posters in the experiment have a vertical composition, and many layouts have obvious text arrangements in the vertical direction, which draw more attention from the participants. In general, the saliency of the “figure” in a poster is indeed stronger than that of the “ground”. Under the classification above, the saliency of type 2 posters is much weaker than that of type 1 posters. All saliency maps in Fig. 2 show that when people look at a poster, their attention is concentrated in the center of the image. There may be two reasons for this. First, designers often place important content in the center of the image, whether it is a “figure” or important content of the background image (for type 1). Second, human vision, shaped by experience, is often more sensitive to the center of an image.
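The pixel-wise averaging used to build an average saliency map can be sketched as follows. This is a minimal illustration, assuming that all maps have already been resized to a common size and that each map is a single channel stored as a list of rows; for RGB maps the same averaging would be applied to each channel. The function name is hypothetical and this is not the authors' actual tooling.

```python
def average_saliency_map(maps):
    """Average equally sized 2D maps (lists of rows) pixel by pixel."""
    if not maps:
        raise ValueError("at least one saliency map is required")
    height, width = len(maps[0]), len(maps[0][0])
    for m in maps:
        if len(m) != height or any(len(row) != width for row in m):
            raise ValueError("all maps must share the same size")
    count = len(maps)
    # Each output pixel is the mean of that pixel across all input maps.
    return [
        [sum(m[r][c] for m in maps) / count for c in range(width)]
        for r in range(height)
    ]
```

Bright pixels in the result mark positions that are salient in many posters, which is why a consistently attended area shows up as a white region.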

To further study how the saliency of an image can be used to cut a background image reasonably, the following research focuses on two aspects of saliency on the background image: 1. The location characteristics of the salient regions of the background image. 2. The area characteristics of the salient regions of the background image.

4.2 Position of the Center of the Salient Region

The study uses Tobii Studio analysis software to analyze the eye movement data. The software automatically forms the areas of interest from the participants’ fixation points, producing salient regions of different sizes on the test poster, as shown in Fig. 3(b). To study the influence of the “figures” on the saliency map of the background image, the positions and areas of the “figures” in each poster were also drawn. The regions of the “figures”, outlined manually with an orange wireframe, are shown in Fig. 3(c). The barycentric coordinates of each polygon are calculated to represent the position of each region in the further study. Three kinds of regions are considered. As shown in Fig. 3(c), the first kind, outlined by an orange line, indicates the “figures” on the poster, including text, icons, product pictures, photos, etc. (denoted SS in the following table). The second kind, outlined by a green line, indicates the salient region formed on the “figures” of the poster (denoted S-SS in the following table). The third kind, also shown in Fig. 3(c), indicates the salient region formed on the background with orange line (denoted S-BG in the following table).

The formula for the barycentric coordinates of a polygon is given in (1). A polygon X can be divided into n simple figures (triangles are used here) \( X_1, X_2, \ldots, X_n \). Each \( X_i \) has barycentric coordinates \( C_i \) and area \( A_i \), and the coordinates of the center of gravity (Cx, Cy) of the polygon are obtained from these two quantities. A polygon with m vertices is divided into m − 2 triangles. If the three vertices of a triangle are (X1, Y1), (X2, Y2), (X3, Y3), its barycentric coordinates (X, Y) are calculated with formula (2) and its area with formula (3). Finally, the barycentric coordinates of each divided region are obtained.
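The calculation described here can be sketched in a few lines. The sketch uses the standard forms of the three quantities: the centroid of a triangle is the mean of its vertices, its area is half the cross product of two edge vectors, and the centroid of the polygon is the area-weighted mean of the triangle centroids. It is an assumption that these match formulas (1) to (3) term by term. The polygon is split into a fan of triangles around its first vertex, and signed areas are used so that non-convex outlines are handled as well; the function names are hypothetical.

```python
def triangle_centroid(p1, p2, p3):
    """Centroid of a triangle: the mean of its three vertices."""
    return ((p1[0] + p2[0] + p3[0]) / 3.0, (p1[1] + p2[1] + p3[1]) / 3.0)


def triangle_signed_area(p1, p2, p3):
    """Signed area: positive for counter-clockwise vertex order."""
    return 0.5 * ((p2[0] - p1[0]) * (p3[1] - p1[1])
                  - (p3[0] - p1[0]) * (p2[1] - p1[1]))


def polygon_centroid(vertices):
    """Area-weighted centroid of a simple polygon given as ordered (x, y) pairs."""
    if len(vertices) < 3:
        raise ValueError("a polygon needs at least three vertices")
    origin = vertices[0]
    total_area = 0.0
    weighted_x = 0.0
    weighted_y = 0.0
    # A polygon with m vertices yields m - 2 fan triangles.
    for i in range(1, len(vertices) - 1):
        area = triangle_signed_area(origin, vertices[i], vertices[i + 1])
        cx, cy = triangle_centroid(origin, vertices[i], vertices[i + 1])
        total_area += area
        weighted_x += area * cx
        weighted_y += area * cy
    if total_area == 0:
        raise ValueError("degenerate polygon with zero area")
    return weighted_x / total_area, weighted_y / total_area
```

For example, `polygon_centroid([(0, 0), (4, 0), (4, 4), (0, 4)])` gives the center of the square, (2.0, 2.0).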

After calculation, the barycentric coordinates of the three kinds of regions are plotted in Fig. 4. The image size in Fig. 4 is the average size of all posters, 860 * 1010 pixels. Figure 4(a) shows that the salient regions on the background image are concentrated around the center of the poster in an oval shape. Figure 4(b) shows the barycentric coordinates of the salient regions on the “figures”, which form a rectangular shape. Figure 4(c) shows the barycentric coordinates of the “figures”, which form a clear cross shape that reflects a certain layout alignment rule. Note that the barycentric coordinates marked here do not distinguish between levels of saliency strength.

4.3 Position of the Salient Region on the Background Image

The study of the positional characteristics of salient regions on the background image better helps to place the cropping frame in an appropriate position.

More Salient Region on the Background Image

In the experiment, the eye tracker records the fixation points of each participant on the poster. Based on all participants’ fixation points, the software automatically generates the areas of interest, that is, the salient regions on the poster. A region watched by more participants is more salient, and the software expresses the saliency of each region as a value from 0 to 100. All posters were compared to obtain absolute saliency values. Figure 5(a), (b), and (c) show the positions of regions with saliency values ≤50, >50, and >90, respectively. An inverted triangle gradually emerges from Fig. 5(a) to (c). Figure 5(d) shows the location of the most salient region on the background image of each poster, which better represents the characteristic salient position of each poster; the distribution of these position coordinates is a clear inverted triangle. The salient regions with weaker saliency are usually distributed closer to the center and lower part, and they spread more uniformly to the surroundings, while the position distribution of the salient regions with stronger saliency is closer to the picture. Along the horizontal axis of the picture, the regions with stronger saliency are more concentrated in the center.

In general, the more salient areas of the background image in a poster are concentrated around the center of the poster. The closer a salient position lies to the middle of the horizontal axis, the more widely the positions spread along the vertical axis. Most fundamentally, the closer a position is to the center of the poster, the higher the probability that a salient region appears there. The coordinates of the most salient region in each poster are used for the further analysis.

Distribution Characteristics of the Salient Region on the Background

To study the characteristics of the salient region of the background image within the entire poster layout, four variables are proposed: 1. The deviation of the most salient region on the background from the center of the poster. 2. The deviation of the most salient region on the background from the “figures”. 3. The horizontal barycentric coordinate of the most salient region on the background image. 4. The vertical barycentric coordinate of the most salient region on the background image. After the samples that did not form a salient region on the background image were deleted, 165 samples remained. The analysis shows that all of these variables conform to the normal distribution.

The deviation from the center of the poster is calculated as the distance from the most salient region on the background to the center, and the deviation from the “figures” is calculated as the average distance from the most salient region to the “figures”. Comparing these two variables shows that the latter is much larger than the former.
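The two distance variables can be sketched as follows. This is an illustration under assumptions: each region is represented by its barycentric coordinates, distances are Euclidean, and the poster center is taken as half the width and height. The function names are hypothetical.

```python
import math


def distance(p, q):
    """Euclidean distance between two (x, y) points."""
    return math.hypot(p[0] - q[0], p[1] - q[1])


def deviation_from_center(region_center, poster_size):
    """Distance from a salient region's barycenter to the poster center."""
    width, height = poster_size
    return distance(region_center, (width / 2.0, height / 2.0))


def mean_distance_to_figures(region_center, figure_centers):
    """Average distance from a salient region to the barycenters of the figures."""
    if not figure_centers:
        raise ValueError("at least one figure is required")
    total = sum(distance(region_center, c) for c in figure_centers)
    return total / len(figure_centers)
```

For a poster of the average size 860 * 1010, a region centered at (433, 509) deviates from the poster center (430, 505) by 5 pixels.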

Although the distances from the salient region on the background image to the center of the poster and to the “figures” describe how remote the salient region is, they cannot be used directly in actual operations. Therefore, the horizontal and vertical barycentric coordinates of the most salient region on the background image are analyzed. All x and y coordinates are normalized. The qualitative analysis above suggests that the distribution of the y coordinate differs depending on the segment in which the x coordinate lies. The x-axis is therefore divided into 5 segments: 0–0.2, 0.2–0.4, 0.4–0.6, 0.6–0.8, and 0.8–1.0. The distribution curves of the y coordinate for the intervals 0.2–0.4, 0.4–0.6, and 0.6–0.8 are shown in Fig. 6 (x has almost no samples in the intervals 0–0.2 and 0.8–1.0, so these are not shown in the figure). Figure 6 shows that the distribution of the y coordinate differs significantly depending on the interval of the x coordinate. This is especially true of the skewness: when x is in the interval 0.2–0.4 or 0.6–0.8, the distribution of the y coordinate shifts clearly to the left. The skewness values of the x and y coordinates are both slightly greater than 0, that is, the salient region of the background as a whole tends to shift toward the upper left.
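The segment-wise analysis can be sketched as follows: the normalized x coordinate selects one of five equal segments, and the skewness of the y coordinates is computed per segment. This is a minimal sketch, assuming coordinates normalized to [0, 1] and the population (moment-based) skewness; statistical packages often report a bias-adjusted variant, so the exact values may differ from those behind Fig. 6. The function names are hypothetical.

```python
def group_y_by_x_segment(points, segments=5):
    """Group y values by the equal-width x segment each point falls into."""
    groups = {index: [] for index in range(segments)}
    for x, y in points:
        # x == 1.0 is assigned to the last segment.
        index = min(int(x * segments), segments - 1)
        groups[index].append(y)
    return groups


def skewness(values):
    """Population skewness: third central moment over variance ** 1.5."""
    n = len(values)
    if n < 3:
        raise ValueError("at least three values are required")
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n
    m3 = sum((v - mean) ** 3 for v in values) / n
    if m2 == 0:
        return 0.0
    return m3 / m2 ** 1.5
```

A positive skewness means that most values sit at the low end with a tail toward larger values, which is the sense in which a distribution is described as shifted to the left.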

Typographical Factors that Influence the Location of Salient Region on Background

In a layout design, there are many factors that affect the location of the salient regions on the background image. The following six factors are listed here:

- The length of the poster.
- The width of the poster.
- The number of “figures” on the poster.
- The area ratio of the “figures” on the poster.
- The layout category.
- The role of the background images.

According to the role of the background image, the posters can be divided into 2 categories, as shown in Fig. 1. To consider the influencing factors more comprehensively, a second classification by layout category is introduced. The layout category relates to the text in the poster: it categorizes posters by the amount of text. As shown in Fig. 7, type 1 has almost no text, logos, or other elements; type 2 has only one line of text or one text segment; and type 3 has at least two text segments.

Analysis of variance shows that the layout category has no effect on the position of the salient region on the background. The role of the background image in the poster, however, does affect the position of the salient region on the background image. According to the one-way analysis of variance, the average distance from the “figures” in type 1 (211.47) is significantly lower than that in type 2 (408.47). Under this classification, the average distance between the salient region on the background image and the center of the poster is also lower in type 1. The comparison is shown in Fig. 8(a).

Pearson correlation analysis was then performed between the six layout factors above and the positional variables of the salient region on the background image. Two factors have a greater impact on the position of the salient region on the background image: the area of the “figures” in the poster and the role of the background image in the poster. Only these two factors affect all of the positional variables. In addition, the area ratio of the “figures” has a strong negative correlation with the x-coordinate of the salient region on the background (the correlation coefficient is −0.646).
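For readers who want to reproduce this kind of analysis, the Pearson correlation coefficient between one layout factor and one positional variable can be computed as in the sketch below. The function name `pearson_r` is hypothetical, and the data in the usage note are made-up toy numbers, not the measurements behind the reported coefficient.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equally long sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length (at least 2)")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return covariance / math.sqrt(var_x * var_y)
```

With toy data such as figure area ratios `[10, 20, 30, 40]` and x coordinates `[0.7, 0.6, 0.45, 0.4]`, the result is strongly negative, the same direction as the relationship reported above.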

In general, when the background image contains main content, such as the main promotional product or a portrait, the salient region on the background image is farther from the center of the poster or the “figures”. The “figures” in the poster are strongly salient, and the content of the background image also attracts considerable attention from the participants, so the two need to stay apart and not interfere with each other. When the background image mainly serves as a foil, the salient regions on the background image are closer to the “figures” and to the center of the poster. The area of the “figures” in the poster has a strong negative correlation with the location of the salient region on the background image: when the “figures” occupy a larger area of the poster, the salient regions on the background tend to lie on the left side of the poster.

Fig. 8. (a) Comparison of the average distance from the salient region on the background to the center of the poster and to the “figures” under different roles of the background image. The distance is relative to the 860 * 1010 poster size. (b) Comparison of the area ratio of the “figures” in the poster (%), the area ratio of the salient region on the “figures” (%), and the area ratio of the salient region on the background image (%) under different roles of the background image.

4.4 Area of the Salient Region on the Background Image

The area characteristics of the salient region on the background image are studied in order to better scale the image. The salient region on the image should be scaled to a reasonable size before cropping.

The Area Characteristics of the Most Salient Region in the Background Image

Three types of regions are distinguished in the study, as shown in Fig. 3(c): the regions of the “figures” in the poster, the salient regions on the “figures”, and the salient regions on the background image. Tobii Studio analysis software is used to obtain the area ratio of each of these regions in its poster. The area ratio of the salient region on the “figures” is nearly the same as the area ratio of the salient region formed on the background image. This shows that the area formed by the fixation points is almost the same on the “figure” and on the “ground”. Researchers who want to study the relationship between “figure” and “ground” in posters through eye movement data could therefore consider the fixation time or the order of the fixation points instead.

Layout Factors that Affect the Area of the Background Salient Region

Pearson correlation analysis was performed between the 6 layout factors mentioned above and the area of the salient region on the background. The area of the “figures” and the number of “figures” in the poster both have a strong negative correlation with the area of the salient region on the background image. The larger the area of the “figures” in a poster, the smaller the area of the salient region on the background image (the correlation coefficient is −0.696). Because the layout category is, to some extent, also based on the amount of text in the poster, it too has a certain negative correlation with the area of the salient region on the background image.

The role of the background image in the poster also affects the area of the salient region on the background image, as shown in Fig. 8(b). The average area ratio of the salient region on the background image in type 1 (0.64) is significantly lower than that in type 2 (1.80). The area of the salient region on the “figures” is consistent across the two roles of the background image.

4.5 Cut the Background Image with the Saliency Characteristics

The analysis shows that the position of the salient region on the background image is negatively correlated with the area of the “figures” in the poster, and that the negative correlation is stronger for the x coordinate. The area of the salient region on the background image is also negatively correlated with the area of the “figures” in the poster. In addition, the role that the background image plays in the poster affects both the position and the area of the salient region on the background image. Although several influencing factors have been identified, a simple linear model is not enough to predict the coordinates of the salient region on the background image (the area of the “figures” in the poster explains only about 23% of the variation in the coordinates), and the underlying relationship needs further study. Therefore, in this paper, an x-coordinate is randomly generated from the distribution characteristics of the x-coordinate of the salient region on the background, and a y-coordinate that matches the corresponding distribution characteristics is then randomly generated for that x-coordinate. Next, the size of the salient area in the background image is determined, and finally these saliency characteristics are used to cut the image.

This research uses the Monte Carlo method to randomly generate x-coordinates that conform to the distribution rule. The method first generates 2 random numbers together with the probability that each of them is selected. If the probability of the first random number is greater than that of the second, the first random number is used. The distribution characteristics of the x coordinate are shown in Fig. 6. Once the value of x is determined, the y coordinate is randomly generated according to the distribution of y that corresponds to the interval in which x lies. Both the x and y coordinates are related to the area of the salient region on the background image: they are positively correlated with the area of the salient region. This correlation indicates that, in a poster, the area of the background salient region gradually increases from the upper left corner to the lower right corner.
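One common way to realize this kind of Monte Carlo sampling is acceptance-rejection sampling, sketched here. Whether it matches the authors' exact procedure is an assumption. The normal shape follows the earlier statement that the positional variables conform to the normal distribution, but every numeric parameter (`X_MEAN`, `X_STD`, and the per-segment values in `Y_PARAMS_BY_SEGMENT`) is a made-up placeholder, not a value from the paper.

```python
import math
import random


def normal_pdf(value, mean, std):
    """Density of a normal distribution at value."""
    z = (value - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))


def rejection_sample(pdf, pdf_max, rng, low=0.0, high=1.0, max_tries=100000):
    """Draw one value in [low, high] whose density follows pdf."""
    for _ in range(max_tries):
        candidate = rng.uniform(low, high)      # first random number
        threshold = rng.uniform(0.0, pdf_max)   # second random number
        # Keep the candidate with probability pdf(candidate) / pdf_max.
        if threshold <= pdf(candidate):
            return candidate
    raise RuntimeError("no sample accepted; check pdf_max")


# Hypothetical placeholder parameters (mean, std) in normalized coordinates.
X_MEAN, X_STD = 0.5, 0.15
Y_PARAMS_BY_SEGMENT = {
    0: (0.45, 0.10), 1: (0.40, 0.15), 2: (0.45, 0.20),
    3: (0.40, 0.15), 4: (0.45, 0.10),
}


def sample_salient_center(rng):
    """Sample x first, then y from the distribution of x's segment."""
    x = rejection_sample(lambda v: normal_pdf(v, X_MEAN, X_STD),
                         normal_pdf(X_MEAN, X_MEAN, X_STD), rng)
    segment = min(int(x * 5), 4)
    mean, std = Y_PARAMS_BY_SEGMENT[segment]
    y = rejection_sample(lambda v: normal_pdf(v, mean, std),
                         normal_pdf(mean, mean, std), rng)
    return x, y
```

Calling `sample_salient_center(random.Random(0))` repeatedly yields reproducible candidate positions in normalized coordinates; fitted values from the eye movement data would replace the placeholders.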

Here, the x-coordinate ratio and the y-coordinate ratio are used as independent variables and the S-BG area as the dependent variable in a linear regression analysis, which yields a simple linear model. The R² value of this model is 0.239. The model is: area ratio of the salient region on the background image = 0.194 + 3.309 × x coordinate − 0.549 × y coordinate (the x and y coordinates are standardized here). The Durbin-Watson (DW) value is close to 2, which indicates that the residuals of the model are not autocorrelated and supports the model. Figure 9 shows some sets of generated coordinate points and areas on pictures of 1000 × 1000 pixels. Ten sets of data were generated for each picture.
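
As a small worked illustration of the model formula, the sketch below evaluates it for standardized inputs. The coefficients are those reported above; the function names and the `standardize` helper are hypothetical, and the mean and standard deviation used for standardization are not reported in the text, so they must be supplied by the caller.

```python
def standardize(value: float, mean: float, std: float) -> float:
    """Convert a raw coordinate ratio to a z-score.

    The mean and std are not given in the paper; they are caller-supplied
    assumptions here.
    """
    return (value - mean) / std


def predict_area_ratio(x_std: float, y_std: float) -> float:
    """Area ratio of the salient region from standardized x and y coordinates."""
    return 0.194 + 3.309 * x_std - 0.549 * y_std


if __name__ == "__main__":
    # At the mean position (both z-scores zero) only the intercept remains.
    print(predict_area_ratio(0.0, 0.0))
```

Since the model explains only about 24% of the variation (R² = 0.239), its output is best treated as a rough starting value rather than a precise prediction.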

The randomly generated picture can serve directly as a cutting suggestion map. Based on the saliency map of each image, it displays several cropping suggestions for the image, including where the salient region of the image should be placed and how the image should be scaled and cut. Note that the generated values are all ratios. In practice, the required poster width and height are substituted in to obtain the actual coordinate values and the actual area of the salient region. Figures 10(e) and 11(e) show the effect of the cutting suggestion map at different specific sizes. Figures 10 and 11 show the complete process of cutting an image using the generated cutting suggestion map and then producing a layout design. The saliency maps of the images cropped here come from the model for predicting human eye fixations constructed by Cornia et al. [18].
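
The conversion from ratios to a concrete crop can be sketched as below. This is an illustrative reading rather than the procedure used for Figs. 10 and 11: it assumes that the x and y ratios are relative to the poster width and height, that the area ratio is relative to the poster area, that the salient region of the source image is summarized by a centre point and a pixel area (hypothetical parameters `sal_cx`, `sal_cy`, `sal_area`), and that the image is scaled uniformly.

```python
import math
from dataclasses import dataclass


@dataclass
class CropPlan:
    scale: float  # uniform scale factor applied to the source image
    left: float   # x offset of the crop window in the scaled image
    top: float    # y offset of the crop window in the scaled image
    width: int    # crop width, equal to the poster width
    height: int   # crop height, equal to the poster height


def plan_crop(x_ratio: float, y_ratio: float, area_ratio: float,
              poster_w: int, poster_h: int,
              sal_cx: float, sal_cy: float, sal_area: float) -> CropPlan:
    """Turn one generated suggestion (ratios) into a scale and crop window."""
    # Ratios become pixel targets once the poster size is known.
    target_x = x_ratio * poster_w
    target_y = y_ratio * poster_h
    target_area = area_ratio * poster_w * poster_h

    # Area grows with the square of a uniform scale factor.
    scale = math.sqrt(target_area / sal_area)

    # Shift the window so the scaled salient centre lands on the target point.
    left = sal_cx * scale - target_x
    top = sal_cy * scale - target_y
    return CropPlan(scale, left, top, poster_w, poster_h)


if __name__ == "__main__":
    plan = plan_crop(0.5, 0.4, 0.25, 1000, 1000,
                     sal_cx=300, sal_cy=200, sal_area=62500)
    print(plan)
```

A negative `left` or `top`, or a window extending past the scaled image, means that the source image cannot cover the poster at that placement, so another generated suggestion would have to be tried.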

5 Conclusion

By studying the saliency characteristics of background images in posters, this article aims to confirm that the saliency of an image can be used to cut it appropriately as a background image for typographic design. The study shows that the saliency of background images in poster design has its own characteristics in terms of the location distribution and the area of the salient region. When an image serves as a poster background, the locations of its salient area form an inverted triangle, and the probability density is higher at the center of the poster. A simple linear model is also established, with which the area of the salient region can be roughly calculated from the known coordinates of the salient region. The correlation analysis shows that the area of the salient region gradually increases from the upper left corner to the lower right corner of the poster. Using these saliency rules, an image can be cut so that the cropped picture is better suited to serve as a background image in layout design.

In the future, intelligent design will better serve society and become a powerful assistant for designers. This study provides a basis for future automatic layout design. When a machine uses an image as the background, cutting the image is an important step, and cutting an image so that it is more suitable for typesetting is the first step of that research. Although many previous studies have used saliency to cut images, they focus on how to quickly and accurately locate salient objects and on the accuracy of the border areas, so as to ensure that important areas are retained. How the image is used after cutting is not taken into account. The research in this article focuses on the saliency of a special kind of image, the background image. The saliency characteristics of this kind of image are studied and then used to cut out images suitable for a particular use. This provides a basis for the general direction of future intelligent typesetting.

6 Discussion and Future Work

This article mainly studies the saliency characteristics of the background image. However, most of the posters in this study are vertical, which may have a bearing on the outcome. The characteristics of posters with a horizontal composition may not be well captured by these results.

This paper has proposed several factors that affect the saliency of the background image. Among them, the role of the background image and the area of the “figure” in the poster are the more influential factors found so far. The relationship between these influencing factors and saliency has not been studied further in this article. Simple linear models may not explain these relationships; there may be more complicated non-linear relationships that deserve further in-depth study.

References

1. Borji, A., Cheng, M.-M., Jiang, H., et al.: Salient object detection: a benchmark. IEEE Trans. Image Process. 24, 5706–5722 (2015). https://doi.org/10.1109/TIP.2015.2487833

2. Zhao, R., Ouyang, W., Li, H., et al.: Saliency detection by multi-context deep learning. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 1265–1274 (2015). https://doi.org/10.1109/cvpr.2015.7298731

3. Itti, L., Koch, C., Niebur, E.: A model of saliency-based visual attention for rapid scene analysis. IEEE Trans. Pattern Anal. Mach. Intell. 20, 1254–1259 (1998). https://doi.org/10.1109/34.730558

4. Liu, T., Yuan, Z., Sun, J., et al.: Learning to detect a salient object. IEEE Trans. Pattern Anal. Mach. Intell. 33, 353–367 (2010). https://doi.org/10.1109/CVPR.2007.383047

5. Achanta, R., Hemami, S., Estrada, F., et al.: Frequency-tuned salient region detection. In: 2009 IEEE Conference on Computer Vision and Pattern Recognition, pp. 1597–1604. IEEE (2009). https://doi.org/10.1109/cvpr.2009.5206596

6. Borji, A.: What is a salient object? A dataset and a baseline model for salient object detection. IEEE Trans. Image Process. 24, 742–756 (2014). https://doi.org/10.1109/TIP.2014.2383320

7. Bylinskii, Z., Kim, N.W., O’Donovan, P., et al.: Learning visual importance for graphic designs and data visualizations. In: Proceedings of the 30th Annual ACM Symposium on User Interface Software and Technology, pp. 57–69 (2017). https://doi.org/10.1145/3126594.3126653

8. Jahanian, A., Liu, J., Tretter, D.R., et al.: Automatic design of magazine covers (2012). https://doi.org/10.1117/12.914596

9. Jahanian, A., Vishwanathan, S.V.N., Allebach, J.P.: Learning visual balance from large-scale datasets of aesthetically highly rated images. In: Proceedings of SPIE - The International Society for Optical Engineering, vol. 9394 (2015). https://doi.org/10.1117/12.2084548

10. Chen, L.-Q., Xie, X., Fan, X., et al.: A visual attention model for adapting images on small displays. Multimed. Syst. 9, 353–364 (2003). https://doi.org/10.1007/s00530-003-0105-4

11. Suh, B., Ling, H., Bederson, B.B., et al.: Automatic thumbnail cropping and its effectiveness. In: Proceedings of the 16th Annual ACM Symposium on User Interface Software and Technology, pp. 95–104 (2003). https://doi.org/10.1145/964696.964707

12. Santella, A., Agrawala, M., DeCarlo, D., et al.: Gaze-based interaction for semi-automatic photo cropping. In: Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, pp. 771–780 (2006). https://doi.org/10.1145/1124772.1124886

13. Marchesotti, L., Cifarelli, C., Csurka, G.: A framework for visual saliency detection with applications to image thumbnailing. In: 2009 IEEE 12th International Conference on Computer Vision, pp. 2232–2239. IEEE (2009). https://doi.org/10.1109/iccv.2009.5459467

14. Chen, J., Bai, G., Liang, S., et al.: Automatic image cropping: a computational complexity study. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 507–515 (2016). https://doi.org/10.1109/cvpr.2016.61

15. Borji, A., Sihite, D.N., Itti, L.: What stands out in a scene? A study of human explicit saliency judgment. Vis. Res. 91, 62–77 (2013). https://doi.org/10.1016/j.visres.2013.07.016

16. Xu, J., Jiang, M., Wang, S., et al.: Predicting human gaze beyond pixels. J. Vis. 14, 28 (2014). https://doi.org/10.1167/14.1.28

17. Koehler, K., Guo, F., Zhang, S., et al.: What do saliency models predict? J. Vis. 14, 14 (2014). https://doi.org/10.1167/14.3.14

18. Cornia, M., Baraldi, L., Serra, G., et al.: Predicting human eye fixations via an LSTM-based saliency attentive model. IEEE Trans. Image Process. 27, 5142–5154 (2018). https://doi.org/10.1109/TIP.2018.2851672

© 2020 Springer Nature Switzerland AG

Zhu, L., Cao, X., Fang, Y., Zhang, L., Li, X. (2020). Application of Visual Saliency in the Background Image Cutting for Layout Design. In: Meiselwitz, G. (eds) Social Computing and Social Media. Design, Ethics, User Behavior, and Social Network Analysis. HCII 2020. Lecture Notes in Computer Science, vol 12194. Springer, Cham. https://doi.org/10.1007/978-3-030-49570-1_12

DOI: https://doi.org/10.1007/978-3-030-49570-1_12

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-49569-5

Online ISBN: 978-3-030-49570-1

eBook Packages: Computer Science, Computer Science (R0)