Abstract

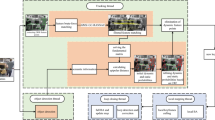

We present inertial safety maps (ISM), a novel scene representation designed for fast detection of obstacles in scenarios involving camera or scene motion, such as robot navigation and human-robot interaction. ISM is a motion-centric representation that encodes both scene geometry and motion; different camera motions result in different ISMs for the same scene. We show that ISM can be estimated with a two-camera stereo setup without explicitly recovering scene depths, by measuring differential changes in disparity over time. We develop an active, single-shot structured light-based approach for robustly measuring ISM in challenging scenarios with textureless objects and complex geometries. The proposed approach is computationally lightweight and can detect intricate obstacles (e.g., thin wire fences) by processing high-resolution images at high speeds with limited computational resources. ISM can be readily integrated with depth and range maps as a complementary scene representation, potentially enabling high-speed navigation and robotic manipulation in extreme environments with minimal device complexity.
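
To illustrate why differential changes in disparity can be enough without recovering depth, consider a rectified stereo pair with baseline \(b\) and focal length \(f\), so that depth is \(z = bf/d\) for disparity \(d\). Differentiating gives \(\dot{d} = -bf\,\dot{z}/z^2\), hence \(z/(-\dot{z}) = d/\dot{d}\): the time until a point reaches the camera depends only on disparity and its rate of change, and \(b\) and \(f\) cancel. The sketch below uses this time-to-contact quantity purely as an assumed stand-in for a per-pixel safety measure; the abstract does not define ISM precisely, and the function name `time_to_contact`, its parameters, and the finite-difference estimate are hypothetical choices, not the authors' method or their structured-light measurement.

```python
import numpy as np

def time_to_contact(disp_prev, disp_curr, dt, eps=1e-6):
    """Illustrative per-pixel time-to-contact from two disparity maps.

    Assumes a rectified stereo pair, so depth z = b*f/d and
    z / (-dz/dt) = d / (dd/dt); no depth, baseline, or focal length
    is needed. This is a sketch, not the paper's ISM estimator.
    """
    disp_prev = np.asarray(disp_prev, dtype=float)
    disp_curr = np.asarray(disp_curr, dtype=float)

    # Finite-difference estimate of the disparity rate of change.
    rate = (disp_curr - disp_prev) / dt

    # Pixels that are static or receding never reach the camera: inf.
    ttc = np.full(disp_curr.shape, np.inf)
    approaching = rate > eps
    ttc[approaching] = disp_curr[approaching] / rate[approaching]
    return ttc


if __name__ == "__main__":
    # Hypothetical numbers: one point approaching, one static, one receding.
    prev = np.array([25.0, 10.0, 12.0])
    curr = np.array([26.0, 10.0, 11.0])
    print(time_to_contact(prev, curr, dt=0.1))
```

Because the estimate is a ratio of disparities, it is unaffected by calibration scale, but it is sensitive to noise when the disparity change between frames is small; a practical system would likely need temporal or spatial filtering, which this sketch omits.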

Notes

1. In contrast, a 3D map is a motion-invariant scene representation.

2. The method is “single-shot” in that we compute \(N\) ISMs from \(N + 1\) frames (one new frame per ISM, apart from a single initial frame).

3. It is possible to recover absolute phase using a unit-frequency sinusoid, but at considerably lower phase-recovery precision than with high-frequency sinusoids.

Acknowledgement

This research is supported in part by the DARPA REVEAL program and a Wisconsin Alumni Research Foundation (WARF) Fall Competition award.

Copyright information

© 2020 Springer Nature Switzerland AG

About this paper

Cite this paper

Ma, S., Gupta, M. (2020). Inertial Safety from Structured Light. In: Vedaldi, A., Bischof, H., Brox, T., Frahm, J.-M. (eds) Computer Vision – ECCV 2020. ECCV 2020. Lecture Notes in Computer Science, vol 12368. Springer, Cham. https://doi.org/10.1007/978-3-030-58592-1_44

DOI: https://doi.org/10.1007/978-3-030-58592-1_44

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-58591-4

Online ISBN: 978-3-030-58592-1

eBook Packages: Computer Science (R0)