Abstract

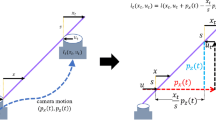

To study the impact of camera motion speed on image- and video-based tasks, we propose an extendable synthetic dataset built from real image sequences. In our dataset, image sequences with different camera speeds feature the same scene and the same camera trajectory. To synthesize a photo-realistic image sequence with fast camera motion, we propose an image blur synthesis method that generates blurry images from their sharp counterparts, the camera motion, and the reconstructed 3D scene model. Experiments show that our synthetic blurry images are more realistic than those synthesized by existing methods. With our synthetic dataset, one can study how an algorithm performs under different camera motions. In this paper, we evaluate several mainstream methods for two relevant tasks: visual SLAM and image deblurring. From these evaluations, we draw conclusions about the robustness of these methods to different camera speeds and to image motion blur.
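
For orientation, the sketch below illustrates the general idea of synthesizing motion blur from sharp frames. It is not the method proposed in this paper, which additionally uses the camera motion and the reconstructed 3D scene model. It shows plain frame averaging, a simple approach used in some deblurring datasets. The function name `synthesize_blur` and the gamma value of 2.2 (a rough stand-in for the camera response) are assumptions made for illustration.

```python
import numpy as np

def synthesize_blur(sharp_frames, gamma=2.2):
    """Average sharp uint8 frames that fall inside one simulated exposure.

    Hypothetical helper for illustration only: plain frame averaging,
    not the 3D-model-based synthesis described in the abstract.
    """
    if len(sharp_frames) == 0:
        raise ValueError("need at least one sharp frame")
    # Scale to [0, 1] and stack into shape (N, H, W[, C]).
    stack = np.stack([f.astype(np.float64) / 255.0 for f in sharp_frames])
    # Assumed gamma curve: move to approximately linear intensity.
    linear = stack ** gamma
    # Integrating light over the exposure is approximated by a mean.
    blurred = linear.mean(axis=0)
    # Return to the display domain and quantize back to uint8.
    out = blurred ** (1.0 / gamma)
    return np.clip(np.rint(out * 255.0), 0, 255).astype(np.uint8)

# Usage: a bright dot moving one pixel per frame smears into a streak.
frames = []
for col in range(3):
    frame = np.zeros((1, 5), dtype=np.uint8)
    frame[0, col] = 255
    frames.append(frame)
streak = synthesize_blur(frames)
```

Averaging more frames per output image simulates a longer exposure or a faster camera, which is why this kind of baseline needs densely sampled sharp input to avoid visible ghosting.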

Acknowledgement

This work was funded by the National Science Foundation of China (61931008, 61671196, 61701149, 61801157, 61971268, 61901145, 61901150, 61972123), the National Science Major Foundation of Research Instrumentation of PR China under Grant 61427808, the Zhejiang Province Nature Science Foundation of China (R17F030006, Q19F010030), and the Higher Education Discipline Innovation Project 111 Project D17019.

Copyright information

© 2020 Springer Nature Switzerland AG

About this paper

Cite this paper

Zhu, Z., Xu, F., Li, M., Wang, Z., Yan, C. (2020). Challenges from Fast Camera Motion and Image Blur: Dataset and Evaluation. In: Bartoli, A., Fusiello, A. (eds) Computer Vision – ECCV 2020 Workshops. ECCV 2020. Lecture Notes in Computer Science, vol 12539. Springer, Cham. https://doi.org/10.1007/978-3-030-68238-5_16

DOI: https://doi.org/10.1007/978-3-030-68238-5_16

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-68237-8

Online ISBN: 978-3-030-68238-5

eBook Packages: Computer Science (R0)