Abstract

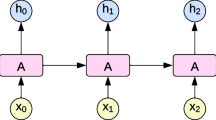

Hierarchical structure is a common characteristic of certain kinds of videos (e.g., sports videos and game videos): such videos are composed hierarchically of several actions whose labels can be enumerated, and temporal dependencies exist among segments of different scales. Our approach rests on two intuitions. First, actions are the fundamental units by which people understand these videos. Second, summarization is naturally a process of observation and refinement, i.e., observing segments in a video and hierarchically refining the boundaries of an important action according to the video's hierarchical structure. Based on these insights, we generate action proposals to exploit the structure and formulate summarization as a hierarchical refining process. We train a hierarchical summarization network with deep Q-learning (HQSN) to carry out the refining process and explore temporal dependencies. In addition, we collect a new dataset of structured game videos with fine-grained action and importance annotations. The experimental results demonstrate the effectiveness of our framework.
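To make the coarse-to-fine refining idea concrete, the following is a minimal sketch in Python and not the authors' HQSN. The abstract does not specify the state, action set, reward, or network, so everything here is an assumption for illustration: the action names, the halving step schedule, and `toy_q`, a hand-written scoring function standing in for a learned Q-network. The sketch only shows the control flow of greedily picking the highest-valued boundary adjustment at a coarse scale and then repeating at finer scales.

```python
from typing import Callable, List, Tuple

Segment = Tuple[int, int]  # half-open frame range [start, end)

# Hypothetical action set. "stop" comes first so that ties keep the
# current segment, which guarantees the greedy loop terminates.
ACTIONS = ("stop", "start_left", "start_right", "end_left", "end_right")


def apply_action(seg: Segment, action: str, step: int, n_frames: int) -> Segment:
    """Return the segment after moving one boundary by `step` frames."""
    start, end = seg
    if action == "start_left":
        start = max(0, start - step)
    elif action == "start_right":
        start = min(end - 1, start + step)
    elif action == "end_left":
        end = max(start + 1, end - step)
    elif action == "end_right":
        end = min(n_frames, end + step)
    return (start, end)  # "stop" leaves the segment unchanged


def toy_q(scores: List[float], seg: Segment, action: str, step: int) -> float:
    """Stand-in for a learned Q-network (assumption, not from the paper).

    Values an action by the segment it leads to: frames scoring above 0.5
    add to the value, frames below 0.5 subtract from it.
    """
    start, end = apply_action(seg, action, step, len(scores))
    return sum(s - 0.5 for s in scores[start:end])


def refine(
    scores: List[float],
    seg: Segment,
    q_fn: Callable[[List[float], Segment, str, int], float] = toy_q,
    coarse_step: int = 4,
    max_iters: int = 50,
) -> Segment:
    """Greedily refine segment boundaries, halving the step at each level."""
    step = coarse_step
    while step >= 1:
        for _ in range(max_iters):
            best = max(ACTIONS, key=lambda a: q_fn(scores, seg, a, step))
            if best == "stop":
                break
            seg = apply_action(seg, best, step, len(scores))
        step //= 2  # move to a finer temporal scale
    return seg


# Toy per-frame importance: frames 6..13 belong to the important action.
scores = [0.0] * 6 + [1.0] * 8 + [0.0] * 6
print(refine(scores, (4, 12)))  # -> (6, 14)
```

In a deep Q-learning setting, `toy_q` would be replaced by a network trained from rewards, and the greedy `max` would typically be combined with exploration during training. Those details are outside what the abstract states.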

Copyright information

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

Li, W. et al. (2021). From Coarse to Fine: Hierarchical Structure-Aware Video Summarization. In: Del Bimbo, A., et al. Pattern Recognition. ICPR International Workshops and Challenges. ICPR 2021. Lecture Notes in Computer Science, vol 12664. Springer, Cham. https://doi.org/10.1007/978-3-030-68799-1_6

DOI: https://doi.org/10.1007/978-3-030-68799-1_6

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-68798-4

Online ISBN: 978-3-030-68799-1

eBook Packages: Computer Science, Computer Science (R0)