Abstract

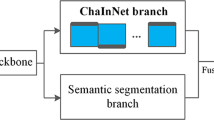

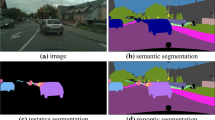

In this work we introduce a new Bounding-Box Free Network (BBFNet) for panoptic segmentation. Panoptic segmentation is an ideal problem for proposal-free methods, as it already requires per-pixel semantic class labels. We use this observation to exploit class boundaries from off-the-shelf semantic segmentation networks and refine them to predict instance labels. Towards this goal, BBFNet predicts coarse watershed levels and uses them to detect large instance candidates where boundaries are well defined. For smaller instances, whose boundaries are less reliable, BBFNet also predicts instance centers by means of Hough voting followed by mean-shift to reliably detect small objects. A novel triplet loss network helps merge fragmented instances while refining boundary pixels. Our approach is distinct from previous works in panoptic segmentation, which combine a semantic segmentation network with a computationally costly instance segmentation network based on bounding-box proposals, such as Mask R-CNN, to guide the prediction of instance labels using a Mixture-of-Experts (MoE) approach. We benchmark our proposal-free method on the Cityscapes and Microsoft COCO datasets and show competitive performance with other MoE-based approaches while outperforming existing non-proposal-based methods on the COCO dataset. We show the flexibility of our method using different semantic segmentation backbones and provide video results on challenging scenes in the wild in the supplementary material.
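The center-prediction step mentioned above (Hough voting followed by mean-shift) can be illustrated with a small sketch. This is not BBFNet's implementation: the abstract gives no details, so the flat kernel, the bandwidth value, the toy data, and the function names `vote_centers`, `mean_shift`, and `label_modes` are all assumptions made for illustration. Each pixel casts a vote at its own position plus a predicted offset, and mean-shift then moves the votes to their local modes, which act as instance centers.

```python
import numpy as np

def vote_centers(coords, offsets):
    """Hough-style voting: each pixel votes at its position plus its predicted offset."""
    return coords + offsets

def mean_shift(votes, bandwidth=2.0, n_iter=30, tol=1e-3):
    """Flat-kernel mean-shift (an assumed kernel choice, not taken from the paper)."""
    votes = votes.astype(float)
    modes = votes.copy()
    for _ in range(n_iter):
        # Distance from every current mode to every original vote.
        dist = np.linalg.norm(modes[:, None, :] - votes[None, :, :], axis=2)
        inside = (dist <= bandwidth).astype(float)
        # Move each mode to the mean of the votes inside its window.
        new_modes = (inside @ votes) / inside.sum(axis=1, keepdims=True)
        shift = np.abs(new_modes - modes).max()
        modes = new_modes
        if shift < tol:
            break
    return modes

def label_modes(modes, bandwidth=2.0):
    """Assign one instance id per group of modes lying within `bandwidth` of each other."""
    labels = np.full(len(modes), -1, dtype=int)
    centers = []
    for i, mode in enumerate(modes):
        for k, center in enumerate(centers):
            if np.linalg.norm(mode - center) <= bandwidth:
                labels[i] = k
                break
        else:
            centers.append(mode)
            labels[i] = len(centers) - 1
    return labels, np.array(centers)

# Toy data (hypothetical): two small objects whose pixels point at their centers.
rng = np.random.default_rng(0)
coords = np.vstack([rng.uniform(0, 10, size=(20, 2)),
                    rng.uniform(15, 25, size=(20, 2))])
true_centers = np.vstack([np.tile([5.0, 5.0], (20, 1)),
                          np.tile([20.0, 20.0], (20, 1))])
# Simulated network output: offsets to the true center plus a little noise.
offsets = true_centers - coords + rng.normal(0.0, 0.2, size=coords.shape)

votes = vote_centers(coords, offsets)
modes = mean_shift(votes, bandwidth=2.0)
labels, centers = label_modes(modes, bandwidth=2.0)
print(len(centers), "instance centers found")
```

In this sketch the bandwidth doubles as the merge radius when labelling modes. A real system would tune it to the expected object size and would restrict voting to pixels of a single semantic class.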

Notes

1. Source code available from https://github.com/uber-research/UPSNet.

Acknowledgment

We would like to thank Prof. Andrew Davison and Dr. Alexandre Morgand for their critical feedback during the course of this work.

1 Electronic supplementary material

Below is the link to the electronic supplementary material.

Supplementary material 2 (mp4 25265 KB)

Copyright information

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

Bonde, U., Alcantarilla, P.F., Leutenegger, S. (2021). Towards Bounding-Box Free Panoptic Segmentation. In: Akata, Z., Geiger, A., Sattler, T. (eds) Pattern Recognition. DAGM GCPR 2020. Lecture Notes in Computer Science, vol 12544. Springer, Cham. https://doi.org/10.1007/978-3-030-71278-5_23

DOI: https://doi.org/10.1007/978-3-030-71278-5_23

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-71277-8

Online ISBN: 978-3-030-71278-5

eBook Packages: Computer Science, Computer Science (R0)