Abstract

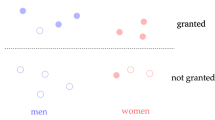

It is said that with great power comes great responsibility. Nowadays, we rely on machine learning systems to make decisions. Unfortunately, these systems suffer from algorithmic bias: they often produce results that are systemically prejudiced due to erroneous assumptions in the machine learning process. Consequently, these systems can contribute to increasing biases in society, which should be avoided. The importance of the topic and its effect on society have made it an active research area in recent years, giving rise to different solutions. In this work, we selected three state-of-the-art techniques that claim to reduce bias in machine learning algorithms (decoupled classifiers, fairness constraints and adversarial learning) and compared their performance over different databases and fairness evaluation metrics. The results show that no system performs best in all aspects and databases, but they give some hints for selecting the best option according to the objective.

Acknowledgements

This work was funded by the Basque Government (ADIAN, IT-980-16; PRE-2013-1-887, BOPV/2013/128/3067); and by the Ministry of Economy and Competitiveness of the Spanish Government and the ERDF (PhysComp, TIN2017-85409-P).

Copyright information

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

Martinez-Eguiluz, M., Irazabal-Urrutia, O., Arbelaitz-Gallego, O. (2021). Towards Fairness in Classification: Comparison of Methods to Decrease Bias. In: Alba, E., et al. Advances in Artificial Intelligence. CAEPIA 2021. Lecture Notes in Computer Science, vol 12882. Springer, Cham. https://doi.org/10.1007/978-3-030-85713-4_9

DOI: https://doi.org/10.1007/978-3-030-85713-4_9

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-85712-7

Online ISBN: 978-3-030-85713-4

eBook Packages: Computer Science, Computer Science (R0)