Abstract

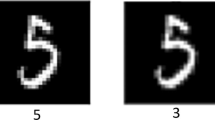

Adversarial attacks threaten the many applications that employ deep neural networks (DNNs). Adversarial training enhances the robustness of DNN-based systems by augmenting the training data with adversarial samples. Projected gradient descent adversarial training (PGD AT), a promising defense method, can resist strong attacks, but it incurs a heavy computation cost. We propose “free” adversarial training with layerwise heuristic learning (LHFAT) to remedy this problem. To reduce the computation cost, we couple model parameter updating with projected gradient descent (PGD) adversarial example updating while repeatedly training on the same mini-batch of data, so that the extra PGD updates come essentially “free”. The learning rate reflects the speed of weight updating, and the weight gradient indicates its efficiency: if weights are frequently updated in opposite directions within one training epoch, there are redundant updates. To raise weight-updating efficiency, we design a new learning scheme, layerwise heuristic learning, which accelerates training convergence by restraining redundant weight updating and boosting efficient weight updating in each layer according to weight gradient information. We demonstrate that LHFAT yields better defense performance on CIFAR-10 while requiring approximately 8% of the GPU training time of PGD AT, and we also validate LHFAT on ImageNet. We have released the code for our proposed method LHFAT at https://github.com/anonymous530/LHFAT.
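The abstract combines two mechanisms: "free" adversarial training, which replays each mini-batch several times and reuses every gradient computation both to update the weights and to take a projected signed-gradient (PGD-style) step on the input perturbation, and a layerwise learning-rate heuristic driven by how often weight gradients reverse direction within an epoch. The sketch below illustrates both on a toy problem under loud assumptions: a pure-Python logistic-regression model stands in for a DNN, its weight vector `w` and bias `b` are treated as two "layers", and the hypothetical `lr_scale` rule (flip-ratio thresholds 0.3/0.6, multipliers 0.5 and 1.5) is invented for illustration. The abstract does not state LHFAT's actual update rule; that is in the authors' released code. The perturbation is also reset for every mini-batch, a simplifying choice.

```python
import math
import random

# Minimal sketch, NOT the authors' LHFAT code. Assumptions: a logistic-regression
# model stands in for a DNN, and its parameter groups "w" and "b" act as layers.


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def sign(v):
    return (v > 0) - (v < 0)


def lr_scale(flip_ratio, low=0.3, high=0.6):
    # Hypothetical rule: damp a layer whose gradient signs flip often (redundant
    # updates) and boost a layer whose gradient signs stay consistent.
    if flip_ratio > high:
        return 0.5
    if flip_ratio < low:
        return 1.5
    return 1.0


def predict(params, x):
    return sigmoid(sum(w * xi for w, xi in zip(params["w"], x)) + params["b"][0])


def free_at_layerwise(data, dim, eps=0.1, replays=4, epochs=5,
                      base_lr=0.1, batch=8, seed=0):
    rng = random.Random(seed)
    data = list(data)  # shuffle a copy, not the caller's list
    params = {"w": [0.0] * dim, "b": [0.0]}
    lr = {k: base_lr for k in params}  # one learning rate per "layer"
    for _ in range(epochs):
        prev = {k: [0] * len(v) for k, v in params.items()}  # last gradient sign
        flips = {k: 0 for k in params}
        steps = {k: 0 for k in params}
        rng.shuffle(data)
        for start in range(0, len(data), batch):
            xb = data[start:start + batch]
            n = len(xb)
            delta = [[0.0] * dim for _ in xb]  # reset per mini-batch (simplification)
            for _ in range(replays):  # replay the same mini-batch
                # One gradient pass yields gradients w.r.t. the parameters AND the
                # inputs, both evaluated at the current weights.
                gw, gb, gdelta = [0.0] * dim, 0.0, []
                for (x, y), d in zip(xb, delta):
                    xa = [xi + di for xi, di in zip(x, d)]
                    err = predict(params, xa) - y  # dLoss/dlogit for cross-entropy
                    gw = [g + err * xi / n for g, xi in zip(gw, xa)]
                    gb += err / n
                    gdelta.append([err * w for w in params["w"]])
                # Descent step per layer, counting gradient-sign reversals.
                for k, g in (("w", gw), ("b", [gb])):
                    for i, gi in enumerate(g):
                        s = sign(gi)
                        if s and prev[k][i]:
                            steps[k] += 1
                            flips[k] += s != prev[k][i]
                        if s:
                            prev[k][i] = s
                        params[k][i] -= lr[k] * gi
                # Signed-gradient ascent on the perturbation, projected back onto
                # the L-infinity ball of radius eps (one PGD step per replay).
                delta = [[min(eps, max(-eps, di + eps * sign(gi)))
                          for di, gi in zip(d, g)]
                         for d, g in zip(delta, gdelta)]
        # End of epoch: choose each layer's learning rate for the next epoch.
        for k in params:
            ratio = flips[k] / steps[k] if steps[k] else 0.0
            lr[k] = base_lr * lr_scale(ratio)
    return params, lr


if __name__ == "__main__":
    gen = random.Random(1)
    pts = [[gen.uniform(-1, 1), gen.uniform(-1, 1)] for _ in range(64)]
    toy = [(p, 1.0 if p[0] + p[1] > 0 else 0.0) for p in pts]
    params, lr = free_at_layerwise(toy, dim=2)
    acc = sum((predict(params, x) > 0.5) == (y > 0.5) for x, y in toy) / len(toy)
    print("per-layer learning rates:", lr, "clean accuracy:", acc)
```

Each replay spends one gradient computation on both the weight update and the perturbation update; in a DNN framework that is a single forward/backward pass, whereas PGD AT with K attack steps needs K extra passes before every weight update. Keeping `lr_scale` separate from the training loop means a different layerwise schedule can be swapped in without touching the free-training logic.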

This work is supported by the NSFC (under Grant 61876130).

Copyright information

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

Zhang, H., Shi, Y., Dong, B., Han, Y., Li, Y., Kuang, X. (2021). Free Adversarial Training with Layerwise Heuristic Learning. In: Peng, Y., Hu, SM., Gabbouj, M., Zhou, K., Elad, M., Xu, K. (eds) Image and Graphics. ICIG 2021. Lecture Notes in Computer Science, vol 12889. Springer, Cham. https://doi.org/10.1007/978-3-030-87358-5_10

DOI: https://doi.org/10.1007/978-3-030-87358-5_10

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-87357-8

Online ISBN: 978-3-030-87358-5

eBook Packages: Computer Science, Computer Science (R0)