Abstract

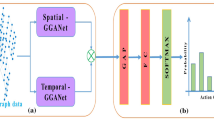

In recent years, graph convolutional networks (GCNs) have shown exceptional ability in skeleton-based action recognition. Mainstream methods typically recognize the movements of each skeleton separately and then fuse the features. This discards the interactive information between two skeletons, and because many actions involve interaction between people (such as a handshake, high-five, or hug), the loss reduces recognition accuracy. To address this issue, we propose a two-stream approach (SD-HGCN): on top of a single-skeleton stream (S-HGCN), a dual-skeleton stream (D-HGCN) is added to recognize actions using the interactive information between skeletons. The model mainly consists of a multi-branch input adaptive fusion module (MBAFM) and a skeleton perception module (SPM). MBAFM makes the input features more distinguishable through two GCNs and an attention module. SPM identifies relationships between skeletons and builds topological knowledge about human skeletons by adaptively learning the hypergraph distribution matrix from the semantic information in the skeleton sequence. Experimental results show that D-HGCN takes less time and achieves higher accuracy, meeting real-time requirements. Our experiments demonstrate that the approach outperforms state-of-the-art methods on the NTU and Kinetics datasets.
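To make the hypergraph idea concrete, the following is a minimal sketch of one generic hypergraph propagation step, in which joints that share a hyperedge exchange features. It assumes a fixed, hand-written incidence matrix and the commonly used normalization Dv^-1/2 H W De^-1 H^T Dv^-1/2 X; the abstract does not give the authors' formulation, and SPM is described as learning its matrix adaptively, so this should not be read as their method. The function name, the toy hyperedges, and the feature values are all hypothetical.

```python
import math

def hypergraph_propagate(H, X, edge_weights=None):
    """One parameter-free hypergraph propagation step (illustrative only).

    H: incidence matrix as nested lists; H[v][e] == 1 if joint v belongs
       to hyperedge e. Every joint must lie in at least one hyperedge and
       every hyperedge must contain at least one joint.
    X: per-joint features; X[v] is a list of floats.
    Returns Dv^-1/2 H W De^-1 H^T Dv^-1/2 X as nested lists.
    """
    n_v, n_e, n_f = len(H), len(H[0]), len(X[0])
    w = edge_weights or [1.0] * n_e
    # Vertex degree: weighted number of hyperedges that contain each joint.
    d_v = [sum(H[v][e] * w[e] for e in range(n_e)) for v in range(n_v)]
    # Edge degree: number of joints in each hyperedge.
    d_e = [sum(H[v][e] for v in range(n_v)) for e in range(n_e)]
    # Scale joint features by Dv^-1/2.
    Xs = [[X[v][f] / math.sqrt(d_v[v]) for f in range(n_f)]
          for v in range(n_v)]
    # Gather: weighted mean of the scaled features inside each hyperedge.
    E = [[w[e] * sum(H[v][e] * Xs[v][f] for v in range(n_v)) / d_e[e]
          for f in range(n_f)]
         for e in range(n_e)]
    # Scatter: send hyperedge features back to member joints, scale again.
    return [[sum(H[v][e] * E[e][f] for e in range(n_e)) / math.sqrt(d_v[v])
             for f in range(n_f)]
            for v in range(n_v)]

# Hypothetical toy example: 4 joints, 2 hyperedges.
# Hyperedge 0 groups joints {0, 1, 2}; hyperedge 1 groups joints {1, 3}.
H_demo = [
    [1, 0],
    [1, 1],
    [1, 0],
    [0, 1],
]
# Two made-up feature channels per joint (e.g. x and y coordinates).
X_demo = [
    [0.0, 1.0],
    [0.5, 0.5],
    [1.0, 0.0],
    [0.2, 0.8],
]
out_demo = hypergraph_propagate(H_demo, X_demo)
```

Unlike an ordinary graph edge, a hyperedge can connect more than two joints at once, which is why a group such as a whole limb, or joints from two different skeletons, can be treated as one unit. A trainable layer would additionally multiply the result by a learned weight matrix and apply a nonlinearity.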

Supported by the Artificial Intelligence Program of Shanghai under Grant 2019-RGZN-01077.

Copyright information

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

He, C., Xiao, C., Liu, S., Qin, X., Zhao, Y., Zhang, X. (2021). Single-Skeleton and Dual-Skeleton Hypergraph Convolution Neural Networks for Skeleton-Based Action Recognition. In: Mantoro, T., Lee, M., Ayu, M.A., Wong, K.W., Hidayanto, A.N. (eds) Neural Information Processing. ICONIP 2021. Lecture Notes in Computer Science, vol 13109. Springer, Cham. https://doi.org/10.1007/978-3-030-92270-2_2

DOI: https://doi.org/10.1007/978-3-030-92270-2_2

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-92269-6

Online ISBN: 978-3-030-92270-2

eBook Packages: Computer Science (R0)