Abstract

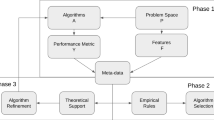

Knowledge gained by meta-learning processes is valuable when it can be successfully used in solving algorithm selection problems. There is still a strong need for automated tools for learning from data that perform model construction and selection with little or no effort from a human operator. This article provides evidence for the efficacy of a general meta-learning algorithm that validates candidate learning methods and drives the search for the most attractive models on the basis of an analysis of learning result profiles. The profiles help in finding similar processes performed for other datasets and in pointing to promising learning machine configurations. Further research on profile management is expected to yield very attractive automated tools for learning from data. Here, several components of the framework have been examined and an extended test has been performed to confirm the capabilities of the method. The discussion also touches on the subject of testing and comparing the results of meta-learning algorithms.
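The idea of using result profiles to point to promising configurations can be illustrated with a minimal sketch. It is not the algorithm evaluated in the chapter; it only assumes that a profile is a mapping from configuration names to validation scores, that profiles of past datasets are stored in a simple dictionary, and that similarity is derived from a root-mean-square distance over shared configurations. All names (`profile_similarity`, `rank_candidates`, `knowledge_base`) and all scores are hypothetical.

```python
from math import sqrt


def profile_similarity(current, past):
    """Similarity in (0, 1] from the RMS distance over shared configurations.

    Assumption: profiles are dicts mapping configuration name -> score.
    Returns 0.0 when the two profiles share no configuration.
    """
    shared = set(current) & set(past)
    if not shared:
        return 0.0
    dist = sqrt(sum((current[c] - past[c]) ** 2 for c in shared) / len(shared))
    return 1.0 / (1.0 + dist)


def rank_candidates(current, knowledge_base):
    """Rank configurations not yet validated on the current dataset.

    Each candidate gets the similarity-weighted mean of its scores
    on past datasets; higher estimates come first.
    """
    totals, weights = {}, {}
    for past in knowledge_base.values():
        w = profile_similarity(current, past)
        if w == 0.0:
            continue  # nothing in common, so this past profile is ignored
        for config, score in past.items():
            if config in current:
                continue  # already validated on the current dataset
            totals[config] = totals.get(config, 0.0) + w * score
            weights[config] = weights.get(config, 0.0) + w
    ranked = [(c, totals[c] / weights[c]) for c in totals]
    return sorted(ranked, key=lambda item: item[1], reverse=True)


# Hypothetical stored profiles from two earlier datasets.
knowledge_base = {
    "dataset_a": {"knn": 0.90, "tree": 0.80, "svm": 0.95, "nb": 0.70},
    "dataset_b": {"knn": 0.60, "tree": 0.85, "svm": 0.65, "nb": 0.88},
}

# Partial profile of the current dataset: only two machines validated so far.
current_profile = {"knn": 0.88, "tree": 0.79}

ranking = rank_candidates(current_profile, knowledge_base)
for config, estimate in ranking:
    print(f"{config}: {estimate:.3f}")
```

In this toy setting the current profile resembles `dataset_a` more closely, so the configuration that did well there is validated next. A real system would update the profile after each validation and re-rank the remaining candidates.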




Copyright information

© 2022 Springer Nature Switzerland AG

About this paper

Cite this paper

Grąbczewski, K. (2022). Using Result Profiles to Drive Meta-learning. In: Themistocleous, M., Papadaki, M. (eds) Information Systems. EMCIS 2021. Lecture Notes in Business Information Processing, vol 437. Springer, Cham. https://doi.org/10.1007/978-3-030-95947-0_6


DOI: https://doi.org/10.1007/978-3-030-95947-0_6


Publisher Name: Springer, Cham

Print ISBN: 978-3-030-95946-3

Online ISBN: 978-3-030-95947-0

eBook Packages: Computer Science, Computer Science (R0)