Abstract

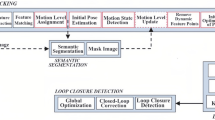

Exploring an unfamiliar indoor environment while avoiding obstacles is challenging for visually impaired people. Several existing approaches avoid static obstacles by mapping the indoor scene. To address the problem of distinguishing dynamic obstacles, we propose an assistive system that uses an RGB-D sensor to detect dynamic information in a scene. Once the system captures an image, panoptic segmentation is performed to obtain prior information about dynamic objects. The pose of the user is then estimated from sparse feature points extracted from the images together with the depth information. After this ego-motion estimation, dynamic objects can be identified and tracked. Finally, the poses and speeds of the tracked dynamic objects are estimated and conveyed to the user through acoustic feedback.
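The role of ego-motion estimation in separating moving objects from the user's own movement can be illustrated with a minimal sketch. It is not the method of the paper, only one plausible reading under stated assumptions: each tracked object is reduced to a 3D centroid in the camera frame (for example, back-projected from a segmentation mask with depth), the camera pose is available as a 4x4 camera-to-world matrix, and speed is taken as a simple finite difference. The function names `to_world`, `object_speed`, and `is_dynamic`, as well as the 0.1 m/s threshold, are hypothetical.

```python
import math


def to_world(T_wc, p_cam):
    """Map a 3D point from the camera frame to the world frame.

    T_wc is an assumed 4x4 homogeneous camera-to-world pose given as
    row-major nested lists; p_cam is an (x, y, z) tuple in metres.
    """
    x, y, z = p_cam
    return tuple(
        T_wc[r][0] * x + T_wc[r][1] * y + T_wc[r][2] * z + T_wc[r][3]
        for r in range(3)
    )


def object_speed(T_wc_prev, p_prev, T_wc_curr, p_curr, dt):
    """Finite-difference speed (m/s) of an object centroid in the world frame.

    Expressing both observations in the world frame removes the apparent
    motion caused by the user's own movement.
    """
    if dt <= 0:
        raise ValueError("dt must be positive")
    a = to_world(T_wc_prev, p_prev)
    b = to_world(T_wc_curr, p_curr)
    return math.dist(a, b) / dt


def is_dynamic(speed, threshold=0.1):
    """Flag an object as dynamic if its speed exceeds a hypothetical threshold."""
    return speed > threshold


# Illustrative data: the user walks 0.5 m forward (+z) between two frames.
IDENTITY = [[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, 0], [0, 0, 0, 1]]
FORWARD = [[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, 0.5], [0, 0, 0, 1]]

# A static chair only appears closer because the camera moved.
chair_speed = object_speed(IDENTITY, (0.0, 0.0, 2.0), FORWARD, (0.0, 0.0, 1.5), dt=0.5)
# A person approaches faster than the camera motion alone would explain.
person_speed = object_speed(IDENTITY, (1.0, 0.0, 2.0), FORWARD, (1.0, 0.0, 1.0), dt=0.5)

print(chair_speed, is_dynamic(chair_speed))
print(person_speed, is_dynamic(person_speed))
```

In this toy setup both objects appear to approach in the camera frame, but only the person retains a non-zero speed once the user's pose is compensated. A real system would also need to handle noisy depth, track association across frames, and filtering of the speed estimate.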

Copyright information

© 2022 Springer Nature Switzerland AG

About this paper

Cite this paper

Ou, W. et al. (2022). Indoor Navigation Assistance for Visually Impaired People via Dynamic SLAM and Panoptic Segmentation with an RGB-D Sensor. In: Miesenberger, K., Kouroupetroglou, G., Mavrou, K., Manduchi, R., Covarrubias Rodriguez, M., Penáz, P. (eds) Computers Helping People with Special Needs. ICCHP-AAATE 2022. Lecture Notes in Computer Science, vol 13341. Springer, Cham. https://doi.org/10.1007/978-3-031-08648-9_19

DOI: https://doi.org/10.1007/978-3-031-08648-9_19

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-08647-2

Online ISBN: 978-3-031-08648-9

eBook Packages: Computer Science, Computer Science (R0)