Abstract

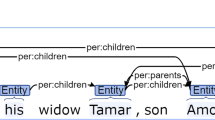

Open relation extraction (ORE) aims to identify the semantic relations between arguments, a task essential to the automatic construction of knowledge graphs. Previous methods either depend on external NLP tools (e.g., PoS-taggers) and language-specific relation formations, or suffer from inherent problems in sequence representations, which leads to unsatisfactory extraction across diverse languages and domains. To address these problems, we propose a Query-based Open Relation Extractor (QORE). QORE uses a Transformer-based language model to derive a representation of the interaction between arguments and context, and can process multilingual texts effectively. Extensive experiments on seven datasets covering four languages show that QORE models significantly outperform conventional rule-based systems and the state-of-the-art method LOREM [6]. Regarding the practical challenges [1] of Corpus Heterogeneity and Automation, our evaluations show that QORE models have excellent zero-shot domain transferability and few-shot learning ability.
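To make the query-based span prediction framing concrete, the following is a minimal sketch and not the authors' implementation. It assumes that the argument pair is turned into a textual query, that a language model (not shown) scores every context token as a possible start or end of the relation span, and that the highest-scoring valid span is returned. The query template, the function names, and the hand-written scores are all hypothetical; the abstract does not specify them.

```python
from typing import List, Optional, Tuple


def build_query(arg1: str, arg2: str) -> str:
    # Hypothetical query template; the real template is not given in the abstract.
    return f"relation between {arg1} and {arg2} ?"


def best_span(
    start_scores: List[float], end_scores: List[float], max_len: int = 8
) -> Optional[Tuple[int, int]]:
    """Return the (start, end) token indices maximising start + end score.

    Only spans with start <= end and at most max_len tokens are considered.
    Returns None when there are no tokens to score.
    """
    best: Optional[Tuple[int, int]] = None
    best_score = float("-inf")
    for i, s in enumerate(start_scores):
        for j in range(i, min(i + max_len, len(end_scores))):
            score = s + end_scores[j]
            if score > best_score:
                best_score = score
                best = (i, j)
    return best


def extract_relation(
    tokens: List[str],
    start_scores: List[float],
    end_scores: List[float],
    max_len: int = 8,
) -> str:
    # Map the best-scoring span back to the context tokens.
    span = best_span(start_scores, end_scores, max_len)
    if span is None:
        return ""
    i, j = span
    return " ".join(tokens[i : j + 1])


# Toy example: the scores below are made up and stand in for model outputs.
tokens = ["Marie", "Curie", "was", "born", "in", "Warsaw"]
start_scores = [0.1, 0.0, 0.3, 2.0, 0.5, 0.1]
end_scores = [0.0, 0.1, 0.2, 0.4, 1.8, 0.3]

query = build_query("Marie Curie", "Warsaw")
relation = extract_relation(tokens, start_scores, end_scores)
print(query)
print(relation)
```

In this sketch the relation is read directly from the context as a token span, so no PoS-tagger or language-specific pattern is involved; whether QORE decodes spans in exactly this way is an assumption.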

References

Banko, M., Cafarella, M.J., Soderland, S., Broadhead, M., Etzioni, O.: Open information extraction from the web. In: Veloso, M.M. (ed.) Proceedings of the 20th International Joint Conference on Artificial Intelligence (IJCAI 2007), Hyderabad, 6–12 January 2007, pp. 2670–2676 (2007). http://ijcai.org/Proceedings/07/Papers/429.pdf

Corro, L.D., Gemulla, R.: ClausIE: clause-based open information extraction. In: Schwabe, D., Almeida, V.A.F., Glaser, H., Baeza-Yates, R., Moon, S.B. (eds.) 22nd International World Wide Web Conference (WWW 2013), Rio de Janeiro, 13–17 May 2013, pp. 355–366. International World Wide Web Conferences Steering Committee/ACM (2013). https://doi.org/10.1145/2488388.2488420

Devlin, J., Chang, M., Lee, K., Toutanova, K.: BERT: pre-training of deep bidirectional transformers for language understanding. In: Burstein, J., Doran, C., Solorio, T. (eds.) Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (NAACL-HLT 2019), Minneapolis, 2–7 June 2019, vol. 1 (Long and Short Papers), pp. 4171–4186. Association for Computational Linguistics (2019). https://doi.org/10.18653/v1/n19-1423

Faruqui, M., Kumar, S.: Multilingual open relation extraction using cross-lingual projection. In: Mihalcea, R., Chai, J.Y., Sarkar, A. (eds.) The 2015 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (NAACL HLT 2015), Denver, 31 May–5 June 2015, pp. 1351–1356. The Association for Computational Linguistics (2015). https://doi.org/10.3115/v1/n15-1151

FitzGerald, N., Michael, J., He, L., Zettlemoyer, L.: Large-scale QA-SRL parsing. In: Gurevych, I., Miyao, Y. (eds.) Proceedings of the 56th Annual Meeting of the Association for Computational Linguistics (ACL 2018), Melbourne, 15–20 July 2018, vol. 1: Long Papers, pp. 2051–2060. Association for Computational Linguistics (2018). https://doi.org/10.18653/v1/P18-1191

Harting, T., Mesbah, S., Lofi, C.: LOREM: language-consistent open relation extraction from unstructured text. In: Huang, Y., King, I., Liu, T., van Steen, M. (eds.) The Web Conference 2020 (WWW 2020), Taipei, 20–24 April 2020, pp. 1830–1838. ACM/IW3C2 (2020). https://doi.org/10.1145/3366423.3380252

Jia, S., Xiang, Y., Chen, X.: Supervised neural models revitalize the open relation extraction. arXiv preprint arXiv:1809.09408 (2018)

Joshi, M., Chen, D., Liu, Y., Weld, D.S., Zettlemoyer, L., Levy, O.: SpanBERT: improving pre-training by representing and predicting spans. Trans. Assoc. Comput. Linguist. 8, 64–77 (2020). https://transacl.org/ojs/index.php/tacl/article/view/1853

Lyu, Z., Shi, K., Li, X., Hou, L., Li, J., Song, B.: Multi-grained dependency graph neural network for Chinese open information extraction. In: Karlapalem, K., et al. (eds.) PAKDD 2021. LNCS (LNAI), vol. 12714, pp. 155–167. Springer, Cham (2021). https://doi.org/10.1007/978-3-030-75768-7_13

Mausam: Open information extraction systems and downstream applications. In: Kambhampati, S. (ed.) Proceedings of the Twenty-Fifth International Joint Conference on Artificial Intelligence, IJCAI 2016, New York, 9–15 July 2016, pp. 4074–4077. IJCAI/AAAI Press (2016). http://www.ijcai.org/Abstract/16/604

Mausam, Schmitz, M., Soderland, S., Bart, R., Etzioni, O.: Open language learning for information extraction. In: Tsujii, J., Henderson, J., Pasca, M. (eds.) Proceedings of the 2012 Joint Conference on Empirical Methods in Natural Language Processing and Computational Natural Language Learning (EMNLP-CoNLL 2012), 12–14 July 2012, Jeju Island, pp. 523–534. ACL (2012). https://aclanthology.org/D12-1048/

Ro, Y., Lee, Y., Kang, P.: Multi²OIE: multilingual open information extraction based on multi-head attention with BERT. In: Cohn, T., He, Y., Liu, Y. (eds.) Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing: Findings, EMNLP 2020, Online Event, 16–20 November 2020. Findings of ACL, vol. EMNLP 2020, pp. 1107–1117. Association for Computational Linguistics (2020). https://doi.org/10.18653/v1/2020.findings-emnlp.99

Solawetz, J., Larson, S.: LSOIE: a large-scale dataset for supervised open information extraction. In: Merlo, P., Tiedemann, J., Tsarfaty, R. (eds.) Proceedings of the 16th Conference of the European Chapter of the Association for Computational Linguistics: Main Volume (EACL 2021), Online, 19–23 April 2021, pp. 2595–2600. Association for Computational Linguistics (2021). https://aclanthology.org/2021.eacl-main.222/

Sun, M., Li, X., Wang, X., Fan, M., Feng, Y., Li, P.: Logician: a unified end-to-end neural approach for open-domain information extraction. In: Chang, Y., Zhai, C., Liu, Y., Maarek, Y. (eds.) Proceedings of the Eleventh ACM International Conference on Web Search and Data Mining (WSDM 2018), Marina Del Rey, 5–9 February 2018, pp. 556–564. ACM (2018). https://doi.org/10.1145/3159652.3159712

Tseng, Y., et al.: Chinese open relation extraction for knowledge acquisition. In: Bouma, G., Parmentier, Y. (eds.) Proceedings of the 14th Conference of the European Chapter of the Association for Computational Linguistics (EACL 2014), 26–30 April 2014, Gothenburg, pp. 12–16. The Association for Computational Linguistics (2014). https://doi.org/10.3115/v1/e14-4003

Vaswani, A., et al.: Attention is all you need. In: Guyon, I., et al. (eds.) Advances in Neural Information Processing Systems 30: Annual Conference on Neural Information Processing Systems 2017, 4–9 December 2017, Long Beach, pp. 5998–6008 (2017). https://proceedings.neurips.cc/paper/2017/hash/3f5ee243547dee91fbd053c1c4a845aa-Abstract.html

Acknowledgements

This work is supported by the NSFC-General Technology Basic Research Joint Funds under Grant (U1936220), the National Natural Science Foundation of China under Grant (61972047) and the National Key Research and Development Program of China (2018YFC0831500).

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Yang, H., Li, DW., Li, Z., Yang, D., Qi, J., Wu, B. (2022). Open Relation Extraction via Query-Based Span Prediction. In: Memmi, G., Yang, B., Kong, L., Zhang, T., Qiu, M. (eds) Knowledge Science, Engineering and Management. KSEM 2022. Lecture Notes in Computer Science, vol 13369. Springer, Cham. https://doi.org/10.1007/978-3-031-10986-7_6

DOI: https://doi.org/10.1007/978-3-031-10986-7_6

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-10985-0

Online ISBN: 978-3-031-10986-7

eBook Packages: Computer Science, Computer Science (R0)