Abstract

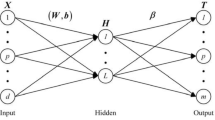

Imbalanced classification is an important branch of supervised learning and plays an important role in many application fields. Compared with sophisticated improvements to classification algorithms, it is easier to obtain good performance by synthesizing minority class samples so that classification algorithms can be trained on balanced data sets. In consideration of the strong representation ability of the multi-layer extreme learning machine (ML-ELM), this paper proposes a new method to create synthetic minority class samples based on an auto-encoder ML-ELM (abbreviated as AE-MLELM-SynMin). First, an AE-MLELM is trained to obtain the deep feature encodings of the original minority class samples. Second, crossover and mutation operations are performed on the original deep feature encodings to generate a number of new deep feature encodings. Third, the synthetic minority class samples are created by transforming the new deep feature encodings with the AE-MLELM. Finally, experiments are conducted to demonstrate the effectiveness of the AE-MLELM-SynMin method. The experimental results show that our method obtains better imbalanced classification performance than the SMOTE, Borderline-SMOTE, Random-SMOTE, and SMOTE-IPF methods.
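The three steps in the abstract can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: it stacks ELM auto-encoder layers in the usual ML-ELM style (random hidden layer, output weights `beta` solved by regularized least squares, next layer computed as `g(Z @ beta.T)`). The abstract does not specify the crossover and mutation operators or how AE-MLELM maps encodings back to the input space. Uniform crossover, Gaussian mutation, and a ridge-regression decoder are therefore assumed here. All function names and parameters (`hidden_sizes`, `C`, `p_mut`, `sigma`) are hypothetical, and features are assumed to be roughly standardized.

```python
import numpy as np


def _sigmoid(x):
    # Clip to avoid overflow warnings in exp for saturated units.
    return 1.0 / (1.0 + np.exp(-np.clip(x, -500, 500)))


def _ridge(H, T, C):
    """Regularized least squares: (H^T H + I/C)^{-1} H^T T."""
    return np.linalg.solve(H.T @ H + np.eye(H.shape[1]) / C, H.T @ T)


def fit_ml_elm_ae(X, hidden_sizes, C, rng):
    """Stack ELM auto-encoder layers; return the output weights beta of each layer."""
    betas, Z = [], X
    for n_hidden in hidden_sizes:
        A = rng.standard_normal((Z.shape[1], n_hidden))  # random input weights (not orthogonalized here)
        b = rng.standard_normal(n_hidden)                 # random hidden biases
        H = _sigmoid(Z @ A + b)                           # random hidden-layer features
        beta = _ridge(H, Z, C)                            # H @ beta reconstructs Z
        betas.append(beta)
        Z = _sigmoid(Z @ beta.T)                          # ML-ELM: next layer uses beta^T
    return betas


def encode(X, betas):
    """Deep feature encodings of X through the stacked layers."""
    Z = X
    for beta in betas:
        Z = _sigmoid(Z @ beta.T)
    return Z


def fit_decoder(Z, X, C):
    """Hypothetical decoder: ridge map from encodings (plus bias) back to input space."""
    Z1 = np.hstack([Z, np.ones((Z.shape[0], 1))])
    return _ridge(Z1, X, C)


def decode(Z, W):
    return np.hstack([Z, np.ones((Z.shape[0], 1))]) @ W


def crossover_mutate(Z, n_new, p_mut, sigma, rng):
    """Uniform crossover of two random parents, then Gaussian mutation of some genes."""
    i = rng.integers(0, len(Z), size=n_new)
    j = rng.integers(0, len(Z), size=n_new)
    mask = rng.random((n_new, Z.shape[1])) < 0.5
    children = np.where(mask, Z[i], Z[j])
    mutate = rng.random(children.shape) < p_mut
    children = children + mutate * rng.normal(0.0, sigma, children.shape)
    return np.clip(children, 0.0, 1.0)  # keep genes in the sigmoid output range


def synthesize_minority(X_min, n_new, hidden_sizes=(16, 8), C=1e3,
                        p_mut=0.1, sigma=0.05, seed=0):
    rng = np.random.default_rng(seed)
    betas = fit_ml_elm_ae(X_min, hidden_sizes, C, rng)  # step 1: train the auto-encoder
    Z = encode(X_min, betas)                            # deep encodings of the minority class
    W = fit_decoder(Z, X_min, C)
    Z_new = crossover_mutate(Z, n_new, p_mut, sigma, rng)  # step 2: new encodings
    return decode(Z_new, W)                                # step 3: back to input space


if __name__ == "__main__":
    X_min = np.random.default_rng(1).normal(size=(30, 5))
    X_syn = synthesize_minority(X_min, n_new=70)
    print(X_syn.shape)  # (70, 5)
```

The synthetic rows would then be appended to the minority class before training a classifier. Crossover and mutation operate in the encoding space rather than the raw feature space, which is the point of contrast with SMOTE-style interpolation between neighbors.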



Acknowledgement

The authors would like to thank the chairs and anonymous reviewers, whose meticulous reading and valuable suggestions helped them to improve this paper significantly. This paper was supported by the National Natural Science Foundation of China (61972261), the Basic Research Foundation of Shenzhen (JCYJ 20210324093609026, JCYJ 20200813091134001), and the Scientific Research Foundation of Shenzhen University for Newly-introduced Teachers (860/000002110628).


Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

He, Y., Huang, Q., Xu, S., Huang, J.Z. (2022). A Novel Method to Create Synthetic Samples with Autoencoder Multi-layer Extreme Learning Machine. In: Rage, U.K., Goyal, V., Reddy, P.K. (eds) Database Systems for Advanced Applications. DASFAA 2022 International Workshops. DASFAA 2022. Lecture Notes in Computer Science, vol 13248. Springer, Cham. https://doi.org/10.1007/978-3-031-11217-1_2


DOI: https://doi.org/10.1007/978-3-031-11217-1_2


Publisher Name: Springer, Cham

Print ISBN: 978-3-031-11216-4

Online ISBN: 978-3-031-11217-1

eBook Packages: Computer Science, Computer Science (R0)