Abstract

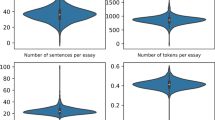

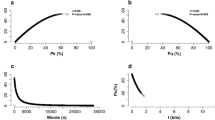

Experienced readers intuitively mark text passages containing central concepts as learning-relevant when reading actively. Although this intuitive marking of important information is sometimes imperfect, it fosters comprehension. Approximating this intuition by automatically detecting potentially learning-relevant content would therefore be beneficial, since such detection is a building block for various downstream tasks such as automatic self-assessment or intelligent author assistance. This work argues that learners often apply heuristics based on different sentence types to determine the learning-relevant content in texts. We show that such heuristics can be approximated using neural sentence classifiers and implement two such classifiers, one detecting causal sentences and one detecting definitional sentences. We evaluate the classifiers’ ability to detect learning-relevant information in an empirical study (N = 37). Furthermore, a system performance evaluation compares the proposed classifiers with unsupervised summarization systems. We find evidence for a small but reliable association between the chosen automatically detectable sentence types (definition/causal) and the learners’ perception of content relevance. Additionally, the classifiers outperform most other relevant content selection techniques in our experiments. Interestingly, other simple heuristics based on sentence position or length also exhibit strong performance.
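The sentence position and length heuristics mentioned in the abstract can be illustrated with a minimal sketch. This is not the authors' implementation. The function names, the naive regex-based sentence splitter, and the choice of whitespace token count as the length measure are all assumptions made for illustration.

```python
import re
from typing import List


def split_sentences(text: str) -> List[str]:
    """Naive splitter (assumption): break after '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def select_by_position(sentences: List[str], k: int) -> List[str]:
    """Position heuristic: keep the first k sentences of the text."""
    return sentences[:k]


def select_by_length(sentences: List[str], k: int) -> List[str]:
    """Length heuristic: keep the k sentences with the most whitespace tokens,
    returned in their original document order."""
    ranked = sorted(
        range(len(sentences)),
        key=lambda i: len(sentences[i].split()),
        reverse=True,
    )
    return [sentences[i] for i in sorted(ranked[:k])]


if __name__ == "__main__":
    # Hypothetical example text, not taken from the study materials.
    text = (
        "Osmosis is the movement of water across a membrane. "
        "It matters. "
        "Cells swell because water flows toward the higher solute concentration."
    )
    sents = split_sentences(text)
    print(select_by_position(sents, 1))
    print(select_by_length(sents, 2))
```

Both functions return a subset of sentences that a downstream component could treat as candidate learning-relevant content. A trained sentence classifier would replace the selection rule while keeping the same interface.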

Copyright information

© 2022 Springer Nature Switzerland AG

About this paper

Cite this paper

Steuer, T., Filighera, A., Zimmer, G., Tregel, T. (2022). What Is Relevant for Learning? Approximating Readers’ Intuition Using Neural Content Selection. In: Rodrigo, M.M., Matsuda, N., Cristea, A.I., Dimitrova, V. (eds) Artificial Intelligence in Education. AIED 2022. Lecture Notes in Computer Science, vol 13355. Springer, Cham. https://doi.org/10.1007/978-3-031-11644-5_41

DOI: https://doi.org/10.1007/978-3-031-11644-5_41

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-11643-8

Online ISBN: 978-3-031-11644-5

eBook Packages: Computer Science, Computer Science (R0)