Abstract

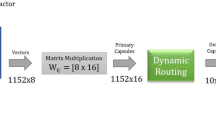

Capsule Network (CapsNet) is a promising classifier and a possible successor to classifiers built on Convolutional Neural Networks (CNNs). CapsNet is more accurate than CNNs at recognizing images with overlapping categories and images to which affine transformations have been applied. In this work, we propose DL-CapsNet, a deep variant of CapsNet consisting of several capsule layers. In addition, we design the Capsule Summarization layer, which reduces complexity by reducing the number of parameters. While highly accurate, DL-CapsNet employs a small number of parameters and delivers faster training and inference. DL-CapsNet can process complex datasets with a high number of categories.
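To make the parameter argument concrete, the following is a rough sketch (not the authors' implementation) of how the weight count of a fully connected capsule layer scales with the number of input capsules and output categories. The baseline sizes follow the original CapsNet configuration for MNIST (1152 primary capsules of dimension 8, class capsules of dimension 16). The function name `fc_capsule_weights` and the reduction factor of 8 are assumptions chosen only for illustration; the abstract does not state how much the Capsule Summarization layer reduces the capsule count.

```python
def fc_capsule_weights(num_in_caps, in_dim, num_out_caps, out_dim):
    """Weights in a fully connected capsule layer: one (out_dim x in_dim)
    transformation matrix per (input capsule, output capsule) pair."""
    return num_in_caps * num_out_caps * out_dim * in_dim


# Baseline sizes from the original CapsNet on MNIST.
NUM_PRIMARY_CAPS = 1152
PRIMARY_DIM = 8
CLASS_DIM = 16

# Hypothetical reduction factor, for illustration only (not from the paper).
ASSUMED_REDUCTION = 8

for num_classes in (10, 100):
    baseline = fc_capsule_weights(NUM_PRIMARY_CAPS, PRIMARY_DIM, num_classes, CLASS_DIM)
    reduced = fc_capsule_weights(
        NUM_PRIMARY_CAPS // ASSUMED_REDUCTION, PRIMARY_DIM, num_classes, CLASS_DIM
    )
    print(f"{num_classes} classes: baseline={baseline:,} reduced={reduced:,}")
```

The count grows linearly with both the number of input capsules and the number of categories, so shrinking the set of capsules that feed the class layer matters most on datasets with many classes.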



Acknowledgment

This research has been funded in part or completely by the Computing Hardware for Emerging Intelligent Sensory Applications (COHESA) project. COHESA is financed under the Natural Sciences and Engineering Research Council of Canada (NSERC) Strategic Networks grant number NETGP485577-15.


Copyright information

© 2022 Springer Nature Switzerland AG

About this paper

Cite this paper

Shiri, P., Baniasadi, A. (2022). DL-CapsNet: A Deep and Light Capsule Network. In: Desnos, K., Pertuz, S. (eds) Design and Architecture for Signal and Image Processing. DASIP 2022. Lecture Notes in Computer Science, vol 13425. Springer, Cham. https://doi.org/10.1007/978-3-031-12748-9_5


DOI: https://doi.org/10.1007/978-3-031-12748-9_5


Publisher Name: Springer, Cham

Print ISBN: 978-3-031-12747-2

Online ISBN: 978-3-031-12748-9

eBook Packages: Computer Science, Computer Science (R0)