Abstract

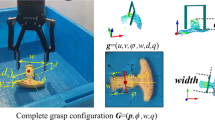

Grasping unknown objects is a challenging but critical problem in robotics research. However, existing studies focus only on the shape of objects and ignore differences between robot systems, which have a vital influence on whether a grasping task succeeds. In this work, we present a novel grasping approach with a dynamic annotation mechanism to address this problem. The approach comprises a grasping dataset and a grasping detection network. The dataset provides two kinds of annotation, named basic and decent annotation; the former can be transformed into the latter according to the mechanical parameters of antipodal grippers and the absolute positioning accuracy of robots, so that the characteristics of the robot system are taken into account. Meanwhile, a new evaluation metric is presented to provide reliable assessments of the predicted grasps. The proposed grasping detection network is a fully convolutional network that can generate robust grasps for robots. In addition, evaluations on datasets and experiments on a real robot show the effectiveness of our approach.
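The abstract does not specify how a basic annotation is transformed into a decent one, so the following is only a minimal sketch of the general idea under stated assumptions. It assumes each basic annotation records the object width at the grasp and a position tolerance, and that a robot system is described by a maximum gripper opening, a per-finger clearance, and an absolute positioning accuracy. The names `BasicGrasp`, `RobotSystem`, `to_decent`, and all fields are hypothetical and are not taken from the paper.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class BasicGrasp:
    """Hypothetical robot-independent grasp label (not the paper's format)."""
    x_mm: float
    y_mm: float
    angle_rad: float
    object_width_mm: float  # object width between the two contact points
    tolerance_mm: float     # assumed: largest center offset that still succeeds


@dataclass(frozen=True)
class RobotSystem:
    """Hypothetical description of one gripper and arm combination."""
    gripper_max_opening_mm: float
    finger_clearance_mm: float      # assumed: free space needed per finger
    positioning_accuracy_mm: float  # absolute positioning accuracy of the arm


def to_decent(basic: List[BasicGrasp], robot: RobotSystem) -> List[BasicGrasp]:
    """Keep only the basic grasps that this robot system could execute.

    Assumed rule: the gripper must open wide enough for the object plus
    clearance on both sides, and the arm's positioning error must stay
    within the grasp's tolerance.
    """
    decent = []
    for grasp in basic:
        required_opening = grasp.object_width_mm + 2 * robot.finger_clearance_mm
        fits_gripper = required_opening <= robot.gripper_max_opening_mm
        accurate_enough = robot.positioning_accuracy_mm <= grasp.tolerance_mm
        if fits_gripper and accurate_enough:
            decent.append(grasp)
    return decent


# Illustrative values only; they are not data from the paper.
example_grasps = [
    BasicGrasp(0.0, 0.0, 0.0, object_width_mm=40.0, tolerance_mm=3.0),
    BasicGrasp(10.0, 0.0, 0.0, object_width_mm=90.0, tolerance_mm=3.0),
    BasicGrasp(20.0, 0.0, 0.0, object_width_mm=40.0, tolerance_mm=0.5),
]
example_robot = RobotSystem(
    gripper_max_opening_mm=85.0,
    finger_clearance_mm=5.0,
    positioning_accuracy_mm=1.0,
)
example_decent = to_decent(example_grasps, example_robot)
```

With these illustrative values, only the first grasp survives: the second needs a wider opening than the gripper allows, and the third has a tolerance tighter than the arm's positioning accuracy. The same basic labels would yield a different decent set for a different `RobotSystem`, which is the sense in which the annotation is dynamic.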

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Yang, S., Wang, B., Tao, J., Duan, Q., Liu, H. (2022). A Novel Grasping Approach with Dynamic Annotation Mechanism. In: Liu, H., et al. Intelligent Robotics and Applications. ICIRA 2022. Lecture Notes in Computer Science, vol 13455. Springer, Cham. https://doi.org/10.1007/978-3-031-13844-7_5

DOI: https://doi.org/10.1007/978-3-031-13844-7_5

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-13843-0

Online ISBN: 978-3-031-13844-7

eBook Packages: Computer Science (R0)