Abstract

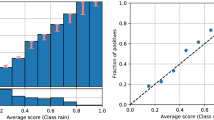

The concept of calibration is key in the development and validation of Machine Learning models, especially in sensitive contexts such as medicine. However, existing calibration metrics can be difficult to interpret and are affected by theoretical limitations. In this paper, we present a new metric, called GICI (Global Interpretable Calibration Index), which is local and defined only in terms of simple geometrical primitives. This makes it both simpler to interpret and more general than other commonly used metrics, as it can also be used in recalibration procedures. Compared to traditional metrics, the GICI also allows for a more comprehensive evaluation, as it provides three levels of information: a bin-level local estimate, a global estimate, and an estimate of the extent to which confidence scores are over- or under-confident with respect to the actual error rate. We also report the results of experiments aimed at testing the above claims and at giving insights into the practical utility of this metric, including for improving discriminative accuracy.
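To make the three levels of information concrete, the sketch below computes a per-bin gap, a global aggregate, and a signed over/under-prediction summary using plain equal-width reliability binning. This is not the GICI definition, which the abstract does not give; the function name `binned_calibration`, the choice of equal-width bins, and the weighting by bin size are all assumptions made for illustration only.

```python
from typing import Dict, List


def binned_calibration(probs: List[float], labels: List[int],
                       n_bins: int = 10) -> Dict[str, object]:
    """Equal-width reliability binning (illustrative; NOT the GICI formula)."""
    if len(probs) != len(labels) or not probs:
        raise ValueError("probs and labels must be non-empty and equally long")
    if any(p < 0.0 or p > 1.0 for p in probs):
        raise ValueError("probabilities must lie in [0, 1]")

    # Assign each prediction to a bin; p == 1.0 goes into the last bin.
    bins: List[List[tuple]] = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        bins[min(int(p * n_bins), n_bins - 1)].append((p, y))

    n = len(probs)
    per_bin = []
    global_gap = 0.0   # size-weighted mean absolute gap
    signed_gap = 0.0   # size-weighted mean signed gap
    for idx, items in enumerate(bins):
        if not items:
            continue  # empty bins carry no information
        mean_prob = sum(p for p, _ in items) / len(items)
        pos_freq = sum(y for _, y in items) / len(items)
        gap = mean_prob - pos_freq  # > 0: predictions exceed observed frequency
        weight = len(items) / n
        per_bin.append({"bin": idx, "count": len(items),
                        "mean_prob": mean_prob, "pos_freq": pos_freq,
                        "gap": gap})
        global_gap += weight * abs(gap)
        signed_gap += weight * gap

    return {"per_bin": per_bin, "global_gap": global_gap,
            "signed_gap": signed_gap}


if __name__ == "__main__":
    result = binned_calibration([0.1, 0.1, 0.9, 0.9], [0, 0, 1, 1])
    for row in result["per_bin"]:
        print(row)
    print("global:", result["global_gap"], "signed:", result["signed_gap"])
```

In this sketch, the `per_bin` rows play the role of a local estimate, `global_gap` of a global one, and the sign of `signed_gap` indicates whether predicted probabilities tend to exceed or fall short of observed frequencies.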

Copyright information

© 2022 IFIP International Federation for Information Processing

About this paper

Cite this paper

Cabitza, F., Campagner, A., Famiglini, L. (2022). Global Interpretable Calibration Index, a New Metric to Estimate Machine Learning Models’ Calibration. In: Holzinger, A., Kieseberg, P., Tjoa, A.M., Weippl, E. (eds) Machine Learning and Knowledge Extraction. CD-MAKE 2022. Lecture Notes in Computer Science, vol 13480. Springer, Cham. https://doi.org/10.1007/978-3-031-14463-9_6

DOI: https://doi.org/10.1007/978-3-031-14463-9_6

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-14462-2

Online ISBN: 978-3-031-14463-9

eBook Packages: Computer Science (R0)