Abstract

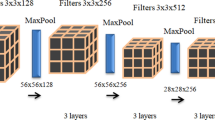

Convolutional neural networks have achieved success in various tasks, but they often lack the compactness and robustness required in resource-constrained and safety-critical environments. Previous work has mainly focused on enhancing either compactness or robustness, for example through network pruning or adversarial training. Robust neural network pruning aims to reduce computational cost while preserving both the accuracy and the robustness of a network. Existing robust pruning methods usually require expert experience and trial and error to design proper pruning criteria or auxiliary modules, which limits their applicability. Meanwhile, evolutionary algorithms (EAs) have been used to prune neural networks automatically, achieving impressive results but without considering robustness. In this paper, we propose CCRP, a novel robust pruning method based on cooperative coevolution. Specifically, robust pruning is formulated as a three-objective optimization problem that optimizes accuracy, robustness, and compactness simultaneously, and it is solved by a cooperative coevolution pruning framework that prunes the filters of each layer separately using EAs. Experiments on CIFAR-10 and SVHN show that CCRP achieves performance comparable to that of state-of-the-art methods.
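To make the formulation concrete, the following is a minimal toy sketch of layer-wise cooperative coevolution under three objectives. It is not the CCRP implementation. The function names (`dominates`, `toy_evaluate`, `mutate`, `update_archive`, `coevolve`), the per-filter weight tables `ACC_W` and `ROB_W`, and the shared Pareto archive are all assumptions introduced for illustration. In particular, `toy_evaluate` is a hypothetical stand-in for measuring clean accuracy, adversarial accuracy, and computational cost on a real pruned network.

```python
import random


def dominates(a, b):
    """True if objective vector a Pareto-dominates b (all objectives maximized)."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def toy_evaluate(masks, acc_w, rob_w):
    """Hypothetical proxy for (accuracy, robustness, compactness) of a pruned net.

    Each kept filter contributes its made-up weight to the accuracy and
    robustness proxies; compactness is the fraction of filters removed.
    """
    def kept_share(weights):
        kept = sum(w for m, ws in zip(masks, weights) for bit, w in zip(m, ws) if bit)
        return kept / sum(sum(ws) for ws in weights)

    n_kept = sum(sum(m) for m in masks)
    n_all = sum(len(m) for m in masks)
    return (kept_share(acc_w), kept_share(rob_w), 1.0 - n_kept / n_all)


def mutate(mask, rng, rate=0.2):
    """Bit-flip mutation on one layer's filter mask; always keeps one filter."""
    child = [bit ^ (rng.random() < rate) for bit in mask]
    if not any(child):
        child[rng.randrange(len(child))] = 1
    return child


def update_archive(archive, candidate):
    """Add candidate (masks, objectives) unless an archive member dominates it."""
    _, objs = candidate
    if any(dominates(o, objs) or o == objs for _, o in archive):
        return archive
    return [(m, o) for m, o in archive if not dominates(objs, o)] + [candidate]


def coevolve(layer_sizes, acc_w, rob_w, rounds=30, seed=0):
    """Evolve one layer's mask at a time while the other layers stay fixed."""
    rng = random.Random(seed)
    full = [[1] * n for n in layer_sizes]  # start from the unpruned network
    archive = [(full, toy_evaluate(full, acc_w, rob_w))]
    for _ in range(rounds):
        for layer in range(len(layer_sizes)):
            parent, _ = rng.choice(archive)
            child = [list(m) for m in parent]
            child[layer] = mutate(parent[layer], rng)  # only this layer changes
            archive = update_archive(archive, (child, toy_evaluate(child, acc_w, rob_w)))
    return archive


# Made-up integer filter weights for a two-layer toy network (assumption).
ACC_W = [[3, 1, 2, 5], [4, 1, 1, 2, 6]]
ROB_W = [[1, 4, 2, 2], [1, 5, 3, 1, 1]]

front = coevolve([4, 5], ACC_W, ROB_W, rounds=30, seed=1)
for masks, objs in front:
    print(masks, tuple(round(v, 3) for v in objs))
```

The archive returned by `coevolve` holds mutually non-dominated pruning masks, so no single mask is singled out as best. Choosing one point from this trade-off front is left to the user, for example by fixing a compactness budget and taking the most robust mask that meets it.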

This work was supported by the NSFC (62022039, 62106098) and the Jiangsu NSF (BK20201247).

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Wu, JL., Shang, H., Hong, W., Qian, C. (2022). Robust Neural Network Pruning by Cooperative Coevolution. In: Rudolph, G., Kononova, A.V., Aguirre, H., Kerschke, P., Ochoa, G., Tušar, T. (eds) Parallel Problem Solving from Nature – PPSN XVII. PPSN 2022. Lecture Notes in Computer Science, vol 13398. Springer, Cham. https://doi.org/10.1007/978-3-031-14714-2_32

DOI: https://doi.org/10.1007/978-3-031-14714-2_32

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-14713-5

Online ISBN: 978-3-031-14714-2

eBook Packages: Computer Science, Computer Science (R0)