Abstract

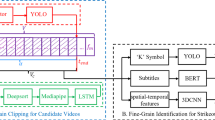

Figure skating is an ornamental competitive sport built on elaborate technical moves. Highlight videos, which collect these elegant moves, have long been a favorite among its large audience. However, research on highlight detection in figure skating has had limited success: previous work mainly focuses on detecting falls rather than the many technical moves. We therefore propose a method that segments the whole video and uses Tube Self-Attention to detect highlights. Specifically, we add a new module outside the existing network that edits and merges an athlete's highlight moments from a single competition into a highlight video. In addition, since few datasets cover highlight actions in figure skating, we build a new dataset, HS-FS (Highlight Shot in Figure Skating), which can be used to train the Tube Self-Attention model for highlight detection. Experiments show that the model reaches a training accuracy of 99.35% on the new dataset. Visualizations show that the proposed method can identify highlight moments in figure skating videos.
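The abstract does not specify how the added module turns per-segment predictions into a highlight video. As a minimal sketch of one plausible approach, the snippet below assumes the network emits one highlight score per fixed-length segment and merges consecutive above-threshold segments into time intervals that could then be cut and concatenated. The function name `select_highlight_intervals`, the `threshold` default, and the fixed `segment_seconds` are illustrative assumptions, not details taken from the paper.

```python
from typing import List, Tuple


def select_highlight_intervals(
    scores: List[float],
    segment_seconds: float,
    threshold: float = 0.5,  # hypothetical cut-off, not from the paper
) -> List[Tuple[float, float]]:
    """Merge runs of consecutive segments scoring at or above `threshold`
    into (start, end) intervals expressed in seconds."""
    intervals: List[Tuple[float, float]] = []
    run_start = None  # index of the first segment in the current run
    for i, score in enumerate(scores):
        if score >= threshold:
            if run_start is None:
                run_start = i
        elif run_start is not None:
            # The run ended at the previous segment; close the interval.
            intervals.append((run_start * segment_seconds, i * segment_seconds))
            run_start = None
    if run_start is not None:
        # The video ended while a run was still open.
        intervals.append(
            (run_start * segment_seconds, len(scores) * segment_seconds)
        )
    return intervals


# Hypothetical scores for five 2-second segments.
example = select_highlight_intervals([0.1, 0.9, 0.8, 0.2, 0.7], segment_seconds=2.0)
```

The resulting intervals could be passed to any video-cutting tool to assemble the final highlight reel; in practice the threshold would need tuning on held-out data.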




© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Fan, S., Wei, Y., Xia, J., Zheng, F. (2022). Hightlight Video Detection in Figure Skating. In: Yu, S., et al. Pattern Recognition and Computer Vision. PRCV 2022. Lecture Notes in Computer Science, vol 13536. Springer, Cham. https://doi.org/10.1007/978-3-031-18913-5_50


DOI: https://doi.org/10.1007/978-3-031-18913-5_50


Publisher Name: Springer, Cham

Print ISBN: 978-3-031-18912-8

Online ISBN: 978-3-031-18913-5

eBook Packages: Computer Science, Computer Science (R0)