Abstract

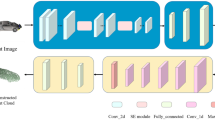

Reconstruction networks for well-ordered data such as 2D images and 1D continuous signals are easy to optimize with element-wise squared errors, whereas point clouds, whose points may appear in any order, cannot be constrained directly because their point permutations are not fixed. Existing works design algorithms that match two point clouds and evaluate shape errors from the matched results, but they are limited by their pre-defined matching processes. In this work, we propose a novel framework named PCLossNet, which learns to train a point cloud reconstruction network without any matching. Trained through an adversarial process together with the reconstruction network, PCLossNet can better explore the differences between point clouds and produce more precise reconstruction results. Experiments on multiple datasets demonstrate the superiority of our method: PCLossNet helps networks achieve much lower reconstruction errors and extract more representative features, while training about 4 times faster than with the commonly used EMD loss. Our code is available at https://github.com/Tianxinhuang/PCLossNet.
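To make the motivation concrete, the following minimal sketch illustrates why an element-wise squared error is unsuitable for unordered point clouds while a matching-based loss is not affected by point order. It is not the PCLossNet method. The Chamfer distance is used here only as a simple, well-known example of a matching-based loss (the abstract itself names EMD), and the function names `squared_error` and `chamfer_distance` are hypothetical helpers introduced for illustration.

```python
import random

def squared_error(a, b):
    """Mean element-wise squared error; assumes a[i] corresponds to b[i]."""
    return sum((x - y) ** 2 for p, q in zip(a, b) for x, y in zip(p, q)) / len(a)

def chamfer_distance(a, b):
    """Symmetric Chamfer distance: each point is matched to its nearest
    neighbour in the other cloud (a pre-defined matching rule)."""
    def sq_dist(p, q):
        return sum((x - y) ** 2 for x, y in zip(p, q))
    a_to_b = sum(min(sq_dist(p, q) for q in b) for p in a) / len(a)
    b_to_a = sum(min(sq_dist(q, p) for p in a) for q in b) / len(b)
    return a_to_b + b_to_a

# A toy cloud of 64 random 3D points (illustrative data, not from the paper).
rng = random.Random(0)
cloud = [(rng.random(), rng.random(), rng.random()) for _ in range(64)]

# The same shape with its points stored in reverse order.
reordered = cloud[::-1]

mse_same_shape = squared_error(cloud, reordered)
cd_same_shape = chamfer_distance(cloud, reordered)

print("element-wise squared error:", mse_same_shape)
print("Chamfer distance:", cd_same_shape)
```

Both clouds describe exactly the same shape, yet the element-wise error is non-zero because it compares points by index, whereas the nearest-neighbour matching reports zero. The matching rule is fixed in advance, which is the limitation the abstract attributes to existing matching-based losses.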

Acknowledgement

We thank all authors, reviewers and the chair for the excellent contributions. This work is supported by the National Science Foundation 62088101.

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Huang, T. et al. (2022). Learning to Train a Point Cloud Reconstruction Network Without Matching. In: Avidan, S., Brostow, G., Cissé, M., Farinella, G.M., Hassner, T. (eds) Computer Vision – ECCV 2022. ECCV 2022. Lecture Notes in Computer Science, vol 13661. Springer, Cham. https://doi.org/10.1007/978-3-031-19769-7_11

DOI: https://doi.org/10.1007/978-3-031-19769-7_11

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-19768-0

Online ISBN: 978-3-031-19769-7

eBook Packages: Computer Science, Computer Science (R0)