Abstract

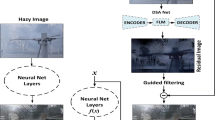

In the real world, the degradation of images taken under haze can be quite complex, and the spatial distribution of haze varies from image to image. Recent methods adopt deep neural networks to recover clean scenes directly from hazy images. However, because their network architectures are generic and they fail to estimate an accurate haze degradation model, these methods generalize poorly to real-world hazy images. To model real-world haze degradation, we propose a novel Separable Hybrid Attention (SHA) module that perceives haze density by capturing position-sensitive features in orthogonal directions. Moreover, we propose a density encoding matrix to model the uneven distribution of haze explicitly. The density encoding matrix generates positional encoding in a semi-supervised way. This strategy of perceiving and modeling haze density effectively captures unevenly distributed degradation at the feature level. By suitably combining SHA and the density encoding matrix, we design a novel dehazing network architecture that achieves a good complexity-performance trade-off. Comprehensive evaluation on both synthetic and real-world datasets demonstrates that the proposed method surpasses the state-of-the-art approaches by a large margin, both quantitatively and qualitatively. The code is available at https://github.com/Owen718/ECCV22-Perceiving-and-Modeling-Density-for-Image-Dehazing.

T. Ye, Y. Zhang and M. Jiang contributed equally.
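The idea of capturing position-sensitive features along orthogonal directions can be illustrated with a small NumPy sketch. This is not the authors' SHA module, which is only described at a high level in the abstract. It is a minimal, weight-free illustration under stated assumptions: the function name `directional_attention`, the mix of mean and max pooling, and the sigmoid gating are all hypothetical choices made for this example, and the resulting per-pixel map is only a rough stand-in for a haze density estimate.

```python
import numpy as np


def sigmoid(x):
    """Elementwise logistic function."""
    return 1.0 / (1.0 + np.exp(-x))


def directional_attention(feat):
    """Reweight a (C, H, W) feature map with row-wise and column-wise gates.

    Hypothetical sketch: no learned parameters, unlike a real attention module.
    Returns the reweighted features and an (H, W) map with values in (0, 1).
    """
    # Pool along the width axis: one descriptor per row, shape (C, H, 1).
    # Averaging mean and max pooling is an assumption of this sketch.
    row_desc = 0.5 * (feat.mean(axis=2, keepdims=True) + feat.max(axis=2, keepdims=True))
    # Pool along the height axis: one descriptor per column, shape (C, 1, W).
    col_desc = 0.5 * (feat.mean(axis=1, keepdims=True) + feat.max(axis=1, keepdims=True))

    # Broadcasting the two gates yields a position-dependent weight, shape (C, H, W).
    gate = sigmoid(row_desc) * sigmoid(col_desc)

    # Collapse channels into a single per-pixel map, shape (H, W).
    pixel_map = gate.mean(axis=0)
    return feat * gate, pixel_map


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    features = rng.normal(size=(4, 6, 8))  # toy feature map: 4 channels, 6 x 8 pixels
    reweighted, pixel_map = directional_attention(features)
    print(reweighted.shape, pixel_map.shape)
```

Because each gate depends on only one spatial axis, their product assigns a different weight to every position while requiring just two one-dimensional pooling passes, which is the kind of separability the module name suggests.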



Acknowledgement

This work is supported in part by the Natural Science Foundation of Fujian Province of China under Grant 2021J01867, the Education Department of Fujian Province under Grant JAT190301, the Foundation of Jimei University under Grant ZP2020034, the National Natural Science Foundation of China under Grant 61901117, and the Natural Science Foundation of Chongqing, China under Grant cstc2020jcyj-msxmX0324.


Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Ye, T. et al. (2022). Perceiving and Modeling Density for Image Dehazing. In: Avidan, S., Brostow, G., Cissé, M., Farinella, G.M., Hassner, T. (eds) Computer Vision – ECCV 2022. ECCV 2022. Lecture Notes in Computer Science, vol 13679. Springer, Cham. https://doi.org/10.1007/978-3-031-19800-7_8


DOI: https://doi.org/10.1007/978-3-031-19800-7_8


Publisher Name: Springer, Cham

Print ISBN: 978-3-031-19799-4

Online ISBN: 978-3-031-19800-7

eBook Packages: Computer Science, Computer Science (R0)