Abstract

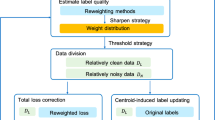

Label noise is prevalent in real-world visual learning applications, and correcting all label mistakes can be prohibitively costly. Training neural network classifiers on such noisy datasets may lead to significant performance degradation. Active label correction (ALC) attempts to minimize the re-labeling cost by identifying the examples for which providing correct labels will yield maximal performance improvements. Existing ALC approaches typically select the examples that the classifier is least confident about (e.g., those with the largest entropies). However, such confidence estimates can be unreliable because the classifier itself is initially trained on noisy data. Also, naïvely selecting a batch of low-confidence examples can result in redundant labeling of spatially adjacent examples. We present a new ALC algorithm that addresses these challenges. Our algorithm robustly estimates label confidence values by regulating the contributions of individual examples in the parameter update of the network. Further, it avoids redundant labeling by promoting diversity in batch selection: the confidence of each newly labeled example is propagated to the entire dataset. Experiments involving four benchmark datasets and two types of label noise demonstrate that our algorithm offers a significant improvement in re-labeling efficiency over state-of-the-art ALC approaches.
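To make the redundancy problem concrete, here is a minimal sketch, assuming predicted class probabilities and a feature vector per example are already available. It is not the paper's algorithm: the confidence propagation described above is replaced by a hypothetical Gaussian-kernel damping of neighbouring examples' entropy scores, and the names `naive_top_k`, `select_batch` and `sigma` are illustrative only.

```python
import math
from typing import List, Sequence


def entropy(probs: Sequence[float]) -> float:
    """Shannon entropy (natural log) of a predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def naive_top_k(probs: List[Sequence[float]], k: int) -> List[int]:
    """Pick the k most uncertain examples independently (no diversity)."""
    scores = [entropy(p) for p in probs]
    return sorted(range(len(scores)), key=lambda i: -scores[i])[:k]


def select_batch(features: List[List[float]],
                 probs: List[Sequence[float]],
                 batch_size: int,
                 sigma: float = 1.0) -> List[int]:
    """Greedy batch selection with a simple stand-in for entropy propagation.

    Assumption (hypothetical): once an example is picked for re-labeling, its
    uncertainty is treated as resolved, and that reduction is spread to other
    examples with a Gaussian kernel, so close neighbours are less likely to be
    picked next.
    """
    scores = [entropy(p) for p in probs]
    selected: List[int] = []
    for _ in range(min(batch_size, len(scores))):
        candidates = [i for i in range(len(scores)) if i not in selected]
        best = max(candidates, key=lambda i: scores[i])
        selected.append(best)
        # Damp every score in proportion to its kernel similarity to `best`
        # (the weight is 1 for `best` itself, so its score drops to 0).
        for j in range(len(scores)):
            d2 = sum((a - b) ** 2 for a, b in zip(features[best], features[j]))
            scores[j] *= 1.0 - math.exp(-d2 / sigma ** 2)
    return selected


features = [[0.0], [0.1], [5.0]]
probs = [[0.5, 0.5], [0.5, 0.5], [0.6, 0.4]]
print(naive_top_k(probs, 2))              # [0, 1]
print(select_batch(features, probs, 2))   # [0, 2]
```

Examples 0 and 1 are near-duplicates, so naive top-k spends the whole batch on them, while the propagated scores push the second pick to the distant example 2.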

Notes

1. We use ‘pass’ to denote a single update step of W on a given mini-batch (Eq. 2), whereas an ‘epoch’ consists of multiple mini-batch passes that together cover all the training examples.

2. This algorithm cannot be applied directly to our setting because it requires multiple annotations for each newly labeled example. Our approach selects the points with the largest sums of loss and entropy values.

3. DACL’s accuracy often decreased in the second iteration because it switches from using the entire dataset D to using the labeled dataset \(S^t\) when estimating the class transition matrix T. At early ALC stages, \(S^t\) contains few data points, so the resulting estimate of T is unreliable and performance degrades.

References

Arachie, C., Huang, B.: A general framework for adversarial label learning. JMLR 22, 1–33 (2021)

Bernhardt, M., et al.: Active label cleaning: improving dataset quality under resource constraints. arXiv:2109.00574 (2021)

Budninskiy, M., Abdelaziz, A., Tong, Y., Desbrun, M.: Laplacian-optimized diffusion for semi-supervised learning. Comput. Aided Geom. Des. 79 (2020)

Shampine, L.F.: Tolerance proportionality in ODE codes. In: Bellen, A., Gear, C.W., Russo, E. (eds.) Numerical Methods for Ordinary Differential Equations. LNM, vol. 1386, pp. 118–136. Springer, Heidelberg (1989). https://doi.org/10.1007/BFb0089235

Fang, T., Lu, N., Niu, G., Sugiyama, M.: Rethinking importance weighting for deep learning under distribution shift. In: NeurIPS (2020)

Gao, R., Saar-Tsechansky, M.: Cost-accuracy aware adaptive labeling for active learning. In: AAAI, pp. 2569–2576 (2020)

Griffin, G., Holub, A., Perona, P.: Caltech-256 object category dataset. Technical report. California Institute of Technology (2007)

Han, B., et al.: Co-teaching: robust training of deep neural networks with extremely noisy labels. In: NIPS (2018)

He, K., Zhang, X., Ren, S., Sun, J.: Deep residual learning for image recognition. In: CVPR, pp. 770–778 (2016)

Hein, M.: Geometrical Aspects of Statistical Learning Theory. Ph.D. thesis. Technical University of Darmstadt, Germany (2005)

Hein, M., Audibert, J.-Y., von Luxburg, U.: From graphs to manifolds – weak and strong pointwise consistency of graph laplacians. In: Auer, P., Meir, R. (eds.) COLT 2005. LNCS (LNAI), vol. 3559, pp. 470–485. Springer, Heidelberg (2005). https://doi.org/10.1007/11503415_32

Hein, M., Maier, M.: Manifold denoising. In: NIPS, pp. 561–568 (2007)

Henter, D., Stahl, A., Ebbecke, M., Gillmann, M.: Classifier self-assessment: active learning and active noise correction for document classification. In: ICDAR, pp. 276–280 (2015)

Huang, J., Qu, L., Jia, R., Zhao, B.: O2U-Net: a simple noisy label detection approach for deep neural networks. In: ICCV, pp. 3326–3334 (2019)

Iserles, A.: A First Course in the Numerical Analysis of Differential Equations. Cambridge University Press, 2nd edn. (2012)

Kremer, J., Sha, F., Igel, C.: Robust active label correction. In: AISTATS, pp. 308–316 (2018)

Krizhevsky, A.: Learning Multiple Layers of Features from Tiny Images. Technical report. University of Toronto (2009)

Krüger, M., Novo, A.S., Nattermann, T., Mohamed, M., Bertram, T.: Reducing noise in label annotation: a lane change prediction case study. In: IFAC Symposium on Intelligent Autonomous Vehicles, pp. 221–226 (2019)

Li, S.-Y., Shi, Y., Huang, S.-J., Chen, S.: Improving deep label noise learning with dual active label correction. Mach. Learn. 111, 1–22 (2021). https://doi.org/10.1007/s10994-021-06081-9

Liu, T., Tao, D.: Classification with noisy labels by importance reweighting. IEEE TPAMI 38(3), 447–461 (2016)

Nallapati, R., Surdeanu, M., Manning, C.: CorrActive learning: learning from noisy data through human interaction. In: IJCAI Workshop on Intelligence and Interaction (2009)

Parde, N., Nielsen, R.D.: Finding patterns in noisy crowds: regression-based annotation aggregation for crowdsourced data. In: EMNLP, pp. 1907–1912 (2017)

Park, S., Jo, D.U., Choi, J.Y.: Over-fit: noisy-label detection based on the overfitted model property. arXiv:2106.07217 (2021)

Pierri, F., Piccardi, C., Ceri, S.: Topology comparison of twitter diffusion networks effectively reveals misleading information. Sci. Rep. 10(1372), 1–19 (2020)

Rebbapragada, U., Brodley, C.E., Sulla-Menashe, D., Friedl, M.A.: Active label correction. In: ICDM, pp. 1080–1085 (2012)

Rehbein, I., Ruppenhofer, J.: Detecting annotation noise in automatically labelled data. In: ACL, pp. 1160–1170 (2018)

Ren, M., Zeng, W., Yang, B., Urtasun, R.: Learning to reweight examples for robust deep learning. In: ICML (2018)

Rosenberg, S.: The Laplacian on a Riemannian Manifold. Cambridge University Press (2009)

Shen, Y., Sanghavi, S.: Learning with bad training data via iterative trimmed loss minimization. In: ICML (2019)

Sheng, V.S., Provost, F., Ipeirotis, P.G.: Get another label? improving data quality and data mining using multiple, noisy labelers. In: KDD, pp. 614–622 (2009)

Stokes, J.W., Kapoor, A., Ray, D.: Asking for a second opinion: re-querying of noisy multi-class labels. In: ICASSP, pp. 2329–2333 (2016)

Szlam, A.D., Maggioni, M., Coifman, R.R.: Regularization on graphs with function-adapted diffusion processes. JMLR 9, 1711–1739 (2008)

Tajbakhsh, N., Jeyaseelan, L., Li, Q., Chiang, J.N., Wu, Z., Ding, X.: Embracing imperfect datasets: a review of deep learning solutions for medical image segmentation. Med. Image Anal. 63 (2020)

Urner, R., David, S.B., Shamir, O.: Learning from weak teachers. In: AISTATS, pp. 1252–1260 (2012)

van Rooyen, B., Menon, A.K., Williamson, R.C.: Learning with symmetric label noise: the importance of being unhinged. In: NIPS (2015)

Wang, S., et al.: Annotation-efficient deep learning for automatic medical image segmentation. Nat. Commun. 12(1), 1–13 (2021)

Xiao, H., Rasul, K., Vollgraf, R.: Fashion-MNIST: a novel image dataset for benchmarking machine learning algorithms. arXiv:1708.07747 (2017)

Yan, S., Chaudhuri, K., Javidi, T.: Active learning from imperfect labelers. In: NIPS (2016)

Younesian, T., Epema, D., Chen, L.Y.: Active learning for noisy data streams using weak and strong labelers. arXiv:2010.14149v1 (2020)

Zhang, C., Chaudhuri, K.: Active learning from weak and strong labelers. In: NIPS (2015)

Zhang, C., Bengio, S., Hardt, M., Recht, B., Vinyals, O.: Understanding deep learning requires rethinking generalization. In: ICLR (2017)

Zhang, M., Hu, L., Shi, C., Wang, X.: Adversarial label-flipping attack and defense for graph neural networks. In: ICDM (2020)

Zhu, Z., Dong, Z., Liu, Y.: Detecting corrupted labels without training a model to predict. In: ICML (2022)

Ørting, S.N., et al.: A survey of crowdsourcing in medical image analysis. Hum. Comput. 7, 1–26 (2020)

Acknowledgments

We thank James Tompkin for fruitful discussions and the anonymous reviewers for their insightful comments. This work was supported by the National Research Foundation of Korea (NRF) grant (No. 2021R1A2C2012195, Data efficient machine learning for estimating skeletal pose across multiple domains, 1/2) and Institute of Information & Communications Technology Planning & Evaluation (IITP) grant (No. 2021–0–00537, Visual common sense through self-supervised learning for restoration of invisible parts in images, 1/2) both funded by the Korea government (MSIT).

Electronic supplementary material

One electronic supplementary material file accompanies the online version of this chapter.

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Kim, K.I. (2022). Active Label Correction Using Robust Parameter Update and Entropy Propagation. In: Avidan, S., Brostow, G., Cissé, M., Farinella, G.M., Hassner, T. (eds) Computer Vision – ECCV 2022. ECCV 2022. Lecture Notes in Computer Science, vol 13681. Springer, Cham. https://doi.org/10.1007/978-3-031-19803-8_1

DOI: https://doi.org/10.1007/978-3-031-19803-8_1

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-19802-1

Online ISBN: 978-3-031-19803-8

eBook Packages: Computer Science, Computer Science (R0)