Abstract

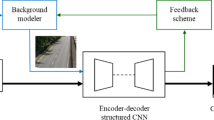

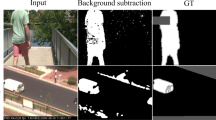

Video background subtraction has been widely applied in video conferencing. However, it still handles motion blur poorly, e.g., when a user shakes their head or waves their hands. To overcome the motion blur problem in background subtraction, we propose a novel optical flow-based encoder-decoder network (FUNet) that combines the traditional Horn-Schunck optical-flow estimation technique with autoencoder neural networks to perform robust real-time video background subtraction. We concatenate the optical-flow motion feature with the original image's appearance feature and pass the concatenated result into an encoder-decoder structure to perform video background subtraction. We also introduce a video and image background subtraction dataset, the Conference Video Segmentation Dataset. Code and pre-trained models are available on our GitHub repository: https://github.com/kuangzijian/Flow-Based-Video-Matting.
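The abstract does not spell out how the flow is computed or fused, so the following is only a minimal NumPy sketch of the two ideas it names: the classical Horn-Schunck iteration for dense optical flow, and stacking the resulting motion field with the RGB appearance input before it enters an encoder-decoder. The function names `horn_schunck` and `build_network_input`, the smoothness weight `alpha`, the iteration count, and the 5-channel channels-first layout are illustrative assumptions, not the authors' code; the linked repository is the authoritative implementation.

```python
import numpy as np

# Weighted-average kernel from Horn and Schunck (1981); the centre weight is 0.
HS_KERNEL = np.array([[1 / 12, 1 / 6, 1 / 12],
                      [1 / 6,  0.0,   1 / 6],
                      [1 / 12, 1 / 6, 1 / 12]])


def _filter3x3(img, kernel):
    """Apply a 3x3 kernel with edge padding (correlation; this kernel is symmetric)."""
    h, w = img.shape
    padded = np.pad(img, 1, mode="edge")
    out = np.zeros((h, w), dtype=np.float64)
    for dy in range(3):
        for dx in range(3):
            out += kernel[dy, dx] * padded[dy:dy + h, dx:dx + w]
    return out


def horn_schunck(frame1, frame2, alpha=1.0, num_iters=100):
    """Estimate dense optical flow (u, v) between two grayscale frames."""
    f1 = frame1.astype(np.float64)
    f2 = frame2.astype(np.float64)
    avg = 0.5 * (f1 + f2)
    ix = np.gradient(avg, axis=1)  # horizontal brightness gradient
    iy = np.gradient(avg, axis=0)  # vertical brightness gradient
    it = f2 - f1                   # temporal brightness derivative
    u = np.zeros_like(f1)
    v = np.zeros_like(f1)
    denom = alpha ** 2 + ix ** 2 + iy ** 2
    for _ in range(num_iters):
        u_avg = _filter3x3(u, HS_KERNEL)
        v_avg = _filter3x3(v, HS_KERNEL)
        # Shared correction balancing brightness constancy against smoothness.
        d = (ix * u_avg + iy * v_avg + it) / denom
        u = u_avg - ix * d
        v = v_avg - iy * d
    return u, v


def build_network_input(rgb, flow):
    """Hypothetical fusion: stack H x W x 3 appearance and H x W x 2 motion
    into a 5 x H x W channels-first array for an encoder-decoder."""
    if rgb.dtype == np.uint8:
        rgb = rgb.astype(np.float64) / 255.0
    stacked = np.concatenate([rgb.astype(np.float64), flow], axis=-1)  # H x W x 5
    return np.transpose(stacked, (2, 0, 1))                            # 5 x H x W


# Usage: a horizontal sinusoid shifted one pixel to the right between frames.
x = np.arange(64)
frame1 = np.tile(0.5 + 0.5 * np.sin(2 * np.pi * x / 32), (64, 1))
frame2 = np.tile(0.5 + 0.5 * np.sin(2 * np.pi * (x - 1) / 32), (64, 1))
u, v = horn_schunck(frame1, frame2)
rgb = np.stack([frame2] * 3, axis=-1)
net_input = build_network_input(rgb, np.stack([u, v], axis=-1))
print(net_input.shape)  # (5, 64, 64)
```

Because the test pattern moves only horizontally, the estimated `u` is positive and `v` stays at zero. Concatenating along the channel axis is the simplest early-fusion choice; the paper may fuse motion and appearance features differently inside the network.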

Acknowledgment

We would like to thank our mentor, Xuanyi Wu, for her guidance and feedback throughout the research and study. We would also like to thank our advisors, Dr. Anup Basu and Dr. Lihang Ying, for their motivation and support in bringing out the novelty of our research.

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Kuang, Z., Tie, X., Wu, X., Ying, L. (2022). FUNet: Flow Based Conference Video Background Subtraction. In: Berretti, S., Su, GM. (eds) Smart Multimedia. ICSM 2022. Lecture Notes in Computer Science, vol 13497. Springer, Cham. https://doi.org/10.1007/978-3-031-22061-6_2

DOI: https://doi.org/10.1007/978-3-031-22061-6_2

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-22060-9

Online ISBN: 978-3-031-22061-6

eBook Packages: Computer Science, Computer Science (R0)