Abstract

Social robots are intended to interact and communicate with people. This communication needs to be natural and fluid, similar to a human conversation. For instance, people do not always use exactly the same words to convey the same message in different situations. One way to imitate this is paraphrase generation, which allows the robot to use different sentences while keeping the same meaning. In this paper, we propose applying deep learning models to generate these paraphrases in a social robot that speaks Spanish. The application has been integrated into the Mini robot in both English and Spanish, and can be run locally or from an external server. We integrated and evaluated three models fine-tuned for paraphrase generation, built on the T5 and Pegasus Transformers: one based on Pegasus and two based on T5 (Finetuned T5 and Parrot). Compared with the Parrot and Pegasus models, which provide similar results, the Finetuned T5 model generates paraphrases that vary less from the original sentence but preserve more of its meaning. The main advantage of the Finetuned T5 model, however, is its average execution time, which is around one second shorter than that of the other models considered.
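
The comparison above rests on two quantities: how much a paraphrase varies from the original sentence, and how long a model takes on average to produce it. The abstract does not state how either was measured, so the following is only a minimal sketch under stated assumptions. `lexical_variation` uses a simple word-set Jaccard distance as a stand-in for a variation metric, and `toy_paraphraser` is a hypothetical placeholder for a real model call; neither is taken from the paper.

```python
import time
from typing import Callable, List


def lexical_variation(original: str, paraphrase: str) -> float:
    """Return 1 - Jaccard similarity of the lowercase word sets.

    0.0 means both sentences use the same words; 1.0 means no word is shared.
    Assumption: whitespace tokenisation, so punctuation stays attached to words.
    """
    a = set(original.lower().split())
    b = set(paraphrase.lower().split())
    if not a and not b:
        return 0.0
    return 1.0 - len(a & b) / len(a | b)


def average_latency(paraphraser: Callable[[str], str], sentences: List[str]) -> float:
    """Return the average wall-clock seconds per paraphraser call."""
    if not sentences:
        raise ValueError("sentences must not be empty")
    start = time.perf_counter()
    for sentence in sentences:
        paraphraser(sentence)
    return (time.perf_counter() - start) / len(sentences)


def toy_paraphraser(sentence: str) -> str:
    """Hypothetical stand-in for a model call (not one of the evaluated models)."""
    return sentence.replace("hello", "hi")


if __name__ == "__main__":
    source = "hello how are you today"
    candidate = toy_paraphraser(source)
    print("paraphrase:", candidate)
    print("variation:", lexical_variation(source, candidate))
    print("avg seconds per call:", average_latency(toy_paraphraser, [source] * 100))
```

A word-overlap score of this kind only captures surface variation. Judging whether the meaning is preserved would need a semantic similarity measure, which this sketch does not attempt.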

References

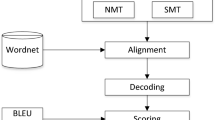

Bannard, C., Callison-Burch, C.: Paraphrasing with bilingual parallel corpora. In: ACL’05, pp. 597–604. ACL, Michigan, USA (2005)

Egonmwan, E., Chali, Y.: Transformer and seq2seq model for paraphrase generation. In: WNGT, pp. 249–255. ACL, Hong Kong (2019)

Fenogenova, A.: Russian paraphrasers: paraphrase with transformers. In: BSNLP, pp. 11–19. ACL, Kiyv, Ukraine (2021)

Mallinson, J., Sennrich, R., Lapata, M.: Paraphrasing revisited with neural machine translation. In: EACL, vol. 1, Long Papers, pp. 881–893. ACL, Valencia, Spain (2017)

Papineni, K., Roukos, S., Ward, T., Zhu, W.: BLEU: a method for automatic evaluation of machine translation. In: ACL’02, pp. 311–318. ACL, Philadelphia, USA (2002)

Prakash, A., Hasan, S.A., Lee, K., Datla, V., Qadir, A., Liu, J., Farri, O.: Neural paraphrase generation with stacked residual LSTM networks. In: COLING 2016: Technical Papers, pp. 2923–2934. COLING, Osaka, Japan (2016)

Raffel, C., Shazeer, N., Roberts, A., Lee, K., Narang, S., Matena, M., Zhou, Y., Li, W., Liu, P.J.: Exploring the limits of transfer learning with a unified text-to-text transformer. JMLR 21(140), 1–67 (2020)

ROS Messages. http://wiki.ros.org/Messages. Last accessed 19 Apr 2022

Salichs, M.A., Castro González, Á., Salichs, E., Fernández Rodicio, E., Maroto Gómez, M., Gamboa Montero, J.J., Marqués Villarroya, S., Castillo, J.C., Alonso Martín, F., Malfaz, M.: Mini: a new social robot for the elderly. Int. J. Soc. Robot. (12), 1231–1249 (2020)

Shiwa, T., Kanda, T., Imai, M., Ishiguro, H., Hagita, N., Anzai, Y.: How quickly should communication robots respond? In: ACM/IEEE International Conference on HRI, pp. 153–160 (2008)

Sutskever, I., Vinyals, O., Le, Q. V.: Sequence to sequence learning with neural networks. In: NIPS, vol. 2, pp. 3104–3112. Montreal, Canada (2014)

Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A., Kaiser, Ł., Polosukhin, I.: Attention is all you need. In: NIPS, pp. 6000–6010. California, USA (2017)

Witteveen, S., Andrews, M.: Paraphrasing with large language models. In: WNGT, pp. 215–220. ACL, Hong Kong (2019)

Xue, L., Constant, N., Roberts, A., Kale, M., Al-Rfou, R., Siddhant, A., Barua, A., Raffel, C.: mT5: a massively multilingual pre-trained text-to-text transformer. In: NAACL: Human Language Technologies, pp. 483–498. ACL (2021)

Yang, Y., Zhang, Y., Tar, C., Baldridge, J.: PAWS-X: a cross-lingual adversarial dataset for paraphrase identification. In: IJCNLP, pp. 3685–3690. ACL, Hong Kong, China (2019)

Zhang, T., Kishore, V., Wu, F., Weinberger, K.Q., Artzi, Y.: BERTScore: evaluating text generation with BERT. In: ICLR (2020)

Zhang, J., Zhao, Y., Saleh, M., Liu, P.: PEGASUS: pre-training with extracted gap-sentences for abstractive summarization. In: ICML, pp. 11328–11339 (2020)

Acknowledgement

This work was partially supported by the project Robots sociales para estimulación física, cognitiva y afectiva de mayores (ROSES), RTI2018-096338-B-I00, funded by Agencia Estatal de Investigación (AEI), Ministerio de Ciencia, Innovación y Universidades. This publication is part of the R&D&I project PLEC2021-007819, funded by MCIN/AEI/10.13039/501100011033 and by the European Union NextGenerationEU/PRTR. This work has been supported by the Madrid Government (Comunidad de Madrid-Spain) under the Multiannual Agreement with UC3M (“Fostering Young Doctors Research”, SMM4HRI-CM-UC3M), and in the context of the V PRICIT (Research and Technological Innovation Regional Programme).

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Martín Galván, L., Fernández-Rodicio, E., Sevilla Salcedo, J., Castro-González, Á., Salichs, M.A. (2023). Using Deep Learning for Implementing Paraphrasing in a Social Robot. In: Julián, V., Carneiro, J., Alonso, R.S., Chamoso, P., Novais, P. (eds) Ambient Intelligence—Software and Applications—13th International Symposium on Ambient Intelligence. ISAmI 2022. Lecture Notes in Networks and Systems, vol 603. Springer, Cham. https://doi.org/10.1007/978-3-031-22356-3_21

DOI: https://doi.org/10.1007/978-3-031-22356-3_21

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-22355-6

Online ISBN: 978-3-031-22356-3

eBook Packages: Intelligent Technologies and Robotics (R0)