Abstract

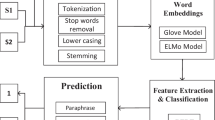

Information Disguise (ID), a part of computational ethics in Natural Language Processing (NLP), is concerned with best practices for paraphrasing text so that authors’ posts on the Internet cannot be used without their consent. Research on ID is especially important when authors’ online writing pertains to sensitive domains, e.g., mental health. Researchers have used AI-based automated word spinners (e.g., SpinRewriter, WordAI) to paraphrase content. However, these tools fail to satisfy the purpose of ID, because their paraphrased content still leads back to the source when queried on search engines. There is limited prior work on judging the effectiveness of paraphrasing methods for ID against search engines or their proxies, neural retriever (NeurIR) models. We propose a framework in which, for a given sentence from an author’s post, we iteratively perturb the sentence in the direction of a paraphrase, attempting to confuse the search mechanism of a NeurIR system when the sentence is issued to it as a query. Our experiments use the subreddit “r/AmItheAsshole” as the source of public content and Dense Passage Retriever as a NeurIR-based proxy for search engines. Our work introduces a novel method of ranking phrase importance using perplexity scores and performs multi-level phrase substitutions via beam search. Our multi-phrase substitution scheme succeeds in disguising sentences 82% of the time and hence takes an essential step towards enabling researchers to disguise sensitive content effectively before making it public. The code for our approach is available at https://github.com/idecir/idecir-Towards-Effective-Paraphrasing-for-Information-Disguise.
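The idea of multi-level phrase substitution via beam search can be illustrated with a minimal sketch. This is not the authors' implementation: the bag-of-words `similarity` function is a toy stand-in for a neural retriever such as Dense Passage Retriever, the `candidates` dictionary of substitute phrases is hand-written, and the order in which phrase positions are visited is an assumption (the paper ranks phrases by a perplexity-based importance score, which is not reproduced here). All names are hypothetical.

```python
from collections import Counter
import math


def similarity(a, b):
    """Bag-of-words cosine similarity; a toy stand-in for a retriever score."""
    va, vb = Counter(a.lower().split()), Counter(b.lower().split())
    dot = sum(va[t] * vb[t] for t in va)
    norm = math.sqrt(sum(v * v for v in va.values()) * sum(v * v for v in vb.values()))
    return dot / norm if norm else 0.0


def beam_disguise(phrases, candidates, source_doc, beam_width=2):
    """Substitute phrases one position at a time, keeping the `beam_width`
    sentence variants that are least similar to the source document."""
    beam = [list(phrases)]
    for position in sorted(candidates):  # visiting order is an assumption
        expanded = []
        for variant in beam:
            expanded.append(variant)  # option: leave this phrase unchanged
            for substitute in candidates[position]:
                changed = list(variant)
                changed[position] = substitute
                expanded.append(changed)
        # Lower similarity to the source means a better disguise.
        expanded.sort(key=lambda v: similarity(" ".join(v), source_doc))
        beam = expanded[:beam_width]
    return " ".join(beam[0])


# Hypothetical example data, not taken from the paper's dataset.
source_doc = "my roommate ate my leftovers and refused to apologise"
phrases = ["my roommate", "ate", "my leftovers"]
candidates = {0: ["a flatmate"], 2: ["the food I had saved"]}

print(beam_disguise(phrases, candidates, source_doc))
```

In a realistic setting the similarity score would come from the retriever itself, and the candidate phrases from a paraphrase generator constrained to preserve meaning; the sketch checks neither semantic fidelity nor fluency.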

A. Agarwal and S. Gupta—Authors contributed equally.

M Gaur—Research with KAI\(^2\) Lab @ UMBC.

Notes

1. A source document has high locatability if a search engine retrieves it in the top-K results when queried with one of the sentences within the document.
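The locatability definition in the note above amounts to a top-K membership check, sketched below under stated assumptions. The bag-of-words cosine scorer is a toy replacement for a real search engine or neural retriever, and the corpus, document identifiers, and function names are all hypothetical.

```python
from collections import Counter
import math


def bow_vector(text):
    """Lower-cased bag-of-words counts; a toy stand-in for a dense encoder."""
    return Counter(text.lower().split())


def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def source_rank(query, corpus, source_id):
    """1-based rank of the source document when the corpus is sorted by
    similarity to the query (ties broken by document id)."""
    q = bow_vector(query)
    scores = {doc_id: cosine(q, bow_vector(text)) for doc_id, text in corpus.items()}
    ranked = sorted(scores, key=lambda d: (-scores[d], d))
    return ranked.index(source_id) + 1


def is_locatable(query, corpus, source_id, k):
    """True if the source document appears in the top-k results for the query."""
    return source_rank(query, corpus, source_id) <= k


# Hypothetical corpus, not taken from the paper's dataset.
corpus = {
    "post_a": "my roommate ate my leftovers and refused to apologise",
    "post_b": "my sister borrowed my car without asking",
    "post_c": "the landlord raised the rent again this year",
}

print(is_locatable("my roommate ate my leftovers", corpus, "post_a", k=1))
print(is_locatable("a flatmate finished the food I had saved", corpus, "post_a", k=1))
```

Under this reading, a disguise succeeds for a given K when the paraphrased sentence no longer retrieves its source document within the top-K results.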

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Agarwal, A., Gupta, S., Bonagiri, V., Gaur, M., Reagle, J., Kumaraguru, P. (2023). Towards Effective Paraphrasing for Information Disguise. In: Kamps, J., et al. Advances in Information Retrieval. ECIR 2023. Lecture Notes in Computer Science, vol 13981. Springer, Cham. https://doi.org/10.1007/978-3-031-28238-6_22

DOI: https://doi.org/10.1007/978-3-031-28238-6_22

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-28237-9

Online ISBN: 978-3-031-28238-6

eBook Packages: Computer Science, Computer Science (R0)