Abstract

As deep learning becomes mainstream in natural language processing, the need for suitable active learning methods is becoming unprecedentedly urgent. Active Learning (AL) methods based on nearest neighbor classifiers have been proposed and have demonstrated superior results. However, existing nearest neighbor classifiers are not suitable for classifying mutually exclusive classes because inter-class discrepancy cannot be assured. As a result, informative samples in the margin area cannot be discovered and AL performance is damaged. To this end, we propose a novel Nearest neighbor Classifier with Margin penalty for Active Learning (NCMAL). First, mandatory margin penalties are added between classes, so that both inter-class discrepancy and intra-class compactness are assured. Second, a novel sample selection strategy is proposed to discover informative samples within the margin area. To demonstrate the effectiveness of the method, we conduct extensive experiments on three real-world datasets, comparing against other state-of-the-art methods. The experimental results demonstrate that our method achieves better results with fewer annotated samples than all baseline methods.
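
The abstract does not spell out the loss or the selection rule, so the following is only a minimal sketch of the two ideas it names, not the authors' formulation. It assumes class prototypes compared by cosine similarity, an additive angular margin on the true class (in the style of ArcFace), and a selection rule that picks the pool samples whose two best prototype similarities are closest. All function names, the `margin` and `scale` values, and the toy data are hypothetical.

```python
import math

def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv)

def margin_logits(x, prototypes, label, margin=0.3, scale=10.0):
    """Scaled cosine logits with an additive angular margin on the true class.

    Assumption: the margin penalty is applied as an angle added to the
    true-class term, which forces samples closer to their own prototype.
    """
    logits = []
    for k, p in enumerate(prototypes):
        cos = max(-1.0, min(1.0, cosine(x, p)))  # clamp for acos
        theta = math.acos(cos)
        if k == label:
            theta = min(math.pi, theta + margin)  # penalise the true class
        logits.append(scale * math.cos(theta))
    return logits

def cross_entropy(logits, label):
    """Numerically stable softmax cross-entropy for one sample."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(l - m) for l in logits))
    return log_z - logits[label]

def margin_gap(x, prototypes):
    """Gap between the two highest prototype similarities (small = ambiguous)."""
    sims = sorted((cosine(x, p) for p in prototypes), reverse=True)
    return sims[0] - sims[1]

def select_for_labeling(pool, prototypes, budget):
    """Indices of the `budget` unlabeled samples with the smallest gap.

    Assumption: 'within the margin area' is approximated by a small gap.
    """
    ranked = sorted(range(len(pool)), key=lambda i: margin_gap(pool[i], prototypes))
    return ranked[:budget]

# Toy data: two class prototypes and three unlabeled samples.
prototypes = [[1.0, 0.0], [0.0, 1.0]]
pool = [[0.9, 0.1], [0.55, 0.45], [0.1, 0.95]]
picked = select_for_labeling(pool, prototypes, budget=1)
```

In this toy setup the sample lying between the two prototypes is the one chosen for annotation, and a non-zero margin raises the training loss on a correctly classified sample, which is what pushes classes apart during training.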

References

Ash, J.T., Zhang, C., Krishnamurthy, A., Langford, J., Agarwal, A.: Deep batch active learning by diverse, uncertain gradient lower bounds. In: 8th International Conference on Learning Representations, ICLR 2020, Addis Ababa, Ethiopia, 26–30 April 2020. OpenReview.net (2020). https://openreview.net/forum?id=ryghZJBKPS

Culotta, A., McCallum, A.: Reducing labeling effort for structured prediction tasks. In: AAAI, vol. 5, pp. 746–751 (2005)

Deng, J., Guo, J., Xue, N., Zafeiriou, S.: Arcface: additive angular margin loss for deep face recognition. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 4690–4699 (2019)

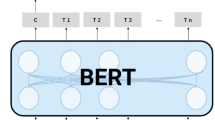

Devlin, J., Chang, M., Lee, K., Toutanova, K.: BERT: pre-training of deep bidirectional transformers for language understanding. In: Burstein, J., Doran, C., Solorio, T. (eds.) Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, NAACL-HLT 2019, Minneapolis, MN, USA, 2–7 June 2019, Volume 1 (Long and Short Papers), pp. 4171–4186. Association for Computational Linguistics (2019). https://doi.org/10.18653/v1/n19-1423

Dor, L.E., et al.: Active learning for BERT: an empirical study. In: Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP), pp. 7949–7962 (2020)

Gal, Y., Islam, R., Ghahramani, Z.: Deep Bayesian active learning with image data. In: International Conference on Machine Learning, pp. 1183–1192. PMLR (2017)

Gissin, D., Shalev-Shwartz, S.: Discriminative active learning. arXiv preprint arXiv:1907.06347 (2019)

Houlsby, N., Huszár, F., Ghahramani, Z., Lengyel, M.: Bayesian active learning for classification and preference learning. arXiv preprint arXiv:1112.5745 (2011)

Huang, J., Child, R., Rao, V., Liu, H., Satheesh, S., Coates, A.: Active learning for speech recognition: the power of gradients. arXiv preprint arXiv:1612.03226 (2016)

Kontorovich, A., Sabato, S., Urner, R.: Active nearest-neighbor learning in metric spaces. J. Mach. Learn. Res. 18, 195:1–195:38 (2017). http://jmlr.org/papers/v18/16-499.html

Lai, S., Xu, L., Liu, K., Zhao, J.: Recurrent convolutional neural networks for text classification. In: Twenty-Ninth AAAI Conference on Artificial Intelligence (2015)

Lan, Z., Chen, M., Goodman, S., Gimpel, K., Sharma, P., Soricut, R.: ALBERT: a lite BERT for self-supervised learning of language representations. In: 8th International Conference on Learning Representations, ICLR 2020, Addis Ababa, Ethiopia, 26–30 April 2020. OpenReview.net (2020). https://openreview.net/forum?id=H1eA7AEtvS

Lewis, D.D., Gale, W.A.: A sequential algorithm for training text classifiers. In: Croft, B.W., van Rijsbergen, C.J. (eds.) SIGIR ’94, pp. 3–12. Springer, London (1994). https://doi.org/10.1007/978-1-4471-2099-5_1

Li, C., et al.: Unsupervised active learning via subspace learning. In: Proceedings of the AAAI Conference on Artificial Intelligence, vol. 35, pp. 8332–8339 (2021)

Loshchilov, I., Hutter, F.: Decoupled weight decay regularization. In: 7th International Conference on Learning Representations, ICLR 2019, New Orleans, LA, USA, 6–9 May 2019. OpenReview.net (2019). https://openreview.net/forum?id=Bkg6RiCqY7

Van der Maaten, L., Hinton, G.: Visualizing data using t-SNE. J. Mach. Learn. Res. 9(11) (2008)

Nafa, Y., et al.: Active deep learning on entity resolution by risk sampling. Knowl.-Based Syst. 236, 107729 (2022)

Nguyen, C.V., Ho, L.S.T., Xu, H., Dinh, V., Nguyen, B.T.: Bayesian active learning with abstention feedbacks. Neurocomputing 471, 242–250 (2022)

Nguyen, Q.P., Low, B.K.H., Jaillet, P.: An information-theoretic framework for unifying active learning problems. In: Proceedings of AAAI, pp. 9126–9134 (2021)

Prabhu, S., Mohamed, M., Misra, H.: Multi-class text classification using BERT-based active learning. In: Dragut, E.C., Li, Y., Popa, L., Vucetic, S. (eds.) 3rd Workshop on Data Science with Human in the Loop, DaSH@KDD, Virtual Conference, 15 August 2021 (2021). https://drive.google.com/file/d/1xVy4p29UPINmWl8Y7OospyQgHiYfH4wc/view

Ren, P., et al.: A survey of deep active learning. ACM Comput. Surv. 54(9), 180:1–180:40 (2022). https://doi.org/10.1145/3472291

Scheffer, T., Decomain, C., Wrobel, S.: Active hidden Markov models for information extraction. In: Hoffmann, F., Hand, D.J., Adams, N., Fisher, D., Guimaraes, G. (eds.) IDA 2001. LNCS, vol. 2189, pp. 309–318. Springer, Heidelberg (2001). https://doi.org/10.1007/3-540-44816-0_31

Sener, O., Savarese, S.: Active learning for convolutional neural networks: a core-set approach. In: 6th International Conference on Learning Representations, ICLR 2018, Vancouver, BC, Canada, 30 April–3 May 2018, Conference Track Proceedings. OpenReview.net (2018). https://openreview.net/forum?id=H1aIuk-RW

Settles, B.: Active learning literature survey (2009)

Settles, B., Craven, M.: An analysis of active learning strategies for sequence labeling tasks. In: Proceedings of the 2008 Conference on Empirical Methods in Natural Language Processing, pp. 1070–1079 (2008)

Wana, F., Yuana, T., Fua, M., Jib, X., Yea, Q.H.Q.: Nearest neighbor classifier embedded network for active learning. In: Proceedings of the AAAI Conference on Artificial Intelligence, vol. 35, pp. 10041–10048 (2021)

Yoo, D., Kweon, I.S.: Learning loss for active learning. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 93–102 (2019)

Zhou, B., Cai, X., Zhang, Y., Guo, W., Yuan, X.: Mtaal: multi-task adversarial active learning for medical named entity recognition and normalization. In: Proceedings of the AAAI Conference on Artificial Intelligence, vol. 35, pp. 14586–14593 (2021)

Zhu, J., Wang, H., Yao, T., Tsou, B.K.: Active learning with sampling by uncertainty and density for word sense disambiguation and text classification. In: Proceedings of the 22nd International Conference on Computational Linguistics (Coling 2008), pp. 1137–1144 (2008)

Acknowledgement

This work was supported by the National Natural Science Foundation of China (Grant Nos. 61702043 and 72274022).

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Cao, Y., Gao, Z., Hu, J., Yang, M., Chen, J. (2023). Nearest Neighbor Classifier with Margin Penalty for Active Learning. In: Tanveer, M., Agarwal, S., Ozawa, S., Ekbal, A., Jatowt, A. (eds) Neural Information Processing. ICONIP 2022. Lecture Notes in Computer Science, vol 13623. Springer, Cham. https://doi.org/10.1007/978-3-031-30105-6_32

DOI: https://doi.org/10.1007/978-3-031-30105-6_32

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-30104-9

Online ISBN: 978-3-031-30105-6

eBook Packages: Computer Science, Computer Science (R0)