Abstract

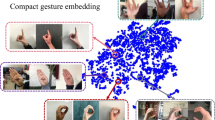

In recent years, gesture recognition has advanced remarkably, yet it remains constrained either by the limited information available in a single modality or by the requirement that multiple modalities be present simultaneously. This paper proposes a novel visual-audio modal gesture embedding framework that absorbs information from auxiliary modalities to enhance performance. The framework includes two main learning components: multimodal joint training and visual-audio modal embedding training. Both help to explore the fundamental semantic information of gestures, the former through a shared recognition network and the latter through a shared gesture embedding space. A framework trained with this method can efficiently exploit the complementary information from other modalities. We experiment on a large-scale gesture recognition dataset. The results show that the proposed framework is competitive with or superior to other outstanding methods, underscoring the importance of the proposed visual-audio learning for gesture recognition.
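The abstract does not state the training objective used for the shared gesture embedding space. As a minimal sketch only, one common way to pull two modalities into a shared space is a triplet-style margin loss: a visual embedding is drawn toward the audio embedding of the same gesture and pushed away from the audio embedding of a different gesture. The function names, the margin value, and the toy vectors below are all assumptions made for illustration and are not taken from the paper.

```python
import math


def l2_normalize(v):
    """Scale a vector to unit length (returned unchanged if it is all zeros)."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v] if norm > 0 else list(v)


def squared_distance(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def cross_modal_triplet_loss(anchor_visual, positive_audio, negative_audio, margin=0.2):
    """Hypothetical cross-modal margin loss (an assumption, not the paper's loss).

    The loss is zero once the visual anchor is closer to the matching audio
    embedding than to the non-matching one by at least `margin`.
    """
    a = l2_normalize(anchor_visual)
    p = l2_normalize(positive_audio)
    n = l2_normalize(negative_audio)
    return max(0.0, squared_distance(a, p) - squared_distance(a, n) + margin)


# Toy embeddings (made up): the first two stand for the same gesture class.
visual = [0.9, 0.1, 0.0]
audio_same = [0.8, 0.2, 0.1]
audio_other = [0.0, 0.1, 0.9]

loss_aligned = cross_modal_triplet_loss(visual, audio_same, audio_other)
loss_misaligned = cross_modal_triplet_loss(visual, audio_other, audio_same)
print(loss_aligned, loss_misaligned)
```

In this sketch the aligned triplet incurs no penalty, while the swapped triplet incurs a positive loss, which is the signal that would drive the two modality encoders toward a common space during training.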

Acknowledgment

This work is supported by the National Natural Science Foundation of China under Grant Nos. 61976132, 61991411, and U1811461, and by the Natural Science Foundation of Shanghai under Grant No. 19ZR1419200.

We thank the High Performance Computing Center of Shanghai University and the Shanghai Engineering Research Center of Intelligent Computing System (No. 19DZ2252600) for providing the computing resources.

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Cao, Y., Fang, Y., Xiao, S. (2023). Enhance Gesture Recognition via Visual-Audio Modal Embedding. In: Tanveer, M., Agarwal, S., Ozawa, S., Ekbal, A., Jatowt, A. (eds) Neural Information Processing. ICONIP 2022. Lecture Notes in Computer Science, vol 13624. Springer, Cham. https://doi.org/10.1007/978-3-031-30108-7_33

DOI: https://doi.org/10.1007/978-3-031-30108-7_33

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-30107-0

Online ISBN: 978-3-031-30108-7

eBook Packages: Computer Science, Computer Science (R0)