Abstract

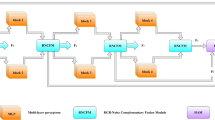

With the development of multimedia technology, image tampering has become easier in recent years. The spread of tampered images has many adverse effects, so image tamper detection techniques urgently need to be developed. We present an image tamper localization and recognition method based on Faster R-CNN with a dual-stream input of high-frequency and low-frequency Discrete Cosine Transform (DCT) components. To capture subtle tampering edges that are not visible in the RGB domain, we extract high-frequency features from the image and embed them into the model as an additional data stream. The network uses the low-frequency image as the main input to detect object consistency across different regions. The high-frequency stream complements it, strengthening region-consistency detection and supporting object tampering detection in the dual-stream model. Extensive experiments are performed on the CASIA V2.0 image dataset. The results demonstrate that faster-rcnn-w outperforms existing mainstream image tampering detection methods on several evaluation metrics.
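The abstract does not state how the DCT spectrum is divided into the two input streams. The sketch below shows one way to split a grayscale image into low-frequency and high-frequency components. It assumes a whole-image orthonormal 2-D DCT-II and a hypothetical diagonal cutoff, where coefficients with u + v < cutoff count as low frequency. The function names `dct_matrix` and `split_frequency` and the `cutoff` parameter are illustrative and do not come from the authors' code.

```python
import numpy as np


def dct_matrix(n: int) -> np.ndarray:
    """Orthonormal DCT-II basis matrix (n x n); its inverse is its transpose."""
    k = np.arange(n)[:, None]  # frequency index (rows)
    i = np.arange(n)[None, :]  # sample index (columns)
    m = np.cos(np.pi * (2 * i + 1) * k / (2 * n))
    m[0, :] *= 1.0 / np.sqrt(2.0)  # scale the DC row so the matrix is orthonormal
    return m * np.sqrt(2.0 / n)


def split_frequency(gray: np.ndarray, cutoff: int):
    """Split a 2-D image into low- and high-frequency parts via a 2-D DCT.

    Assumption (hypothetical): coefficients with u + v < cutoff are "low",
    all remaining coefficients are "high". The two parts sum to the input.
    """
    h, w = gray.shape
    dh, dw = dct_matrix(h), dct_matrix(w)
    coeffs = dh @ gray @ dw.T  # forward 2-D DCT
    u = np.arange(h)[:, None]
    v = np.arange(w)[None, :]
    low_mask = (u + v) < cutoff
    # Inverse 2-D DCT of each masked spectrum gives the two image-domain streams.
    low = dh.T @ (coeffs * low_mask) @ dw
    high = dh.T @ (coeffs * ~low_mask) @ dw
    return low, high
```

In a dual-stream setup, `low` would feed the main branch and `high` the auxiliary branch. Because the DCT basis is orthonormal, the two outputs sum exactly to the input image, so the split itself discards no information.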

Data Availability

The experimental data used to support the findings of this study are available from the corresponding author upon request.

Acknowledgment

This work has been supported in part by the Natural Science Foundation of China under Grant No. 62202211, by the National Social Science Foundation under Grant No. 19CTJ014, and by the Science and Technology Research Project of Jiangxi Provincial Department of Education (No. GJJ170234).

Ethics declarations

The authors declare that they have no conflicts of interest.

Copyright information

© 2023 ICST Institute for Computer Sciences, Social Informatics and Telecommunications Engineering

About this paper

Cite this paper

Deng, L., Peng, J., Deng, W., Liu, K., Cao, Z., Wang, W. (2023). A Dual-Stream Input Faster-CNN Model for Image Forgery Detection. In: Cao, Y., Shao, X. (eds) Mobile Networks and Management. MONAMI 2022. Lecture Notes of the Institute for Computer Sciences, Social Informatics and Telecommunications Engineering, vol 474. Springer, Cham. https://doi.org/10.1007/978-3-031-32443-7_7

DOI: https://doi.org/10.1007/978-3-031-32443-7_7

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-32442-0

Online ISBN: 978-3-031-32443-7

eBook Packages: Computer Science, Computer Science (R0)