Abstract

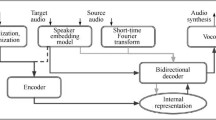

Organic dysphonia can lead to vocal impairments. Recordings of patients' impaired voices could allow them to use voice cloning systems. Voice cloning is the process of producing speech that matches a target speaker's voice from textual input and an audio sample of that speaker, and it can be used in such a context. However, dysphonic patients may only be able to produce speech with specific or limited phonetic content.
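Multispeaker TTS work often structures such a system as three stages. A speaker encoder summarizes the reference audio as a fixed-size embedding. A synthesizer maps the text plus that embedding to acoustic frames. A vocoder then turns those frames into a waveform. The Python sketch below only illustrates that data flow under this assumption. `VoiceCloner` and the toy stand-in functions are hypothetical and are not the system evaluated in the paper.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence

Vector = List[float]

@dataclass
class VoiceCloner:
    # Hypothetical stages (assumed three-stage design, not the paper's system).
    encode_speaker: Callable[[Sequence[float]], Vector]  # reference audio -> speaker embedding
    synthesize: Callable[[str, Vector], List[Vector]]     # text + embedding -> acoustic frames
    vocode: Callable[[List[Vector]], List[float]]         # acoustic frames -> waveform samples

    def clone(self, text: str, reference_audio: Sequence[float]) -> List[float]:
        # In this sketch the reference sample reaches the output only through
        # the speaker embedding, so its phonetic content matters only via that vector.
        embedding = self.encode_speaker(reference_audio)
        frames = self.synthesize(text, embedding)
        return self.vocode(frames)

# Toy stand-ins, for illustration only.
def toy_encoder(audio):
    mean = sum(audio) / len(audio)
    return [mean, max(audio) - min(audio)]  # a 2-D "embedding"

def toy_synthesizer(text, emb):
    # One "frame" per character, each carrying the speaker embedding.
    return [[float(ord(c))] + emb for c in text]

def toy_vocoder(frames):
    return [sum(f) for f in frames]  # one "sample" per frame

cloner = VoiceCloner(toy_encoder, toy_synthesizer, toy_vocoder)
out = cloner.clone("ab", [0.0, 1.0, 2.0, 3.0])
```

Keeping the stages as injected callables makes it explicit that the same synthesizer can be reused with any reference sample. Only the embedding changes from speaker to speaker.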

Considering a complete voice cloning process, we investigate the relation between phonetic content and sample length, and their impact on output quality and speaker similarity, using phonetically limited artificial voices.
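One way to picture "phonetically limited" reference material is to keep only the utterances whose phonemes all fall within an allowed subset, then cap their total duration. The sketch below assumes each utterance already carries a phoneme transcription and a duration in seconds. The `Utterance` type, the phoneme symbols, the toy corpus and the greedy selection rule are illustrative assumptions, not the procedure used in the paper to build its artificial voices.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List, Tuple

@dataclass(frozen=True)
class Utterance:
    text: str
    phonemes: Tuple[str, ...]  # phoneme symbols (illustrative, SAMPA-like)
    duration_s: float          # duration in seconds

def limited_reference(utterances: Iterable[Utterance],
                      allowed: FrozenSet[str],
                      max_duration_s: float) -> List[Utterance]:
    """Greedily keep utterances that use only `allowed` phonemes, within a duration budget."""
    selected: List[Utterance] = []
    total = 0.0
    for utt in utterances:
        if not set(utt.phonemes) <= allowed:
            continue  # contains a phoneme outside the allowed inventory
        if total + utt.duration_s > max_duration_s:
            continue  # would exceed the length budget; later, shorter ones may still fit
        selected.append(utt)
        total += utt.duration_s
    return selected

# Hypothetical toy corpus.
corpus = [
    Utterance("ma", ("m", "a"), 0.4),
    Utterance("papa", ("p", "a", "p", "a"), 0.6),
    Utterance("lune", ("l", "y", "n"), 0.5),
    Utterance("ami", ("a", "m", "i"), 0.5),
]
subset = limited_reference(corpus, frozenset({"m", "a", "p"}), max_duration_s=1.2)
print([u.text for u in subset])
```

Varying `allowed` and `max_duration_s` independently is what separates the two factors studied here, phonetic content and sample length.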

Analysis of the speaker embeddings used to capture the voices shows an impact of the phonetic content. However, we were not able to observe these variations in the final generated speech.
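Cosine similarity is a common way to compare speaker embeddings. The sketch below pools frame-level vectors into an utterance embedding by averaging and L2-normalizing them. It then scores an embedding computed from a phonetically limited sample against one computed from a sample with fuller coverage. The pooling step, the toy vectors and the function names are assumptions made for illustration. They are not the embedding extractor analysed in the paper.

```python
import math
from typing import List, Sequence

Vector = List[float]

def l2_normalize(v: Sequence[float]) -> Vector:
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def utterance_embedding(frame_vectors: Sequence[Sequence[float]]) -> Vector:
    """Average frame-level vectors, then L2-normalize (assumed d-vector-style pooling)."""
    dim = len(frame_vectors[0])
    mean = [sum(f[i] for f in frame_vectors) / len(frame_vectors) for i in range(dim)]
    return l2_normalize(mean)

def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

# Hypothetical frame vectors: broader phonetic coverage vs. a limited subset.
full = utterance_embedding([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
limited = utterance_embedding([[1.0, 0.0], [1.0, 0.2]])
score = cosine_similarity(full, limited)
print(round(score, 3))
```

A score near 1 means both samples map to nearly the same point in embedding space. A lower score is the kind of embedding-level shift that can be measured even when, as reported above, it does not carry over to the generated speech.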



Acknowledgements

This work was granted access to the HPC resources of IDRIS under the allocation 2023-AD011011870R2 made by GENCI.


Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Wadoux, L., Barbot, N., Chevelu, J., Lolive, D. (2023). Voice Cloning for Voice Disorders: Impact of Phonetic Content. In: Ekštein, K., Pártl, F., Konopík, M. (eds) Text, Speech, and Dialogue. TSD 2023. Lecture Notes in Computer Science, vol 14102. Springer, Cham. https://doi.org/10.1007/978-3-031-40498-6_26


DOI: https://doi.org/10.1007/978-3-031-40498-6_26


Publisher Name: Springer, Cham

Print ISBN: 978-3-031-40497-9

Online ISBN: 978-3-031-40498-6

eBook Packages: Computer Science, Computer Science (R0)