Abstract

A scoring system is a simple decision model that checks a set of features, adds a certain number of points to a total score for each feature that is satisfied, and finally makes a decision by comparing the total score to a threshold. Scoring systems have a long history of active use in safety-critical domains such as healthcare and justice, where they provide guidance for making objective and accurate decisions. Given their genuine interpretability, the idea of learning scoring systems from data is obviously appealing from the perspective of explainable AI. In this paper, we propose a practically motivated extension of scoring systems called probabilistic scoring lists (PSL), as well as a method for learning PSLs from data. Instead of making a deterministic decision, a PSL represents uncertainty in the form of probability distributions. Moreover, in the spirit of decision lists, a PSL evaluates features one by one and stops as soon as a decision can be made with enough confidence. To evaluate our approach, we conduct a case study in the medical domain.
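The two ideas in the abstract can be illustrated with a minimal sketch. It first implements a deterministic scoring system (add up the points of the satisfied features, then compare the total to a threshold). It then implements a probabilistic scoring list that evaluates features one at a time and stops once the estimated probability is confident enough. The function names, the `confidence` parameter, the stopping rule `max(p, 1 - p) >= confidence`, and all scores and probabilities are assumptions made for illustration. They are not taken from the paper, and the learning method the paper proposes is not shown.

```python
from typing import Dict, List, Sequence, Tuple


def scoring_system_decision(
    features: Sequence[bool], scores: Sequence[int], threshold: int
) -> bool:
    """Deterministic scoring system: sum the scores of satisfied features
    and decide positively if the total reaches the threshold."""
    total = sum(s for x, s in zip(features, scores) if x)
    return total >= threshold


def evaluate_psl(
    features: Sequence[bool],
    scores: Sequence[int],
    stage_probs: List[Dict[int, float]],
    confidence: float = 0.9,
) -> Tuple[float, int]:
    """Hypothetical probabilistic scoring list.

    stage_probs[k] maps the total score after k evaluated features to an
    estimated probability of the positive class. Evaluation stops as soon
    as the estimate is confident in either direction (assumed rule).
    Returns the probability estimate and the number of features evaluated.
    """
    total = 0
    p = stage_probs[0][0]  # prior estimate before any feature is checked
    if max(p, 1 - p) >= confidence:
        return p, 0
    for k, (x, s) in enumerate(zip(features, scores), start=1):
        if x:
            total += s
        p = stage_probs[k][total]
        if max(p, 1 - p) >= confidence:
            return p, k  # early stop: remaining features are not needed
    return p, len(scores)


# Illustrative (made-up) scores and probability tables for three features.
SCORES = [2, 1, 1]
STAGE_PROBS = [
    {0: 0.30},
    {0: 0.08, 2: 0.70},
    {0: 0.05, 1: 0.30, 2: 0.60, 3: 0.92},
    {0: 0.02, 1: 0.20, 2: 0.50, 3: 0.80, 4: 0.97},
]

if __name__ == "__main__":
    print(scoring_system_decision([True, False, True], SCORES, threshold=3))
    print(evaluate_psl([True, True, False], SCORES, STAGE_PROBS))
    print(evaluate_psl([False, False, False], SCORES, STAGE_PROBS))
```

In this sketch, a case whose first feature is absent is resolved after a single check, because the stage-1 estimate is already confidently negative. A case with mixed evidence runs through all three features and returns a less certain estimate.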

Notes

- 1.

\(\llbracket P \rrbracket = 1\) if predicate P is true (positive decision) and \(\llbracket P \rrbracket = 0\) if P is false (negative decision).

- 2.

As the importance of a feature \(x_k\), and hence the score \(s_k\), can only be decided relative to other features, the choice of the score for the first feature is ambiguous; assuming this feature to be important, we give it the largest score possible.

- 3.

References

Bösner, S., et al.: Accuracy of symptoms and signs for coronary heart disease assessed in primary care. Br. J. Gener. Pract. 60(575), e246–e257 (2010)

Chevaleyre, Y., Koriche, F., Zucker, J.D.: Rounding methods for discrete linear classification. In: Proceedings of ICML, International Conference on Machine Learning, pp. 651–659 (2013)

Foygel Barber, R., Candes, J., Emmanuel, J., Ramdas, A., Tibshirani, R.J.: The limits of distribution-free conditional predictive inference. Inf. Inference 10(2), 455–482 (2021). https://doi.org/10.1093/imaiai/iaaa017

Fürnkranz, J.: Separate-and-conquer rule learning. Artif. Intell. Rev. 13(1), 3–54 (1999)

Fürnkranz, J., Gamberger, D., Lavrač, N.: Foundations of Rule Learning. Springer, Heidelberg (2012). https://doi.org/10.1007/978-3-540-75197-7. ISBN 978-3-540-75196-0

Hastie, T.J.: Generalized Additive Models. Routledge (2017)

Hüllermeier, E., Waegeman, W.: Aleatoric and epistemic uncertainty in machine learning: an introduction to concepts and methods. Mach. Learn. 110(3), 457–506 (2021). https://doi.org/10.1007/s10994-021-05946-3

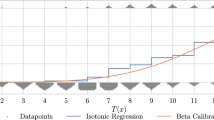

Kull, M., Silva Filho, T., Flach, P.: Beta calibration: a well-founded and easily implemented improvement on logistic calibration for binary classifiers. In: Proceedings of AISTATS, 20th International Conference on Artificial Intelligence and Statistics, vol. 54, pp. 623–631. PMLR (2017)

Moreira, J., Bisig, B., Muwawenimana, P., Basinga, P., Bisoffi, Z., Haegeman, F.: Weighing harm in therapeutic decisions of smear-negative pulmonary tuberculosis. Med. Decis. Making 3, 380–390 (2009)

Možina, M., Demšar, J., Bratko, I., Žabkar, J.: Extreme value correction: a method for correcting optimistic estimations in rule learning. Mach. Learn. 108(2), 297–329 (2018). https://doi.org/10.1007/s10994-018-5731-3

Niculescu-Mizil, A., Caruana, R.: Predicting good probabilities with supervised learning. In: Proceedings of ICML, 22nd International Conference on Machine Learning, New York, USA, pp. 625–632 (2005)

Provost, F.J., Domingos, P.: Tree induction for probability-based ranking. Mach. Learn. 52(3), 199–215 (2003)

Rivest, R.L.: Learning decision lists. Mach. Learn. 2, 229–246 (1987)

Senge, R., et al.: Reliable classification: learning classifiers that distinguish aleatoric and epistemic uncertainty. Inf. Sci. 255, 16–29 (2014)

Silva Filho, T., Song, H., Perelló-Nieto, M., Santos-Rodríguez, R., Kull, M., Flach, P.A.: Classifier calibration: how to assess and improve predicted class probabilities: a survey. CoRR, abs/2112.10327 (2021). https://arxiv.org/abs/2112.10327

Simsek, O., Buckmann, M.: On learning decision heuristics. In: Imperfect Decision Makers: Admitting Real-World Rationality, pp. 75–85 (2017)

Six, A., Backus, B., Kelder, J.: Chest pain in the emergency room: value of the heart score. Neth. Hear. J. 16(6), 191–196 (2008)

Subramanian, V., Mascha, E.J., Kattan, M.W.: Developing a clinical prediction score: comparing prediction accuracy of integer scores to statistical regression models. Anesth. Analg. 132(6), 1603–1613 (2021)

Sulzmann, J.-N., Fürnkranz, J.: An empirical comparison of probability estimation techniques for probabilistic rules. In: Gama, J., Costa, V.S., Jorge, A.M., Brazdil, P.B. (eds.) DS 2009. LNCS, vol. 5808, pp. 317–331. Springer, Heidelberg (2009). https://doi.org/10.1007/978-3-642-04747-3_25

Tsalatsanis, A., Hozo, I., Vickers, A., Djulbegovic, B.: A regret theory approach to decision curve analysis: a novel method for eliciting decision makers’ preferences and decision-making. BMC Med. Inform. Decis. Mak. 10, 51 (2010). https://doi.org/10.1186/1472-6947-10-51

Ustun, B., Rudin, C.: Supersparse linear integer models for optimized medical scoring systems. Mach. Learn. 102(3), 349–391 (2016)

Ustun, B., Rudin, C.: Optimized risk scores. In: Proceedings of 23rd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pp. 1125–1134 (2017)

Ustun, B., Rudin, C.: Learning optimized risk scores. J. Mach. Learn. Res. 20(150), 1–75 (2019)

Vovk, V., Petej, I.: Venn-Abers predictors. In: Proceedings of UAI, 30th Conference on Uncertainty in Artificial Intelligence (2014)

Vovk, V., Shafer, G., Nouretdinov, I.: Self-calibrating probability forecasting. In: Proceedings of NIPS, Advances in Neural Information Processing Systems, vol. 16, pp. 1133–1140 (2004)

Wang, C., Han, B., Patel, B., Rudin, C.: In pursuit of interpretable, fair and accurate machine learning for criminal recidivism prediction. J. Quant. Criminol. 39, 1–63 (2022)

Webb, G.I.: Recent progress in learning decision lists by prepending inferred rules. In: Proceedings of the 2nd Singapore International Conference on Intelligent Systems, pp. B280–B285 (1994)

Acknowledgment

We gratefully acknowledge funding by the German Research Foundation (Deutsche Forschungsgemeinschaft, DFG): TRR 318/1 2021 – 438445824 and the German Research Foundation (DFG) within the Collaborative Research Center “On-The-Fly Computing” (SFB 901/3 project no. 160364472).

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Hanselle, J., Fürnkranz, J., Hüllermeier, E. (2023). Probabilistic Scoring Lists for Interpretable Machine Learning. In: Bifet, A., Lorena, A.C., Ribeiro, R.P., Gama, J., Abreu, P.H. (eds) Discovery Science. DS 2023. Lecture Notes in Computer Science, vol 14276. Springer, Cham. https://doi.org/10.1007/978-3-031-45275-8_13

DOI: https://doi.org/10.1007/978-3-031-45275-8_13

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-45274-1

Online ISBN: 978-3-031-45275-8

eBook Packages: Computer Science (R0)