Abstract

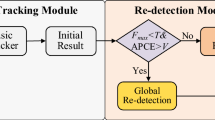

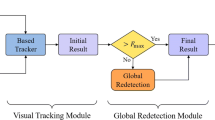

In long-term visual tracking, target occlusion and out-of-view motion are common problems that lead to target drift, and adding re-detection to a short-term tracking algorithm is a general solution. To better handle targets that disappear and reappear during long-term visual tracking, this paper proposes a temporal global re-detection method based on interaction-fusion attention. First, ResNet50 is used as the feature extraction network to obtain deep features of the template and the search region. Then, a new interaction-fusion attention mechanism is added to capture the connections between different feature dimensions. Finally, Temporal ROI Align is introduced to select candidate boxes, which increases the re-detection method's use of historical information and improves the accuracy of target localization. The STMTrack algorithm is selected as the short-term tracker and combined with the proposed re-detection method to construct a long-term tracking algorithm. Experiments on the UAV123, LaSOT, UAV20L, and VOT2018-LT datasets demonstrate the effectiveness of the proposed re-detection method.
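The abstract does not state how the short-term tracker and the re-detector hand control to each other, so the following is only a minimal sketch of one common arrangement, not the authors' implementation. It assumes the short-term tracker reports a confidence score, that tracking is declared lost when the score falls below a threshold, and that the re-detector searches the whole frame with access to a short history of confident results. The names `Prediction`, `track_long_term`, `lost_thr`, `found_thr`, and `max_history` are hypothetical, and the two callables stand in for STMTrack and the proposed re-detection network.

```python
from dataclasses import dataclass
from typing import Any, Callable, List, Optional, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x, y, w, h); the layout is an assumption


@dataclass
class Prediction:
    box: Optional[Box]  # None means the target is reported absent
    score: float        # confidence in [0, 1]


def track_long_term(
    frames: Sequence[Any],
    init_box: Box,
    short_term: Callable[[Any, Box], Prediction],
    redetect: Callable[[Any, List[Tuple[Any, Box]]], Prediction],
    lost_thr: float = 0.5,   # hypothetical threshold for declaring the target lost
    found_thr: float = 0.5,  # hypothetical threshold for accepting a re-detection
    max_history: int = 5,    # hypothetical number of past results kept as context
) -> List[Prediction]:
    """Alternate between local short-term tracking and global re-detection."""
    results: List[Prediction] = []
    history: List[Tuple[Any, Box]] = []  # confident (frame, box) pairs
    prev_box = init_box
    lost = False

    for frame in frames:
        if not lost:
            # Local search around the previous box.
            pred = short_term(frame, prev_box)
            lost = pred.score < lost_thr
        if lost:
            # Global search over the full frame, using past results as context.
            pred = redetect(frame, history)
            if pred.box is not None and pred.score >= found_thr:
                lost = False  # target recovered; resume short-term tracking
            else:
                pred = Prediction(box=None, score=pred.score)
        if pred.box is not None:
            prev_box = pred.box
            history.append((frame, pred.box))
            history = history[-max_history:]
        results.append(pred)
    return results
```

In this sketch, a frame on which neither component is confident yields a `Prediction` with `box=None`, which matches the usual long-term tracking convention of reporting the target as absent rather than guessing a location.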

Supported by the National Natural Science Foundation of China under grant no. 62072370 and the Natural Science Foundation of Shaanxi Province under grant no. 2023-JC-YB-598.

References

Liu, C., Chen, X.F., Bo, C.J., Wang, D.: Long-term visual tracking: review and experimental comparison. Mach. Intell. Res. 19, 1–19 (2022)

Mueller, M., Smith, N., Ghanem, B.: A benchmark and simulator for UAV tracking. In: Leibe, B., Matas, J., Sebe, N., Welling, M. (eds.) ECCV 2016. LNCS, vol. 9905, pp. 445–461. Springer, Cham (2016). https://doi.org/10.1007/978-3-319-46448-0_27

Fan, H., et al.: LaSOT: a high-quality benchmark for large-scale single object tracking. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 5374–5383 (2019)

Lukežič, A., Zajc, L.Č., Vojíř, T., Matas, J., Kristan, M.: Now you see me: evaluating performance in long-term visual tracking. In: Proceedings of the European Conference on Computer Vision (2018)

Kristan, M., et al.: The eighth visual object tracking VOT2020 challenge results. In: Bartoli, A., Fusiello, A. (eds.) ECCV 2020. LNCS, vol. 12539, pp. 547–601. Springer, Cham (2020). https://doi.org/10.1007/978-3-030-68238-5_39

Hong, Z., Chen, Z., Wang, C., Mei, X., Prokhorov, D., Tao, D.: Multi-store tracker (muster): a cognitive psychology inspired approach to object tracking. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 749–758 (2015)

Lukežič, A., Zajc, L.Č., Vojíř, T., Matas, J., Kristan, M.: FuCoLoT – a fully-correlational long-term tracker. In: Jawahar, C.V., Li, H., Mori, G., Schindler, K. (eds.) ACCV 2018. LNCS, vol. 11362, pp. 595–611. Springer, Cham (2019). https://doi.org/10.1007/978-3-030-20890-5_38

Zhu, Z., Wang, Q., Li, B., Wu, W., Yan, J., Hu, W.: Distractor-aware Siamese networks for visual object tracking. In: Proceedings of the European Conference on Computer Vision, pp. 101–117 (2018)

Zhang, Y., Wang, D., Wang, L., Qi, J., Lu, H.: Learning regression and verification networks for long-term visual tracking. arXiv preprint arXiv:1809.04320 (2018)

Yan, B., Zhao, H., Wang, D., Lu, H., Yang, X.: ‘Skimming-Perusal’ tracking: a framework for real-time and robust long-term tracking. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 2385–2393 (2019)

Huang, L., Zhao, X., Huang, K.: GlobalTrack: a simple and strong baseline for long-term tracking. In: Proceedings of the AAAI Conference on Artificial Intelligence, vol. 34, no. 07, pp. 11037–11044 (2020)

Gong, T., Chen, K., Wang, X., et al.: Temporal ROI align for video object recognition. In: Proceedings of the AAAI Conference on Artificial Intelligence, vol. 35, no. 2, pp. 1442–1450 (2021)

Fu, Z., Liu, Q., Fu, Z., Wang, Y.: STMTrack: template-free visual tracking with space-time memory networks. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 13774–13783 (2021)

Wu, Y., Lim, J., Yang, M.H.: Online object tracking: a benchmark. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 2411–2418 (2013)

Li, B., Wu, W., Wang, Q., Zhang, F., Xing, J., Yan, J.: SiamRPN++: evolution of Siamese visual tracking with very deep networks. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 4282–4291 (2019)

Chen, Z., Zhong, B., Li, G., Zhang, S., Ji, R.: Siamese box adaptive network for visual tracking. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 6668–6677 (2020)

Guo, D., Shao, Y., Cui, Y., Wang, Z., Zhang, L., Shen, C.: Graph attention tracking. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 9543–9552 (2021)

Voigtlaender, P., Luiten, J., Torr, P.H.S., Leibe, B.: Siam R-CNN: visual tracking by re-detection. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 6578–6588 (2020)

Zhang, Z., Liu, Y., Wang, X., Li, B., Hu, W.: Learn to match: automatic matching network design for visual tracking. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 13339–13348 (2021)

Dong, X., Shen, J., Shao, L., Porikli, F.: CLNet: a compact latent network for fast adjusting Siamese trackers. In: Vedaldi, A., Bischof, H., Brox, T., Frahm, J.-M. (eds.) ECCV 2020. LNCS, vol. 12365, pp. 378–395. Springer, Cham (2020). https://doi.org/10.1007/978-3-030-58565-5_23

Cao, Z., Fu, C., Ye, J., Li, B., Li, Y.: HiFT: hierarchical feature transformer for aerial tracking. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 15457–15466 (2021)

Cheng, S., Zhong, B., Li, G., Liu, X., Tang, Z., Li, X., Wang, J.: Learning to filter: Siamese relation network for robust tracking. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 4421–4431 (2021)

Zhang, Z., Peng, H., Fu, J., Li, B., Hu, W.: Ocean: object-aware anchor-free tracking. In: Vedaldi, A., Bischof, H., Brox, T., Frahm, J.-M. (eds.) ECCV 2020. LNCS, vol. 12366, pp. 771–787. Springer, Cham (2020). https://doi.org/10.1007/978-3-030-58589-1_46

Bhat, G., Danelljan, M., Gool, L.V., Timofte, R.: Learning discriminative model prediction for tracking. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 6181–6190 (2019)

Dai, K., Zhang, Y., Wang, D., Li, J., Lu, H., Yang, X.: High-performance long-term tracking with meta-updater. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 6298–6307 (2020)

Guo, D., Wang, J., Cui, Y., Wang, Z., Chen, S.: SiamCAR: Siamese fully convolutional classification and regression for visual tracking. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 6269–6277 (2020)

Yang, T., Xu, P., Hu, R., Chai, H., Chan, A.B.: ROAM: recurrently optimizing tracking model. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 6718–6727 (2020)

Acknowledgements

This work is supported by the National Natural Science Foundation of China under grant no. 62072370 and the Natural Science Foundation of Shaanxi Province under grant no. 2023-JC-YB-598.

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Ma, J., Hou, Z., Han, R., Ma, S. (2023). Temporal Global Re-detection Based on Interaction-Fusion Attention in Long-Term Visual Tracking. In: Lu, H., et al. Image and Graphics. ICIG 2023. Lecture Notes in Computer Science, vol 14356. Springer, Cham. https://doi.org/10.1007/978-3-031-46308-2_1

DOI: https://doi.org/10.1007/978-3-031-46308-2_1

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-46307-5

Online ISBN: 978-3-031-46308-2

eBook Packages: Computer Science, Computer Science (R0)