Abstract

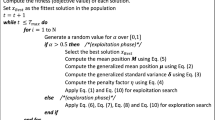

Feature selection is an essential pre-processing step in machine learning: it improves model performance, reduces prediction time, and, more importantly, identifies the most significant features. Identifying those features can also reduce the time and cost of obtaining feature values, for example by requiring fewer sensors or less human effort. This paper proposes a multi-objective Estimation of Distribution Algorithm (EDA) for feature selection tailored to regression problems. The two objectives are to maximize the performance of the learning models and to minimize the number of selected features. We use a Bayesian Network (BN) as the EDA's probability distribution model. The main contribution of this work is the process used to create the BN structure, which aims to capture the redundancy and relevance among features. The BN is also used to create the initial EDA population. Using different datasets, we compare the performance of our proposal with that of other multi-objective algorithms: an EDA with a Bernoulli probability distribution model, NSGA-II, and AGE-MOEA. The experimental results show that the proposed algorithm found solutions with considerably fewer features while achieving model performance comparable to that of the other algorithms. It also generally required less time and fewer objective function evaluations.
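To make the two-objective setting concrete, the following is a minimal sketch of the simplest baseline named in the abstract, an EDA with an independent Bernoulli model per feature. It is not the proposed BN-based algorithm. The regression model (ordinary least squares), the half-and-half holdout split, the probability clipping, and all function and parameter names are assumptions made for illustration only.

```python
import numpy as np


def evaluate(mask, X, y):
    """Return (validation MSE, number of selected features) for a 0/1 mask.

    Assumption: ordinary least squares with an intercept, fitted on the
    first half of the rows and scored on the second half.
    """
    half = len(y) // 2
    cols = mask.astype(bool)
    A_train = np.c_[X[:half][:, cols], np.ones(half)]
    A_val = np.c_[X[half:][:, cols], np.ones(len(y) - half)]
    coef, *_ = np.linalg.lstsq(A_train, y[:half], rcond=None)
    mse = float(np.mean((A_val @ coef - y[half:]) ** 2))
    return mse, int(mask.sum())


def non_dominated(scores):
    """Indices of solutions not dominated by any other (both objectives minimized)."""
    keep = []
    for i, (e_i, k_i) in enumerate(scores):
        dominated = any(
            e_j <= e_i and k_j <= k_i and (e_j < e_i or k_j < k_i)
            for j, (e_j, k_j) in enumerate(scores)
            if j != i
        )
        if not dominated:
            keep.append(i)
    return keep


def bernoulli_eda(X, y, pop_size=40, generations=15, seed=0):
    """Univariate (Bernoulli) EDA: sample masks, keep the non-dominated ones,
    and re-estimate each feature's inclusion probability from them."""
    rng = np.random.default_rng(seed)
    n_features = X.shape[1]
    p = np.full(n_features, 0.5)  # initial inclusion probabilities
    front = []
    for _ in range(generations):
        # Sample a population of binary feature masks from the current model.
        pop = (rng.random((pop_size, n_features)) < p).astype(int)
        scores = [evaluate(ind, X, y) for ind in pop]
        best = non_dominated(scores)
        # Update the model from the selected masks; clipping keeps every
        # feature reachable in later generations.
        p = np.clip(pop[best].mean(axis=0), 0.05, 0.95)
        front = [(pop[i], scores[i]) for i in best]
    # Non-dominated masks of the final generation, with their scores.
    return front
```

Calling `bernoulli_eda(X, y)` on a numeric feature matrix `X` and target vector `y` returns a list of `(mask, (mse, n_selected))` pairs that trade prediction error against subset size. A BN-based EDA would replace the independent probabilities `p` with a model that also encodes dependencies among features.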

Copyright information

© 2024 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

López, J.A., Morales-Osorio, F., Lara, M., Velasco, J., Sánchez, C.N. (2024). Bayesian Network-Based Multi-objective Estimation of Distribution Algorithm for Feature Selection Tailored to Regression Problems. In: Calvo, H., Martínez-Villaseñor, L., Ponce, H. (eds) Advances in Computational Intelligence. MICAI 2023. Lecture Notes in Computer Science, vol 14391. Springer, Cham. https://doi.org/10.1007/978-3-031-47765-2_23

DOI: https://doi.org/10.1007/978-3-031-47765-2_23

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-47764-5

Online ISBN: 978-3-031-47765-2

eBook Packages: Computer Science, Computer Science (R0)