Abstract

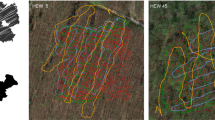

In this work, we introduce the CitrusFarm dataset, a comprehensive multimodal sensory dataset collected by a wheeled mobile robot operating in agricultural fields. The dataset offers stereo RGB images with depth information, as well as monochrome, near-infrared and thermal images, presenting diverse spectral responses crucial for agricultural research. Furthermore, it provides a range of navigational sensor data encompassing wheel odometry, LiDAR, inertial measurement unit (IMU), and GNSS with Real-Time Kinematic (RTK), which serves as the centimeter-level positioning ground truth. The dataset comprises seven sequences collected in three fields of citrus trees, featuring various tree species at different growth stages, distinctive planting patterns, and varying daylight conditions. It spans a total operation time of 1.7 h, covers a distance of 7.5 km, and constitutes 1.3 TB of data. We anticipate that this dataset can facilitate the development of autonomous robot systems operating in agricultural tree environments, especially for localization, mapping and crop monitoring tasks. Moreover, the rich sensing modalities offered in this dataset can also support research in a range of robotics and computer vision tasks, such as place recognition, scene understanding, object detection and segmentation, and multimodal learning. The dataset, together with related tools and resources, is publicly available at https://github.com/UCR-Robotics/Citrus-Farm-Dataset.
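To give a feel for the scale quoted in the abstract, the totals (1.7 h, 7.5 km, 1.3 TB, seven sequences) can be turned into rough averages. The following is only a back-of-the-envelope sketch: the function name `dataset_summary` is hypothetical, the decimal convention 1 TB = 10^12 bytes is an assumption not stated in the source, and the results are averages over the whole dataset rather than figures reported by the authors.

```python
# Totals quoted in the abstract.
TOTAL_HOURS = 1.7
TOTAL_KM = 7.5
TOTAL_TB = 1.3
NUM_SEQUENCES = 7

# Assumption (not stated in the source): decimal terabytes.
BYTES_PER_TB = 10**12


def dataset_summary():
    """Return rough dataset-wide averages derived from the abstract's totals."""
    seconds = TOTAL_HOURS * 3600
    return {
        # Mean travel speed of the robot over all sequences.
        "avg_speed_m_per_s": TOTAL_KM * 1000 / seconds,
        # Mean recording rate across all sensors combined.
        "avg_data_rate_mb_per_s": TOTAL_TB * BYTES_PER_TB / seconds / 1e6,
        # Mean duration and length of one sequence.
        "avg_minutes_per_sequence": TOTAL_HOURS * 60 / NUM_SEQUENCES,
        "avg_km_per_sequence": TOTAL_KM / NUM_SEQUENCES,
    }


if __name__ == "__main__":
    for name, value in dataset_summary().items():
        print(f"{name}: {value:.2f}")
```

Individual sequences may differ considerably from these means; the per-sequence durations, lengths and sizes are not given in the abstract.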

Acknowledgments

We gratefully acknowledge the support of NSF CMMI-2046270, USDA-NIFA 2021-67022-33453, ONR N00014-18-1-2252 and Univ. of California UC-MRPI M21PR3417. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the authors and do not necessarily reflect the views of the funding agencies.

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Teng, H., Wang, Y., Song, X., Karydis, K. (2023). Multimodal Dataset for Localization, Mapping and Crop Monitoring in Citrus Tree Farms. In: Bebis, G., et al. Advances in Visual Computing. ISVC 2023. Lecture Notes in Computer Science, vol 14361. Springer, Cham. https://doi.org/10.1007/978-3-031-47969-4_44

DOI: https://doi.org/10.1007/978-3-031-47969-4_44

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-47968-7

Online ISBN: 978-3-031-47969-4

eBook Packages: Computer Science, Computer Science (R0)