Abstract

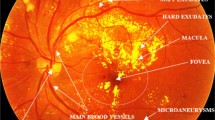

Using deep learning models pre-trained on ImageNet is the traditional solution for dealing with data scarcity in medical image classification. Nevertheless, the relevant literature indicates that this strategy may offer limited gains because of the high dissimilarity between domains. Currently, the paradigm of adapting domain-specialized foundation models is proving to be a promising alternative. However, how to perform such knowledge transfer, and the benefits and limitations it presents, are still under study. The CGI-HRDC challenge for Hypertensive Retinopathy diagnosis on fundus images offers an appealing opportunity to evaluate the transferability of a recently released vision-language foundation model of the retina, FLAIR [42]. In this work, we explore the potential of using FLAIR features as a starting point for fundus image classification, and we compare its performance against ImageNet initialization on two popular transfer learning methods: Linear Probing (LP) and Fine-Tuning (FT). Our empirical observations suggest that, in any case, the use of the traditional strategy provides performance gains. In contrast, direct transferability from the FLAIR model allows gains of \(\sim\)2.5%. When fine-tuning the whole network, the performance gap increases up to \(\sim\)4%. In this case, we show that avoiding feature deterioration via LP initialization of the classifier allows the best re-use of the rich pre-trained features. Although direct transferability using LP still offers limited performance, we believe that foundation models such as FLAIR will drive the evolution of deep-learning-based fundus image analysis.
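The two transfer strategies compared above, and the LP initialization of the classifier before fine-tuning, can be illustrated with a minimal toy sketch. Everything in it is an assumption made for illustration: a single `tanh` layer with random weights `W_pre` stands in for a pre-trained encoder, the data are synthetic, and the function names and hyperparameters are hypothetical. It is not the FLAIR model, its API, or the training setup used in this work.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def forward(x, W, w, b):
    """Toy encoder (one tanh layer) followed by a logistic classifier head."""
    feats = np.tanh(x @ W)
    probs = sigmoid(feats @ w + b)
    return feats, probs

def bce_loss(probs, y):
    """Mean binary cross-entropy."""
    eps = 1e-12
    return float(-np.mean(y * np.log(probs + eps)
                          + (1.0 - y) * np.log(1.0 - probs + eps)))

def train(x, y, W, w, b, lr, steps, update_encoder):
    """Plain gradient descent; the encoder stays frozen unless update_encoder is True."""
    W, w = W.copy(), w.copy()
    for _ in range(steps):
        feats, probs = forward(x, W, w, b)
        err = (probs - y) / len(y)              # gradient of the mean BCE w.r.t. the logits
        grad_w = feats.T @ err
        grad_b = err.sum()
        if update_encoder:
            # Back-propagate through the head and the tanh non-linearity.
            grad_pre = np.outer(err, w) * (1.0 - feats ** 2)
            W -= lr * (x.T @ grad_pre)
        w -= lr * grad_w
        b -= lr * grad_b
    return W, w, b

# Synthetic binary task (assumption: stands in for image features and labels).
rng = np.random.default_rng(0)
x = rng.normal(size=(200, 8))
y = (x @ rng.normal(size=8) > 0).astype(float)
W_pre = 0.5 * rng.normal(size=(8, 4))           # hypothetical "pre-trained" encoder weights

# Untrained head on top of the pre-trained encoder.
w0, b0 = np.zeros(4), 0.0
loss_init = bce_loss(forward(x, W_pre, w0, b0)[1], y)

# Linear Probing (LP): only the classifier head is trained.
W_lp, w_lp, b_lp = train(x, y, W_pre, w0, b0, lr=0.5, steps=300, update_encoder=False)
loss_lp = bce_loss(forward(x, W_lp, w_lp, b_lp)[1], y)

# Fine-Tuning (FT) with LP initialization: start from the probed head and
# update the whole network with a smaller learning rate.
W_ft, w_ft, b_ft = train(x, y, W_lp, w_lp, b_lp, lr=0.01, steps=200, update_encoder=True)
loss_ft = bce_loss(forward(x, W_ft, w_ft, b_ft)[1], y)

print(f"initial: {loss_init:.4f}  LP: {loss_lp:.4f}  LP then FT: {loss_ft:.4f}")
```

Starting the fine-tuning stage from the probed head, rather than from a random one, keeps the first gradient steps through the encoder small, which is the intuition behind avoiding feature deterioration. The toy example only shows the mechanics and reports training loss; it says nothing about the performance figures quoted above.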

References

Azizpour, H., Razavian, A.S., Sullivan, J., Maki, A., Carlsson, S.: Factors of transferability for a generic convnet representation. In: CVPR Workshop: DeepVision, June 2014

Balyen, L., Peto, T.: Promising artificial intelligence-machine learning-deep learning algorithms in ophthalmology. Asia-Pac. J. Ophthalmol. 8, 264–272 (2019)

Bellemo, V., et al.: Artificial intelligence using deep learning to screen for referable and vision-threatening diabetic retinopathy in Africa: a clinical validation study. Lancet Digit. Health 1, e35–e44 (2019)

Butoi, V.I., Ortiz, J.J.G., Ma, T., Sabuncu, M.R., Guttag, J., Dalca, A.V.: Universeg: universal medical image segmentation. In: ArXiv Preprint, April 2023. http://arxiv.org/abs/2304.06131

Castillo Benítez, V.E., et al.: Dataset from fundus images for the study of diabetic retinopathy. Data Brief 36, 107068 (2021)

Cen, L.P., et al.: Automatic detection of 39 fundus diseases and conditions in retinal photographs using deep neural networks. Nat. Commun. 12, 4828 (2021)

Chandrasekaran, R., Loganathan, B.: Retinopathy grading with deep learning and wavelet hyper-analytic activations. Vis. Comput. 2741–2756 (2023)

Cheng, D., Qin, Z., Jiang, Z., Zhang, S., Lao, Q., Li, K.: Sam on medical images: a comprehensive study on three prompt modes. In: ArXiv Preprint (2023)

Deng, J., Dong, W., Socher, R., Li, L.J., Li, K., Fei-Fei, L.: Imagenet: a large-scale hierarchical image database. In: Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR), pp. 1–8 (2009)

Deng, R., et al.: Segment anything model (SAM) for digital pathology: assess zero-shot segmentation on whole slide imaging. In: ArXiv Preprint (2023)

Erhan, D., Manzagol, P.A., Bengio, Y., Bengio, S., Vincent, P.: The difficulty of training deep architectures and the effect of unsupervised pre-training. In: Proceedings of the International Conference on Artificial Intelligence and Statistics (PMLR), pp. 153–160 (2009)

Giancardo, L., et al.: Exudate-based diabetic macular edema detection in fundus images using publicly available datasets. Med. Image Anal. 16, 216–226 (2012)

Hassan, T., Akram, M.U., Masood, M.F., Yasin, U.: Deep structure tensor graph search framework for automated extraction and characterization of retinal layers and fluid pathology in retinal SD-OCT scans. Comput. Biol. Med. 105, 112–124 (2019)

Hassan, T., Akram, M.U., Werghi, N., Nazir, M.N.: RAG-FW: a hybrid convolutional framework for the automated extraction of retinal lesions and lesion-influenced grading of human retinal pathology. IEEE J. Biomed. Health Inform. 25(1), 108–120 (2021)

He, K., Zhang, X., Ren, S., Sun, J.: Deep residual learning for image recognition. In: Proceedings of the Conference on Computer Vision and Pattern Recognition (CVPR), pp. 1–12, December 2016

Hoover, A.: Locating blood vessels in retinal images by piecewise threshold probing of a matched filter response. IEEE Trans. Med. Imaging 19, 203–210 (2000)

Hoover, A., Goldbaum, M.: Locating the optic nerve in a retinal image using the fuzzy convergence of the blood vessels. IEEE Trans. Med. Imaging 22, 951–958 (2003)

Huang, J.H., et al.: Deepopht: medical report generation for retinal images via deep models and visual explanation. In: Proceedings of the Winter Conference on Applications of Computer Vision (WACV), pp. 2442–2452 (2021)

Imran, A., Li, J., Pei, Y., Akhtar, F., Mahmood, T., Zhang, L.: Fundus image-based cataract classification using a hybrid convolutional and recurrent neural network. Vis. Comput. (2020)

Jia, C., et al.: Scaling up visual and vision-language representation learning with noisy text supervision. In: International Conference on Machine Learning, pp. 4904–4916 (2021)

Jin, K., et al.: FIVES: a fundus image dataset for artificial intelligence based vessel segmentation. Sci. Data 9, 475 (2022)

Kanavati, F., Tsuneki, M.: Partial transfusion: on the expressive influence of trainable batch norm parameters for transfer learning. In: MIDL (2021)

Kirillov, A., et al.: Segment anything. In: ArXiv Preprint (2023)

Kovalyk, O., et al.: PAPILA: dataset with fundus images and clinical data of both eyes of the same patient for glaucoma assessment. Sci. Data 9, 291 (2022)

Kumar, A., Raghunathan, A., Jones, R.M., Ma, T., Liang, P.: Fine-tuning can distort pretrained features and underperform out-of-distribution. In: International Conference on Learning Representations (ICLR) (2022)

Kumar, J.R., et al.: Chaksu: a glaucoma specific fundus image database. Sci. Data 10 (2023)

Li, L., Xu, M., Wang, X., Jiang, L., Liu, H.: Attention based glaucoma detection: a large-scale database and CNN model. In: Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR), pp. 1–10 (2019)

Li, T., Gao, Y., Wang, K., Guo, S., Liu, H., Kang, H.: Diagnostic assessment of deep learning algorithms for diabetic retinopathy screening. Inf. Sci. 501, 511–522 (2019)

Lin, L., et al.: The SUSTech-SYSU dataset for automated exudate detection and diabetic retinopathy grading. Sci. Data 7 (2020)

Liu, J., et al.: Clip-driven universal model for organ segmentation and tumor detection. In: ArXiv Preprint, January 2023. http://arxiv.org/abs/2301.00785

Liu, R., et al.: TMM-Nets: transferred multi- to mono-modal generation for lupus retinopathy diagnosis. IEEE Trans. Med. Imaging 42, 1083–1094 (2023)

Liu, R., et al.: Deepdrid: diabetic retinopathy-grading and image quality estimation challenge. Patterns 3 (2022)

Lu, M.Y., et al.: Visual language pretrained multiple instance zero-shot transfer for histopathology images. In: Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR), October 2023

Nakayama, L.F., et al.: A Brazilian multilabel ophthalmological dataset (BRSET). In: PhysioNet (2023)

Neyshabur, B., Sedghi, H., Zhang, C.: What is being transferred in transfer learning? In: Advances in Neural Information Processing Systems (NeurIPS), August 2020

Pachade, S., et al.: Retinal fundus multi-disease image dataset (RFMiD): a dataset for multi-disease detection research. Data 6, 1–14 (2021)

Pires, R., Jelinek, H.F., Wainer, J., Valle, E., Rocha, A.: Advancing bag-of-visual-words representations for lesion classification in retinal images. PLoS ONE 9 (2014)

Porwal, P., et al.: IDRiD: diabetic retinopathy – segmentation and grading challenge. Med. Image Anal. 59, 101561 (2020)

Radford, A., et al.: Learning transferable visual models from natural language supervision. In: ArXiv Preprint (2021)

Raghu, M., Zhang, C., Kleinberg, J., Bengio, S.: Transfusion: understanding transfer learning for medical imaging. In: Advances in Neural Information Processing Systems (NeurIPS) (2019)

Salam, A.A., Mahadevappa, M., Das, A., Nair, M.S.: RDD-Net: retinal disease diagnosis network: a computer-aided diagnosis technique using graph learning and feature descriptors. Vis. Comput. (2022)

Silva-Rodriguez, J., Chakor, H., Riadh, K., Dolz, J., Ayed, I.B.: A foundation language-image model of the retina (FLAIR): encoding expert knowledge in text supervision. In: ArXiv Preprint (2023)

Silva-Rodriguez, J., Dolz, J., Ayed, I.B.: Transductive few-shot adapters for medical image segmentation. In: ArXiv Preprint (2023)

Srinivasan, V., Strodthoff, N., Ma, J., Binder, A., Müller, K.R., Samek, W.: To pretrain or not? A systematic analysis of the benefits of pretraining in diabetic retinopathy. PLoS ONE 17 (2022)

Takahashi, H., Tampo, H., Arai, Y., Inoue, Y., Kawashima, H.: Applying artificial intelligence to disease staging: deep learning for improved staging of diabetic retinopathy. PLoS ONE 12 (2017)

de Vente, C., et al.: AIROGS: artificial intelligence for robust glaucoma screening challenge. In: ArXiv Preprint (2023)

Wang, Z., Wu, Z., Agarwal, D., Sun, J.: Medclip: contrastive learning from unpaired medical images and text. In: Empirical Methods in Natural Language Processing (EMNLP), October 2022

Acknowledgments

The work of J. Silva-Rodríguez was partially funded by the Fonds de recherche du Québec (FRQ) under the Postdoctoral Merit Scholarship for Foreign Students (PBEEE).

Copyright information

© 2024 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Silva-Rodriguez, J. et al. (2024). Exploring the Transferability of a Foundation Model for Fundus Images: Application to Hypertensive Retinopathy. In: Sheng, B., Bi, L., Kim, J., Magnenat-Thalmann, N., Thalmann, D. (eds) Advances in Computer Graphics. CGI 2023. Lecture Notes in Computer Science, vol 14497. Springer, Cham. https://doi.org/10.1007/978-3-031-50075-6_33

DOI: https://doi.org/10.1007/978-3-031-50075-6_33

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-50074-9

Online ISBN: 978-3-031-50075-6

eBook Packages: Computer Science, Computer Science (R0)