Abstract

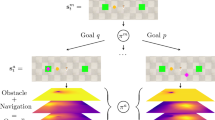

Explainable reinforcement learning has evolved rapidly in recent years because transparency of a model's decision-making process is crucial in safety-critical domains. Differentiable decision trees have been applied to this field because they combine good performance with interpretability. However, the number of parameters in each branch node of a differentiable decision tree scales with the state dimension. As the feature dimension of the state grows, the number of state features that each branch node considers in its decision grows linearly, which makes the tree harder for humans to understand. This paper proposes an entropy-based differentiable decision tree that, during training, restricts each branch node to use as few features as possible for its prediction. After training is completed, the parameters that have little impact on the output of a branch node are masked out, which significantly reduces the decision complexity of each branch node. Experiments in multiple environments demonstrate the significant interpretability advantage of the proposed approach.
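The abstract does not give the exact formulation, so the following is only a minimal sketch of the idea under stated assumptions: each branch node is taken to be a sigmoid over a linear combination of state features, the per-node feature distribution is assumed to be the normalized absolute weights, and the pruning threshold is an illustrative value. The function names (`feature_distribution`, `node_entropy`, `prune_node`) are hypothetical and are not taken from the paper.

```python
import numpy as np

def feature_distribution(w, eps=1e-12):
    # Assumption: a feature's importance in a node is its share of the total |weight|.
    a = np.abs(w)
    return a / (a.sum() + eps)

def node_entropy(w, eps=1e-12):
    # Shannon entropy of the feature distribution. Adding this term to the
    # training loss would push a node toward relying on only a few features.
    alpha = feature_distribution(w, eps)
    return float(-(alpha * np.log(alpha + eps)).sum())

def branch_probability(x, w, b):
    # Soft split: probability of routing state x to one child of the node.
    return 1.0 / (1.0 + np.exp(-(np.dot(w, x) + b)))

def prune_node(w, threshold=0.1):
    # After training, mask out weights whose share falls below the
    # (illustrative) threshold, so the node reads fewer features.
    alpha = feature_distribution(w)
    mask = alpha >= threshold
    return w * mask, mask

# Hypothetical trained node over a 4-dimensional state.
w = np.array([2.0, -0.05, 0.01, 1.5])
b = -0.3
x = np.array([0.4, 1.0, -2.0, 0.1])

w_pruned, mask = prune_node(w)
p_full = branch_probability(x, w, b)
p_pruned = branch_probability(x, w_pruned, b)
print(mask, round(p_full, 3), round(p_pruned, 3))
```

In this toy node only two of the four features survive pruning, while the routing probability changes only slightly, which is the kind of trade-off the abstract describes.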

Copyright information

© 2024 IFIP International Federation for Information Processing

About this paper

Cite this paper

Liu, Y., Zhang, J., Li, Y. (2024). Entropy-Based Logic Explanations of Differentiable Decision Tree. In: Shi, Z., Torresen, J., Yang, S. (eds) Intelligent Information Processing XII. IIP 2024. IFIP Advances in Information and Communication Technology, vol 703. Springer, Cham. https://doi.org/10.1007/978-3-031-57808-3_6

DOI: https://doi.org/10.1007/978-3-031-57808-3_6

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-57807-6

Online ISBN: 978-3-031-57808-3

eBook Packages: Computer Science, Computer Science (R0)