Abstract

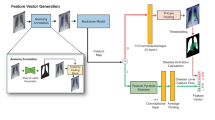

This study investigates the use of chest X-ray (CXR) data from COVID-19 patients to classify pneumonia severity, with two aims: improving prediction accuracy on COVID-19 datasets and achieving robust classification across diverse pneumonia cases. A novel CNN-Transformer hybrid network is developed that uses position-aware features and Region Shared MLPs to integrate information from the lung regions. This design improves adaptability to different spatial resolutions and severity scores, and addresses the subjectivity of severity assessment that arises from unclear clinical measurements. The model shows significant improvement in pneumonia severity classification on both COVID-19 and heterogeneous pneumonia datasets. Its adaptable structure can be integrated with various backbone models, supporting continued performance improvement and potential clinical applications, particularly in intensive care units.

Acknowledgments

This work was supported by the Institute of Information & communications Technology Planning & Evaluation (IITP) grant funded by the Korea government (MSIT) [No. 2022-0-00641, XVoice: Multi-Modal Voice Meta Learning], [No. RS-2022-00155915, Artificial Intelligence Convergence Innovation Human Resources Development (Inha University)], and by the National Research Foundation of Korea (NRF) grant funded by the Korea government (MSIT) (No. NRF-2022R1F1A1071574).

Ethics declarations

Disclosure of Interests

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as potential conflicts of interest.

Copyright information

© 2024 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Lee, J.B., Kim, J.S., Lee, H.G. (2024). COVID19 to Pneumonia: Multi Region Lung Severity Classification Using CNN Transformer Position-Aware Feature Encoding Network. In: Linguraru, M.G., et al. Medical Image Computing and Computer Assisted Intervention – MICCAI 2024. MICCAI 2024. Lecture Notes in Computer Science, vol 15001. Springer, Cham. https://doi.org/10.1007/978-3-031-72378-0_44

DOI: https://doi.org/10.1007/978-3-031-72378-0_44

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-72377-3

Online ISBN: 978-3-031-72378-0

eBook Packages: Computer Science, Computer Science (R0)