Abstract

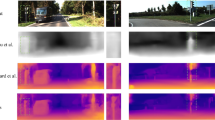

Learning a self-supervised Monocular Depth Estimation (MDE) model that generalizes well remains challenging. Although adversarial augmentation has improved generalization in supervised learning, naively incorporating it into self-supervised MDE models can cause over-regularization and severe performance degradation. In this paper, we conduct a qualitative analysis and identify the main causes: (i) the inherent sensitivity of the UNet-like depth network and (ii) a dual optimization conflict caused by over-regularization. To tackle these issues, we propose a general adversarial training framework, named Stabilized Conflict-optimization Adversarial Training (SCAT), which integrates adversarial data augmentation into self-supervised MDE methods to balance stability and generalization. Specifically, we devise a scaling depth network that tunes the coefficients of the long skip connections and stabilizes the training process. We then propose a conflict gradient surgery strategy, which progressively integrates the adversarial gradient and optimizes the model toward a conflict-free direction. Extensive experiments on five benchmarks demonstrate that SCAT achieves state-of-the-art performance and significantly improves the generalization capability of existing self-supervised MDE methods.
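To make the idea of a conflict-free update direction concrete, the following is a minimal sketch of a projection-based gradient surgery step in the style of PCGrad. It is an illustration under stated assumptions, not the SCAT procedure itself: the abstract does not give the exact rule, so the function name `resolve_conflict`, the flat-list gradient representation, and the `weight` parameter (a hypothetical ramp standing in for "progressively integrates") are all assumptions.

```python
from typing import List

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    """Inner product of two equally sized gradient vectors."""
    return sum(x * y for x, y in zip(a, b))


def resolve_conflict(g_clean: Vector, g_adv: Vector, weight: float = 1.0) -> Vector:
    """Combine a clean gradient with a weighted adversarial gradient.

    Assumption: two gradients "conflict" when their inner product is
    negative. In that case the component of g_adv that points against
    g_clean is projected out before the weighted sum is formed.
    `weight` is a hypothetical value in [0, 1] that a training loop
    could increase over time.
    """
    inner = dot(g_adv, g_clean)
    norm_sq = dot(g_clean, g_clean)
    if inner < 0.0 and norm_sq > 0.0:
        scale = inner / norm_sq
        # Remove the part of g_adv that opposes g_clean.
        g_adv = [a - scale * c for a, c in zip(g_adv, g_clean)]
    return [c + weight * a for c, a in zip(g_clean, g_adv)]


# Toy example: the adversarial gradient opposes the clean one along axis 0.
combined = resolve_conflict([1.0, 0.0], [-1.0, 1.0], weight=0.5)
print(combined)  # [1.0, 0.5]
```

In the toy example the opposing component along the first axis is removed, so the combined update never moves against the clean gradient. When the two gradients already agree, the function reduces to a plain weighted sum.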

Acknowledgement

The research was supported by the National Natural Science Foundation of China (U23B2009).

Copyright information

© 2025 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Yao, Y. et al. (2025). Improving Domain Generalization in Self-supervised Monocular Depth Estimation via Stabilized Adversarial Training. In: Leonardis, A., Ricci, E., Roth, S., Russakovsky, O., Sattler, T., Varol, G. (eds) Computer Vision – ECCV 2024. ECCV 2024. Lecture Notes in Computer Science, vol 15082. Springer, Cham. https://doi.org/10.1007/978-3-031-72691-0_11

DOI: https://doi.org/10.1007/978-3-031-72691-0_11

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-72690-3

Online ISBN: 978-3-031-72691-0

eBook Packages: Computer Science, Computer Science (R0)