Abstract

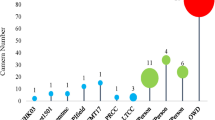

Wildlife re-identification (ReID) uses computer vision to recognize specific individual wild animals across different scenes, and it matters for wildlife conservation, ecological research, and environmental monitoring. Existing wildlife ReID methods are predominantly tailored to particular species, which limits their applicability. Although some approaches borrow extensively studied person ReID techniques, they struggle with the unique challenges posed by wildlife. In this paper, we therefore present a unified, multi-species framework for wildlife ReID. High-frequency information consistently represents the distinguishing features of various species and significantly aids in identifying contours and details such as fur texture, so we propose the Adaptive High-Frequency Transformer, a model designed to enhance the learning of high-frequency information. To mitigate the inevitable high-frequency interference in wilderness environments, we introduce an object-aware high-frequency selection strategy that adaptively captures the more valuable high-frequency components. Notably, we unify the experimental settings of multiple wildlife datasets for ReID and achieve superior performance over state-of-the-art ReID methods. In domain generalization scenarios, our approach generalizes robustly to unknown species. Code is available at https://github.com/JigglypuffStitch/AdaFreq.git.
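To make the notion of "high-frequency information" concrete, the following is a minimal sketch of a generic Fourier-domain high-pass filter. It is not the Adaptive High-Frequency Transformer or the object-aware selection strategy described above; the function name `high_frequency_component` and the `cutoff` parameter are hypothetical and chosen only for illustration. It assumes a single-channel image stored as a 2D NumPy array.

```python
import numpy as np


def high_frequency_component(image: np.ndarray, cutoff: float = 0.1) -> np.ndarray:
    """Return the high-frequency part of a 2D grayscale image.

    `cutoff` (hypothetical parameter) is the radius of the suppressed
    low-frequency disc, as a fraction of the smaller image side.
    """
    if image.ndim != 2:
        raise ValueError("expected a 2D grayscale image")
    h, w = image.shape

    # Move the zero-frequency (DC) term to the centre of the spectrum.
    spectrum = np.fft.fftshift(np.fft.fft2(image))

    # Distance of every frequency bin from the centre of the spectrum.
    yy, xx = np.ogrid[:h, :w]
    radius = np.sqrt((yy - h // 2) ** 2 + (xx - w // 2) ** 2)

    # Keep only bins outside the low-frequency disc.
    mask = radius > cutoff * min(h, w)

    # Back to the spatial domain; the imaginary part is numerical noise.
    return np.real(np.fft.ifft2(np.fft.ifftshift(spectrum * mask)))


if __name__ == "__main__":
    # A flat image has no high-frequency content, while a checkerboard
    # (a crude stand-in for fine texture) keeps everything but its mean.
    flat = np.full((32, 32), 5.0)
    checker = (np.indices((32, 32)).sum(axis=0) % 2).astype(float)
    print(np.abs(high_frequency_component(flat)).max())
    print(np.abs(high_frequency_component(checker)).max())
```

A fixed filter like this passes background texture (grass, foliage, water ripples) just as readily as fur patterns, which illustrates why the abstract argues for selecting high-frequency components adaptively rather than keeping all of them.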

C. Li and S. Chen contributed equally.

Acknowledgments

This work is supported by the National Natural Science Foundation of China under Grants 62176188 and 62361166629 and by the Special Fund of Hubei Luojia Laboratory (220100015). The numerical calculations in this paper were performed on the supercomputing system in the Supercomputing Center of Wuhan University.

Copyright information

© 2025 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Li, C., Chen, S., Ye, M. (2025). Adaptive High-Frequency Transformer for Diverse Wildlife Re-identification. In: Leonardis, A., Ricci, E., Roth, S., Russakovsky, O., Sattler, T., Varol, G. (eds) Computer Vision – ECCV 2024. ECCV 2024. Lecture Notes in Computer Science, vol 15102. Springer, Cham. https://doi.org/10.1007/978-3-031-72784-9_17

DOI: https://doi.org/10.1007/978-3-031-72784-9_17

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-72783-2

Online ISBN: 978-3-031-72784-9

eBook Packages: Computer Science, Computer Science (R0)