Abstract

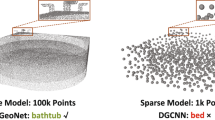

Despite significant advances in pre-training methods for point cloud understanding, directly capturing intricate shape information from irregular point clouds without relying on external data remains challenging. To address this problem, we propose GPSFormer, a Global Perception and Local Structure Fitting-based Transformer that learns detailed shape information from point clouds. The core of GPSFormer consists of the Global Perception Module (GPM) and the Local Structure Fitting Convolution (LSFConv). Specifically, GPM uses Adaptive Deformable Graph Convolution (ADGConv) to identify short-range dependencies among similar features in the feature space and employs Multi-Head Attention (MHA) to learn long-range dependencies across all positions within the feature space, enabling flexible learning of contextual representations. Inspired by the Taylor series, we design LSFConv, which learns both low-order fundamental and high-order refinement information from explicitly encoded local geometric structures. With GPM and LSFConv as its fundamental components, GPSFormer captures both the global and local structures of point clouds. Extensive experiments validate GPSFormer’s effectiveness on three point cloud tasks: shape classification, part segmentation, and few-shot learning. The code of GPSFormer is available at https://github.com/changshuowang/GPSFormer.
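To make the short-range versus long-range distinction in the abstract concrete, the following is a minimal sketch and not the authors' implementation. It assumes a plain k-nearest-neighbour search in feature space with max-pooled feature differences as a stand-in for ADGConv, and a single-head, parameter-free scaled dot-product attention as a stand-in for MHA. The function names `knn_in_feature_space`, `local_aggregate`, and `self_attention` are hypothetical and do not come from the GPSFormer code base.

```python
import math

def knn_in_feature_space(feats, k):
    """Indices of each point's k nearest neighbours, measured between
    feature vectors rather than xyz coordinates."""
    neighbours = []
    for i, fi in enumerate(feats):
        dists = sorted((math.dist(fi, fj), j)
                       for j, fj in enumerate(feats) if j != i)
        neighbours.append([j for _, j in dists[:k]])
    return neighbours

def local_aggregate(feats, k):
    """Short-range step (assumed stand-in for ADGConv): max-pool the
    differences f_j - f_i over each point's feature-space neighbours."""
    nbrs = knn_in_feature_space(feats, k)
    out = []
    for i, fi in enumerate(feats):
        diffs = [[b - a for a, b in zip(fi, feats[j])] for j in nbrs[i]]
        out.append([max(channel) for channel in zip(*diffs)])
    return out

def self_attention(feats):
    """Long-range step (assumed stand-in for MHA): scaled dot-product
    attention over all points, with Q = K = V = feats and no learned weights."""
    d = len(feats[0])
    out = []
    for q in feats:
        scores = [sum(a * b for a, b in zip(q, key)) / math.sqrt(d)
                  for key in feats]
        top = max(scores)  # subtract the max for a numerically stable softmax
        exps = [math.exp(s - top) for s in scores]
        total = sum(exps)
        weights = [e / total for e in exps]
        out.append([sum(w * v[c] for w, v in zip(weights, feats))
                    for c in range(d)])
    return out

# Toy features forming two tight clusters in a 2-D feature space.
feats = [[0.0, 0.0], [0.1, 0.0], [5.0, 5.0], [5.1, 5.0]]
print(knn_in_feature_space(feats, 1))  # neighbours stay within each cluster
print(local_aggregate(feats, 1))
print(self_attention(feats))
```

In this toy setting the neighbour search only ever links points inside the same cluster, whereas every attention output is a weighted average over all four points, which is the contrast between short-range and long-range dependencies that the abstract describes.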

Acknowledgment

This work was supported in part by NTU-DESAY SV Research Program under Grant 2018-0980; and in part by the Ministry of Education, Singapore, under its Academic Research Fund Tier 1, under Grant RG78/21. The computational work for this article was partially performed on resources of the National Supercomputing Centre, Singapore (https://www.nscc.sg).

Copyright information

© 2025 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Wang, C. et al. (2025). GPSFormer: A Global Perception and Local Structure Fitting-Based Transformer for Point Cloud Understanding. In: Leonardis, A., Ricci, E., Roth, S., Russakovsky, O., Sattler, T., Varol, G. (eds) Computer Vision – ECCV 2024. ECCV 2024. Lecture Notes in Computer Science, vol 15066. Springer, Cham. https://doi.org/10.1007/978-3-031-73242-3_5

DOI: https://doi.org/10.1007/978-3-031-73242-3_5

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-73241-6

Online ISBN: 978-3-031-73242-3

eBook Packages: Computer Science (R0)