Abstract

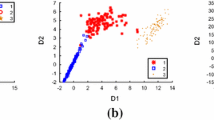

Dimensionality reduction is a fundamental and still active research topic in pattern recognition and machine learning. The Gaussian Mixture Model (GMM), meanwhile, is widely used in applications such as clustering and classification. For high-dimensional data, previous work usually performs dimensionality reduction first and then feeds the reduced features to another model, e.g., a GMM. There have been very few investigations of how dimensionality reduction could be conducted interactively and systematically together with the GMM. In this paper, we study how unsupervised dimensionality reduction can be performed jointly with a GMM, and whether such joint learning improves on the traditional unsupervised approach. Specifically, we employ the Mixture of Factor Analyzers under the assumption that a common factor loading exists for all components. This setting optimizes the dimensionality reduction together with the parameters of the GMM. We compare the joint learning approach with the separate dimensionality reduction plus GMM method on both synthetic and real data sets. Experimental results show that joint learning significantly outperforms the comparison method in terms of three criteria for supervised learning.
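
As a rough illustration of what a common factor loading means, the model can be written in the form often used for mixtures of factor analyzers with a shared loading matrix. The abstract does not give the paper's own formulation, so the symbols below ($A$, $D$, $\pi_i$, $\xi_i$, $\Omega_i$, $p$, $q$, $g$) are assumed notation, and this is a sketch rather than the authors' exact model.

```latex
% Assumed notation (not taken from the abstract):
%   x in R^p : observed data,  u in R^q : latent factors, with q < p
%   A        : p x q factor loading matrix shared by all g components
%   D        : p x p diagonal noise covariance
%   pi_i, xi_i, Omega_i : weight, mean, covariance of component i in latent space
\begin{align}
  x &= A u + e, \qquad e \sim \mathcal{N}(0, D), \\
  u &\sim \sum_{i=1}^{g} \pi_i \, \mathcal{N}(\xi_i, \Omega_i), \\
  p(x) &= \sum_{i=1}^{g} \pi_i \,
          \mathcal{N}\!\left(x \mid A \xi_i,\; A \Omega_i A^{\top} + D\right).
\end{align}
```

Read this way, $A$ acts as the dimensionality reduction and $(\pi_i, \xi_i, \Omega_i)$ define a GMM in the $q$-dimensional latent space, so maximizing the likelihood of $p(x)$ fits both at once instead of fixing the projection beforehand.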




Copyright information

© 2014 Springer International Publishing Switzerland

About this paper

Cite this paper

Yang, X., Huang, K., Zhang, R. (2014). Unsupervised Dimensionality Reduction for Gaussian Mixture Model. In: Loo, C.K., Yap, K.S., Wong, K.W., Teoh, A., Huang, K. (eds) Neural Information Processing. ICONIP 2014. Lecture Notes in Computer Science, vol 8835. Springer, Cham. https://doi.org/10.1007/978-3-319-12640-1_11


DOI: https://doi.org/10.1007/978-3-319-12640-1_11

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-12639-5

Online ISBN: 978-3-319-12640-1

eBook Packages: Computer Science (R0)