Abstract

To support the construction of multiple choice questions (MCQs), recent efforts have sought to generate MCQs with controlled difficulty from OWL ontologies. Preliminary evaluation suggests that automatically generated questions are not yet field ready and highlights the need for further evaluation. In this study, we present an extensive evaluation of automatically generated MCQs. We found that even questions that adhere to guidelines are subject to the clustering of distractors. Hence, the clustering of distractors must be recognised, as it could affect the prediction of difficulty.

Notes

- 1.

- 2. Downloaded from: http://nlp.stanford.edu/software/tagger.shtml.

- 3. This is a grammatical correction that is manually added to the question.

Acknowledgments

The authors would like to thank Tahani Alsubait for sharing the MCQ generator code.

Appendix

A Question Categories

B Example Questions

Syntactic Clues

Examples of the form (SK)

-

Stem: State Transition Network ...:

Examples of the form (SD)

-

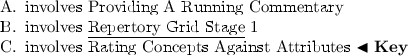

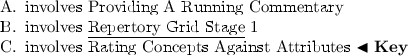

Stem: Repertory Grid Stage 2 ...

-

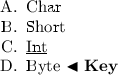

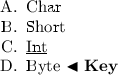

Stem: Which of the following terms can be defined by “a Java keyword used to declare a variable that holds an 8 bit signed integer”?

Examples of the form (ANT)

-

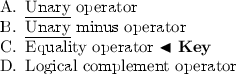

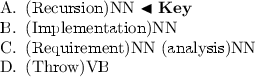

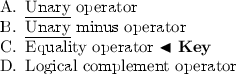

Stem: Which of the following is [a] (see Note 3) Binary Operator?

Syntactic Consistency

-

Stem: What is [a] Book?

-

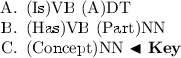

Stem: Which of the following is [a] Java Language Feature?

Note that in the previous example, although (D) is inconsistent with the key, it is indeed a Java language feature.

-

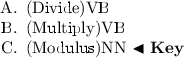

Stem: Which of the following terms can be defined by “A binary remainder operator that produces a pure value that is the remainder from an implied division of its operands”?

In this example, both distractors (A) and (B) are inconsistent with the key but they are both plausible. By investigating the ontology, we found that this issue resulted from the inconsistent naming of concepts.

Semantic Homogeneity

-

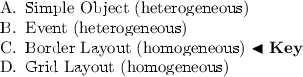

Stem: Which of the following terms can be defined by “A layout manager that allows subcomponents to be added in up to five places specified by constants NORTH, SOUTH, EAST, WEST and CENTER”?

The stem of the previous question makes it clear that the expected answer is a layout manager. Distractors (A) and (B) are heterogeneous with respect to the type of the key, while option (D) is homogeneous with it.
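The homogeneity check described above can be sketched as a comparison of each distractor's type with the type of the key. This is a minimal illustration only: the terms and type assignments in `TERM_TYPE` are invented for the example (the actual options appear only in the figure), and `homogeneity` is a hypothetical helper, not part of the MCQ generator.

```python
# Hypothetical term -> type assignments, invented for illustration;
# they are not taken from the ontology used in the paper.
TERM_TYPE = {
    "BorderLayout": "Layout Manager",
    "FlowLayout": "Layout Manager",
    "JButton": "Component",
    "ActionEvent": "Event",
}


def homogeneity(key, distractors, term_type=TERM_TYPE):
    """Map each distractor to True if it has the same type as the key."""
    key_type = term_type[key]
    return {d: term_type.get(d) == key_type for d in distractors}


# Two distractors differ in type from the key; one shares its type.
report = homogeneity("BorderLayout", ["JButton", "ActionEvent", "FlowLayout"])
print(report)
```

A distractor flagged `False` can be ruled out from the stem alone, because the stem already reveals the type of the expected answer.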

Clustered Distractors

-

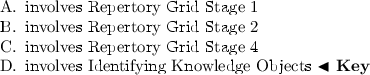

Stem: Which of the following terms can be defined by “A stage in the software development process where customer needs are translated into how it could be implemented”?

Distractors (A) and (B) are clustered: once a student knows that the answer is not a kind of testing, all types of testing can be eliminated at once.
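One way to make this notion of clustering concrete is to group distractors that share an ancestor which is not also an ancestor of the key. The sketch below assumes a toy class hierarchy stored as a child-to-parent dictionary; the class names in `PARENT` and the function `clustered_distractors` are hypothetical and are not taken from the ontology or the generator evaluated in the paper.

```python
from collections import defaultdict

# Hypothetical toy hierarchy (child -> parent), invented for illustration.
PARENT = {
    "Unit Testing": "Testing",
    "Integration Testing": "Testing",
    "Testing": "Development Stage",
    "Design": "Development Stage",
    "Maintenance": "Development Stage",
}


def ancestors(term):
    """Return the ancestors of a term, nearest first."""
    chain = []
    while term in PARENT:
        term = PARENT[term]
        chain.append(term)
    return chain


def clustered_distractors(key, distractors):
    """Group distractors by any ancestor they share that the key lacks."""
    key_ancestors = set(ancestors(key))
    groups = defaultdict(list)
    for d in distractors:
        for a in ancestors(d):
            if a not in key_ancestors:
                groups[a].append(d)
    # Only groups with two or more distractors count as clusters.
    return {a: ds for a, ds in groups.items() if len(ds) > 1}


clusters = clustered_distractors(
    "Design", ["Unit Testing", "Integration Testing", "Maintenance"]
)
print(clusters)
```

In this toy case the two testing-related distractors fall into one cluster, so rejecting the shared ancestor removes both at once and the question effectively has fewer working distractors than it appears to have.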

-

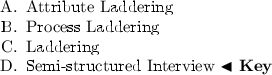

Stem: Protocol Analysis Technique ...

-

Stem: Which of the following is produces some Protocol?

Copyright information

© 2017 Springer International Publishing AG

About this paper

Cite this paper

Kurdi, G., Parsia, B., Sattler, U. (2017). An Experimental Evaluation of Automatically Generated Multiple Choice Questions from Ontologies. In: Dragoni, M., Poveda-Villalón, M., Jimenez-Ruiz, E. (eds) OWL: Experiences and Directions – Reasoner Evaluation. OWLED ORE 2016. Lecture Notes in Computer Science, vol 10161. Springer, Cham. https://doi.org/10.1007/978-3-319-54627-8_3

DOI: https://doi.org/10.1007/978-3-319-54627-8_3

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-54626-1

Online ISBN: 978-3-319-54627-8

eBook Packages: Computer Science, Computer Science (R0)