Abstract

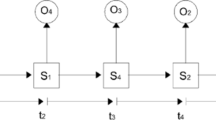

The analysis of surgical motion has received growing interest with the development of devices that allow its automatic capture. In this context, advanced surgical training systems make automated assessment of surgical trainees possible. Automatic and quantitative evaluation of surgical skills is an important step in improving surgical patient care. In this paper, we present a novel approach for the discovery and ranking of discriminative and interpretable patterns of surgical practice from recordings of surgical motions. A pattern is defined as a series of actions or events in the kinematic data that together are distinctive of a specific gesture or skill level. Our approach is based on the discretization of the continuous kinematic data into strings, which are then processed to form bags of words. This step allows us to apply a discriminative pattern mining technique based on word occurrence frequency. We show that the patterns identified by the proposed technique can be used to accurately classify individual gestures and skill levels. We also show how the patterns provide detailed feedback on the assessment of trainee skill. Experimental evaluation on the publicly available JIGSAWS dataset shows that the proposed approach successfully classifies gestures and skill levels.
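To make the pipeline in the abstract more concrete, the following is a minimal sketch of one way the discretization, bag-of-words, and frequency-based weighting steps could look for a single kinematic channel. It assumes a SAX-style discretization (z-normalization, piecewise aggregation, and Gaussian breakpoints) and a log-scaled tf-idf weighting; the abstract itself does not specify these details. The function names, the alphabet size of 4, the window length of 8, and the toy input are all hypothetical choices made for illustration, not the authors' settings.

```python
import math
from collections import Counter
from statistics import mean, pstdev

# Assumption: alphabet of size 4, using the breakpoints that split a
# standard normal distribution into 4 equiprobable regions.
BREAKPOINTS = (-0.6745, 0.0, 0.6745)
ALPHABET = "abcd"


def znorm(values):
    """Z-normalize a sequence; a constant sequence maps to all zeros."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


def paa(values, n_segments):
    """Piecewise aggregate approximation: mean of n_segments chunks.

    Assumes len(values) >= n_segments so that no chunk is empty.
    """
    n = len(values)
    return [mean(values[i * n // n_segments:(i + 1) * n // n_segments])
            for i in range(n_segments)]


def to_word(window, n_segments):
    """Turn one window of samples into a short string (a 'word')."""
    symbols = []
    for v in paa(znorm(window), n_segments):
        idx = sum(v > b for b in BREAKPOINTS)  # number of breakpoints below v
        symbols.append(ALPHABET[idx])
    return "".join(symbols)


def bag_of_words(series, window=8, n_segments=4):
    """Slide a window over the series and count the resulting words."""
    return Counter(to_word(series[i:i + window], n_segments)
                   for i in range(len(series) - window + 1))


def tfidf(class_bags):
    """Weight each word per class; class_bags maps label -> Counter.

    Assumption: log-scaled term frequency times log inverse document
    frequency, where each class bag counts as one 'document'.
    """
    n_classes = len(class_bags)
    df = Counter()
    for bag in class_bags.values():
        df.update(bag.keys())
    return {label: {w: (1 + math.log(c)) * math.log(n_classes / df[w])
                    for w, c in bag.items()}
            for label, bag in class_bags.items()}


# Toy usage: two synthetic "classes", a rising and a falling ramp.
bags = {"up": bag_of_words(list(range(10))),
        "down": bag_of_words(list(range(10, 0, -1)))}
weights = tfidf(bags)
```

In this sketch, a word that occurs in every class receives weight zero, so only words that separate the classes keep a positive score. Ranking words by weight within a class is one simple way to surface candidate discriminative patterns, and each word can be mapped back to the windows that produced it for interpretation.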

Acknowledgement

This work was supported by the Australian Research Council under award DE170100037. This material is based upon work supported by the Air Force Office of Scientific Research, Asian Office of Aerospace Research and Development (AOARD) under award number FA2386-16-1-4023.

Copyright information

© 2017 Springer International Publishing AG

About this paper

Cite this paper

Forestier, G., Petitjean, F., Senin, P., Despinoy, F., Jannin, P. (2017). Discovering Discriminative and Interpretable Patterns for Surgical Motion Analysis. In: ten Teije, A., Popow, C., Holmes, J., Sacchi, L. (eds) Artificial Intelligence in Medicine. AIME 2017. Lecture Notes in Computer Science, vol 10259. Springer, Cham. https://doi.org/10.1007/978-3-319-59758-4_15

DOI: https://doi.org/10.1007/978-3-319-59758-4_15

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-59757-7

Online ISBN: 978-3-319-59758-4

eBook Packages: Computer Science, Computer Science (R0)