Abstract

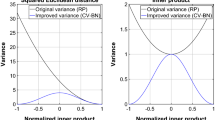

Control variates are a variance reduction technique from Monte Carlo integration that uses positively correlated variables to reduce the variance of an estimate. By storing the marginal norms of our data, we can use control variates to reduce the variance of random projection estimates. We demonstrate the use of control variates in estimating the Euclidean distance and inner product between pairs of vectors, and give some insight into our control variate correction. Finally, we demonstrate the variance reduction through experiments on synthetic data and on the arcene, colon, kos, and nips datasets. We hope that our work provides a starting point for other control variate techniques in further random projection applications.
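The idea of correcting a random projection estimate with stored marginal norms can be sketched in a few lines. This is only a rough illustration and not necessarily the estimator derived in the chapter. It assumes Gaussian projection entries, uses \(u_j^2 + w_j^2\) as the control variate (its mean \(\Vert \mathbf{x}\Vert ^2 + \Vert \mathbf{y}\Vert ^2\) is known from the stored norms), and fits the correction coefficient empirically from the projected samples. The function names `project`, `inner_product_estimates`, and `demo` are hypothetical.

```python
import random
import statistics


def project(x, y, k, rng):
    """Return k pairs (u_j, w_j) with u_j = r_j . x and w_j = r_j . y,
    where each r_j has i.i.d. standard Gaussian entries (an assumption)."""
    pairs = []
    for _ in range(k):
        r = [rng.gauss(0.0, 1.0) for _ in x]
        u = sum(ri * xi for ri, xi in zip(r, x))
        w = sum(ri * yi for ri, yi in zip(r, y))
        pairs.append((u, w))
    return pairs


def inner_product_estimates(pairs, norm_x_sq, norm_y_sq):
    """Return (plain, corrected) estimates of <x, y> from projected pairs."""
    k = len(pairs)
    a = [u * w for u, w in pairs]          # each sample has mean <x, y>
    z = [u * u + w * w for u, w in pairs]  # control variate samples
    mu_z = norm_x_sq + norm_y_sq           # known mean of z (stored norms)
    a_bar = sum(a) / k
    z_bar = sum(z) / k
    # Empirical coefficient c = Cov(a, z) / Var(z); fitting c from the same
    # samples introduces a small bias, which this sketch ignores.
    cov = sum((ai - a_bar) * (zi - z_bar) for ai, zi in zip(a, z))
    var = sum((zi - z_bar) ** 2 for zi in z)
    c = cov / var if var > 0 else 0.0
    return a_bar, a_bar - c * (z_bar - mu_z)


def demo(trials=300, k=50, seed=0):
    """Return the variance of the plain and corrected estimates over trials."""
    rng = random.Random(seed)
    x = [1.0, 2.0, 3.0, 4.0]
    y = [1.1, 1.9, 3.2, 3.9]
    nx = sum(v * v for v in x)
    ny = sum(v * v for v in y)
    plain, corrected = [], []
    for _ in range(trials):
        p, c = inner_product_estimates(project(x, y, k, rng), nx, ny)
        plain.append(p)
        corrected.append(c)
    return statistics.pvariance(plain), statistics.pvariance(corrected)


if __name__ == "__main__":
    var_plain, var_cv = demo()
    print("plain variance:", var_plain, "corrected variance:", var_cv)
```

The correction helps most when the two vectors are strongly correlated, because the product \(u_jw_j\) then moves almost in lockstep with \(u_j^2 + w_j^2\).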

Appendix

We use the following lemma to simplify the computation of first and second moments.

Lemma 2

Suppose we have a sequence of terms \(\{t_i\}_{i=1}^p = \{a_ir_i\}_{i=1}^p\) for \(\mathbf{a} = (a_1,a_2,\ldots , a_p)\), a sequence \(\{s_i\}_{i=1}^p = \{b_ir_i\}_{i=1}^p\) for \(\mathbf{b} = (b_1,b_2,\ldots , b_p)\), and \(r_i\) i.i.d. random variables with \(\mathbb E[r_i] = 0\), \(\mathbb E[r_i^2] = 1\), and finite third and fourth moments, denoted by \(\mu _3\) and \(\mu _4\) respectively. Then:

The motivation for this lemma is that the proofs of our theorems expand terms of the above four forms.
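As a minimal illustration of this kind of expansion (a sketch under the lemma's assumptions, not a restatement of its four identities), independence and \(\mathbb E[r_i] = 0\) make every cross term \(\mathbb E[r_ir_j]\) with \(i \ne j\) vanish, so the second-order moments reduce to:

\[
\mathbb E\Big[\Big(\sum_{i=1}^p t_i\Big)^2\Big] = \sum_{i=1}^p a_i^2\,\mathbb E[r_i^2] + \sum_{i \ne j} a_ia_j\,\mathbb E[r_i]\,\mathbb E[r_j] = \sum_{i=1}^p a_i^2 = \Vert \mathbf{a}\Vert _2^2,
\]

\[
\mathbb E\Big[\Big(\sum_{i=1}^p t_i\Big)\Big(\sum_{j=1}^p s_j\Big)\Big] = \sum_{i=1}^p a_ib_i\,\mathbb E[r_i^2] + \sum_{i \ne j} a_ib_j\,\mathbb E[r_i]\,\mathbb E[r_j] = \sum_{i=1}^p a_ib_i = \langle \mathbf{a}, \mathbf{b}\rangle .
\]

Higher-order expansions follow the same pattern, with \(\mu _3\) and \(\mu _4\) appearing wherever an index is repeated three or four times.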

Copyright information

© 2018 Springer International Publishing AG, part of Springer Nature

About this paper

Cite this paper

Kang, K., Hooker, G. (2018). Control Variates as a Variance Reduction Technique for Random Projections. In: De Marsico, M., di Baja, G., Fred, A. (eds) Pattern Recognition Applications and Methods. ICPRAM 2017. Lecture Notes in Computer Science, vol 10857. Springer, Cham. https://doi.org/10.1007/978-3-319-93647-5_1

DOI: https://doi.org/10.1007/978-3-319-93647-5_1

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-93646-8

Online ISBN: 978-3-319-93647-5

eBook Packages: Computer Science, Computer Science (R0)