Abstract

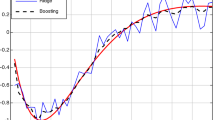

Boosting techniques are widely used in data mining and machine learning applications. In spite of their success, boosting algorithms still have several open problems that require closer investigation. The efficiency of any such ensemble technique depends heavily on the choice of the weak learners and the form of the loss function. In this paper, we propose a novel multi-resolution approach for choosing the weak learners during additive modeling. Our method applies insights from multi-resolution analysis and chooses the optimal learners at multiple resolutions during different iterations of the boosting algorithm. We demonstrate the advantages of this framework for classification tasks and show results on several real-world datasets obtained from the UCI machine learning repository. Though demonstrated specifically in the context of boosting algorithms, our framework can be easily accommodated in general additive modeling techniques.
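As a rough illustration of choosing weak learners at several resolutions inside a boosting loop, the sketch below runs a standard AdaBoost-style procedure whose candidate decision stumps are drawn from threshold grids of different coarseness. It is not the algorithm proposed in the paper: the abstract does not say how resolutions are defined, so the notion of resolution used here (the number of evenly spaced cut points per feature) and all function names (`candidate_thresholds`, `best_stump`, `fit`, `predict`) are assumptions made for this example only.

```python
import math


def stump_predict(x, feature, threshold, sign):
    """Decision stump: returns sign if x[feature] > threshold, else -sign."""
    return sign if x[feature] > threshold else -sign


def candidate_thresholds(values, resolution):
    """Assumed meaning of 'resolution': how many evenly spaced cut points
    are placed between the min and max of a feature (few = coarse)."""
    lo, hi = min(values), max(values)
    step = (hi - lo) / (resolution + 1)
    return [lo + step * (k + 1) for k in range(resolution)]


def best_stump(X, y, w, resolutions):
    """Search stumps at every resolution and keep the one with the
    lowest weighted training error (ties go to the first one found)."""
    best = None
    for res in resolutions:
        for f in range(len(X[0])):
            column = [row[f] for row in X]
            for t in candidate_thresholds(column, res):
                for sign in (1, -1):
                    err = sum(wi for xi, yi, wi in zip(X, y, w)
                              if stump_predict(xi, f, t, sign) != yi)
                    if best is None or err < best[0]:
                        best = (err, f, t, sign, res)
    return best


def fit(X, y, rounds=10, resolutions=(1, 3, 7)):
    """AdaBoost-style additive model; labels in y must be -1 or +1."""
    n = len(X)
    w = [1.0 / n] * n
    model = []
    for _ in range(rounds):
        err, f, t, sign, res = best_stump(X, y, w, resolutions)
        err = min(max(err, 1e-10), 1 - 1e-10)  # keep the log finite
        alpha = 0.5 * math.log((1 - err) / err)
        model.append((alpha, f, t, sign, res))
        # Up-weight misclassified points, then renormalize.
        w = [wi * math.exp(-alpha * yi * stump_predict(xi, f, t, sign))
             for xi, yi, wi in zip(X, y, w)]
        total = sum(w)
        w = [wi / total for wi in w]
    return model


def predict(model, x):
    """Sign of the weighted vote of all selected stumps."""
    score = sum(alpha * stump_predict(x, f, t, sign)
                for alpha, f, t, sign, _ in model)
    return 1 if score >= 0 else -1
```

Each entry of the returned model records the resolution at which its stump was found, so one can inspect whether coarse or fine learners were picked in a given round. A different definition of resolution (for example, kernel bandwidth or tree depth) would slot into `candidate_thresholds` and `best_stump` without changing the boosting loop.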

Copyright information

© 2009 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Reddy, C.K., Park, JH. (2009). Multi-resolution Boosting for Classification and Regression Problems. In: Theeramunkong, T., Kijsirikul, B., Cercone, N., Ho, TB. (eds) Advances in Knowledge Discovery and Data Mining. PAKDD 2009. Lecture Notes in Computer Science, vol 5476. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-01307-2_20

DOI: https://doi.org/10.1007/978-3-642-01307-2_20

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-01306-5

Online ISBN: 978-3-642-01307-2

eBook Packages: Computer Science, Computer Science (R0)