Abstract

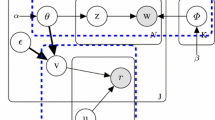

Because users rarely have the patience to provide enough labeled data, a personalized filter is expected to converge quickly. Topic-model-based dimension reduction can minimize the structural risk when training data is limited. In this paper, we propose a novel supervised dual-PLSA, which estimates topics from several kinds of observable data: labeled documents, unlabeled documents, and supervised information about topics. We first propose the c-w PLSA model, in which word and class are observable variables and topic is latent. Then two generative models, c-w PLSA and typical PLSA, are combined so that they share observable variables and can make use of the other observed data. Finally, supervised information about topics is incorporated. The result is supervised dual-PLSA. Experiments show that dual-PLSA converges very quickly. Within the first 100 items of gold-standard feedback, dual-PLSA's cumulative error rate drops to 9%. Its total error rate is 6.94%, the lowest among all the filters compared.
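For readers unfamiliar with the "typical PLSA" component mentioned above, the following is a minimal sketch of standard PLSA fitted by EM, in its symmetric form P(d, w) = Σ_z P(z) P(d|z) P(w|z). It is not the authors' dual-PLSA: the function name `plsa`, its parameters, and the toy count matrix are assumptions made purely for illustration.

```python
import random


def plsa(counts, n_topics, n_iter=50, seed=0):
    """Fit standard symmetric PLSA by EM (illustrative sketch only).

    counts[d][w] is the number of times word w occurs in document d.
    Returns (p_z, p_d_z, p_w_z) with p_d_z[z][d] = P(d|z), p_w_z[z][w] = P(w|z).
    """
    rng = random.Random(seed)
    n_docs, n_words = len(counts), len(counts[0])

    def random_dist(n):
        # Strictly positive random distribution over n outcomes.
        vals = [rng.random() + 1e-3 for _ in range(n)]
        total = sum(vals)
        return [v / total for v in vals]

    p_z = [1.0 / n_topics] * n_topics
    p_d_z = [random_dist(n_docs) for _ in range(n_topics)]
    p_w_z = [random_dist(n_words) for _ in range(n_topics)]

    for _ in range(n_iter):
        new_z = [0.0] * n_topics
        new_d = [[0.0] * n_docs for _ in range(n_topics)]
        new_w = [[0.0] * n_words for _ in range(n_topics)]
        for d in range(n_docs):
            for w in range(n_words):
                n = counts[d][w]
                if n == 0:
                    continue
                # E-step: posterior P(z | d, w) for this observed pair.
                joint = [p_z[z] * p_d_z[z][d] * p_w_z[z][w]
                         for z in range(n_topics)]
                norm = sum(joint)
                if norm == 0.0:
                    continue
                for z in range(n_topics):
                    r = n * joint[z] / norm  # expected count assigned to z
                    new_z[z] += r
                    new_d[z][d] += r
                    new_w[z][w] += r
        # M-step: renormalize the expected counts into probabilities.
        total = sum(new_z)
        for z in range(n_topics):
            if new_z[z] > 0.0:
                p_d_z[z] = [v / new_z[z] for v in new_d[z]]
                p_w_z[z] = [v / new_z[z] for v in new_w[z]]
            p_z[z] = new_z[z] / total
    return p_z, p_d_z, p_w_z


# Toy document-by-word counts (hypothetical data, two obvious word groups).
toy_counts = [
    [4, 3, 0, 0],
    [5, 2, 0, 0],
    [0, 0, 3, 4],
    [0, 0, 2, 6],
]
p_z, p_d_z, p_w_z = plsa(toy_counts, n_topics=2)
```

If c-w PLSA has the same form with class labels taking the place of documents (an assumption based only on the description above), the same routine could be run on a class-by-word count matrix. How the two models share variables and how topic supervision enters is specific to the paper and is not covered by this sketch.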

Copyright information

© 2009 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Xu, Wr., Liu, Dx., Guo, J., Cai, Yc., Hu, Rl. (2009). Supervised Dual-PLSA for Personalized SMS Filtering. In: Lee, G.G., et al. Information Retrieval Technology. AIRS 2009. Lecture Notes in Computer Science, vol 5839. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-04769-5_22

DOI: https://doi.org/10.1007/978-3-642-04769-5_22

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-04768-8

Online ISBN: 978-3-642-04769-5

eBook Packages: Computer Science, Computer Science (R0)