Abstract

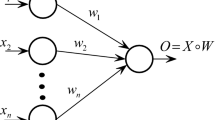

Novel hybrid (separable) recursive training strategies are derived for feedforward neural networks, incorporating a switching module. The technique combines nonlinear recursive training algorithms, which optimize the nonlinear weights, with recursive least squares (RLS) type algorithms, which train the linear weights, in one integrated routine. The proposed variant of the hybrid weight update includes a switching mechanism driven by the condition of the input data to the system (correlated or uncorrelated). Simulation results demonstrate the improvement achieved by the proposed switching-mode training scheme.
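The separable update described above can be illustrated with a minimal sketch. It is not the paper's algorithm: the abstract does not give the update equations or the switching rule, so everything specific here is an assumption. The sketch assumes a single tanh hidden layer, uses a plain recursive gradient step as a stand-in for the nonlinear recursive algorithm, uses textbook RLS with a forgetting factor for the linear output weights, and invents a hypothetical switching rule (cosine similarity between consecutive inputs, with the nonlinear update skipped when inputs look correlated). The names `hidden_outputs`, `rls_update`, `inputs_correlated`, `hybrid_step`, and the parameters `eta`, `lam`, `threshold` are all illustrative.

```python
import math


def hidden_outputs(W, x):
    """tanh hidden layer; each row of W is [bias, w_1, ..., w_d].
    A constant 1.0 is appended so the linear layer has a bias term."""
    acts = [math.tanh(row[0] + sum(w * xi for w, xi in zip(row[1:], x)))
            for row in W]
    return acts + [1.0]


def rls_update(theta, P, phi, err, lam=0.99):
    """One textbook RLS step (forgetting factor lam) for the linear weights.
    P is assumed symmetric, so phi^T P equals (P phi)^T."""
    n = len(phi)
    Pphi = [sum(P[i][j] * phi[j] for j in range(n)) for i in range(n)]
    denom = lam + sum(p * f for p, f in zip(Pphi, phi))
    gain = [p / denom for p in Pphi]
    new_theta = [t + g * err for t, g in zip(theta, gain)]
    new_P = [[(P[i][j] - gain[i] * Pphi[j]) / lam for j in range(n)]
             for i in range(n)]
    return new_theta, new_P


def inputs_correlated(prev_x, x, threshold=0.9):
    """Hypothetical switching test (an assumption, not from the paper):
    absolute cosine similarity between consecutive input vectors."""
    dot = sum(a * b for a, b in zip(prev_x, x))
    norm = (math.sqrt(sum(a * a for a in prev_x))
            * math.sqrt(sum(b * b for b in x)))
    return norm > 0.0 and abs(dot) / norm > threshold


def hybrid_step(W, theta, P, x, target, prev_x=None,
                eta=0.01, lam=0.99, threshold=0.9):
    """One hybrid update; W is modified in place, theta and P are returned."""
    phi = hidden_outputs(W, x)
    err = target - sum(t * p for t, p in zip(theta, phi))
    # Switching module (assumed rule): update the nonlinear weights only
    # when consecutive inputs do not look correlated.
    if prev_x is None or not inputs_correlated(prev_x, x, threshold):
        for k, row in enumerate(W):
            slope = theta[k] * (1.0 - phi[k] ** 2)  # d(output)/d(activation k)
            row[0] += eta * err * slope
            for j, xj in enumerate(x):
                row[j + 1] += eta * err * slope * xj
        # Recompute the regressor and error with the updated hidden weights.
        phi = hidden_outputs(W, x)
        err = target - sum(t * p for t, p in zip(theta, phi))
    # The linear (output) weights are always trained by RLS.
    theta, P = rls_update(theta, P, phi, err, lam)
    return theta, P, err
```

In use, `P` would typically start as a large multiple of the identity and `theta` as zeros, with `hybrid_step` called once per incoming sample and the previous input passed as `prev_x`. Which branch should be taken for correlated data, and how correlation is measured, are choices made here for illustration only.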

Copyright information

© 2009 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Asirvadam, V.S. (2009). Separable Recursive Training Algorithms with Switching Module. In: Leung, C.S., Lee, M., Chan, J.H. (eds) Neural Information Processing. ICONIP 2009. Lecture Notes in Computer Science, vol 5863. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-10677-4_14

DOI: https://doi.org/10.1007/978-3-642-10677-4_14

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-10676-7

Online ISBN: 978-3-642-10677-4

eBook Packages: Computer Science, Computer Science (R0)