Abstract

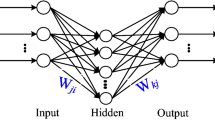

We examine several modifications of the backpropagation through time algorithm (BPTT) that use stochastic update in recurrent neural networks (RCNN), including the influence of the number of recurrent neurons. A general introduction of stochasticity into neural network learning was given by Salvetti and Wilamowski in 1994, with the aim of improving both the probability and the speed of convergence. The implementation is simple for an arbitrary network topology. In the stochastic update scenario, a constant number of weights and neurons (with the neurons selected before the learning phase starts) is randomly selected and updated. This contrasts with the classical ordered update, where all weights or neurons are always updated. Stochastic update can replace the classical ordered update without any penalty in implementation complexity and, with a good chance, without any penalty in the quality of convergence. We carried out the first experiments with the stochastic modification on the backpropagation algorithm (BP) for artificial feed-forward neural networks (FFNN), described in detail in our paper [1]. We present experimental results on simple toy-task data, a time-shifted and skewed signal, as a verification of our implementation of the different algorithm modifications.
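The difference between the ordered and the stochastic update described above can be illustrated with a minimal sketch. It is not the authors' implementation: the function names `ordered_update` and `stochastic_update`, the flat weight list, the learning rate `lr`, and the subset size `k` are all assumptions made for illustration, and the gradients are given as plain numbers rather than computed by BPTT.

```python
import random

def ordered_update(weights, grads, lr):
    """Classical ordered update: every weight is changed in each step."""
    for i in range(len(weights)):
        weights[i] -= lr * grads[i]

def stochastic_update(weights, grads, k, lr, rng):
    """Stochastic update (illustrative): only k randomly chosen weights
    are changed in this step; all other weights keep their values.
    k is a hypothetical parameter, fixed for the whole learning run."""
    chosen = rng.sample(range(len(weights)), k)  # k distinct indices
    for i in chosen:
        weights[i] -= lr * grads[i]
    return chosen

# Toy values, assumed for the example only.
rng = random.Random(0)
weights = [0.5, -0.3, 0.8, 0.1]
grads = [0.1, 0.2, -0.1, 0.4]
before = list(weights)

chosen = stochastic_update(weights, grads, k=2, lr=0.1, rng=rng)
print("updated indices:", sorted(chosen))
print("weights after stochastic step:", weights)
```

In this sketch a new random subset is drawn at every step, so over many steps each weight is still updated, only less often than under the ordered scheme.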

References

Koščák, J., Jakša, R., Sinčák, P.: Stochastic weight update in the backpropagation algorithm on feed-forward neural networks. In: The 2010 International Joint Conference on Neural Networks, IJCNN (2010)

Salvetti, A., Wilamowski, B.M.: Introducing stochastic processes within the backpropagation algorithm for improved convergence. In: Dagli, C., Fernández, B., Ghosh, J., Kumara, R. (eds.) ANNIE 1994 - Artificial Neural Networks in Engineering (Intelligent Engineering Systems Through Artificial Neural Networks), vol. 4, pp. 205–209. ASME PRESS, New York (1994)

Silva, F., Almeida, L.: Acceleration Technique for the Backpropagation Algorithm. In: Almeida, L.B., Wellekens, C. (eds.) EURASIP 1990. LNCS, vol. 412, pp. 110–119. Springer, Heidelberg (1990)

Werbos, P.: The Roots of Backpropagation: From Ordered Derivation to Neural Networks and Political Forecasting. John Wiley and Sons, Inc. (February 1994), ISBN 0-471-59897-6

Jaeger, H.: The "echo state" approach to analysing and training recurrent neural networks. GMD Report 148, GMD - German National Research Institute for Computer Science, Tech. Rep. (2001)

Koščák, J., Jakša, R., Sinčák, P.: Prediction of temperature daily profile by stochastic update of backpropagation through time algorithm. In: ISF 2011, pp. 1–8 (2011)

Koščák, J.: Stochastic weight selection for backpropagation through time learning (theoretical study of stochastic neural networks learning). Subscribed Doctoral Dissertation Proposal (2012)

Copyright information

© 2013 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Koščák, J., Jakša, R., Sinčák, P. (2013). Influence of Number of Neurons in Time Delay Recurrent Networks with Stochastic Weight Update on Backpropagation Through Time. In: Zelinka, I., Rössler, O., Snášel, V., Abraham, A., Corchado, E. (eds) Nostradamus: Modern Methods of Prediction, Modeling and Analysis of Nonlinear Systems. Advances in Intelligent Systems and Computing, vol 192. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-33227-2_16

DOI: https://doi.org/10.1007/978-3-642-33227-2_16

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-33226-5

Online ISBN: 978-3-642-33227-2

eBook Packages: Engineering (R0)