Abstract

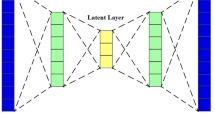

Biomedical prediction is vital to the modern scientific view of life, but it is a challenging task because the data are high-dimensional with limited sample size (the HDLSS problem), non-linear, and of complex types. Many dimensionality reduction techniques have been developed, but they are not efficient on datasets with a small number of samples (observations). To overcome the pitfalls of sample size and dimensionality, this study employs the variational autoencoder (VAE), a powerful framework for unsupervised learning that has emerged in recent years. The aim of this study is to investigate a reliable biomedical diagnosis method for HDLSS datasets with minimal error. To evaluate the strength of the proposed model, six genomic microarray datasets from the Kent Ridge Repository were used. In the experiments, several choices of target dimension were tested for data preprocessing. Moreover, to find a stable and suitable classifier, several popular classifiers were applied. The experimental results show that the VAE can provide superior performance compared to traditional methods such as PCA, fastICA, FA, NMF, and LDA.
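As a rough illustration of the VAE objective that underlies this kind of dimensionality reduction, the following minimal NumPy sketch shows the reparameterization step and the two loss terms (reconstruction error plus KL divergence to a standard normal prior). It is not the authors' implementation: the function names, the squared-error reconstruction term, and the dataset shape (8 samples, 2000 genes, 10 latent dimensions) are all assumptions chosen for illustration, and the encoder and decoder networks are omitted.

```python
import numpy as np

def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, I)."""
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * log_var) * eps

def kl_to_standard_normal(mu, log_var):
    """KL(N(mu, diag(sigma^2)) || N(0, I)), one value per sample."""
    return -0.5 * np.sum(1.0 + log_var - mu**2 - np.exp(log_var), axis=1)

def negative_elbo(x, x_recon, mu, log_var):
    """Squared-error reconstruction term (an assumption) plus the KL term,
    averaged over samples."""
    recon = np.sum((x - x_recon) ** 2, axis=1)
    return float(np.mean(recon + kl_to_standard_normal(mu, log_var)))

# Hypothetical HDLSS shape: far more features (genes) than samples.
rng = np.random.default_rng(0)
n_samples, n_genes, n_latent = 8, 2000, 10
x = rng.standard_normal((n_samples, n_genes))

# Stand-ins for encoder outputs; a real encoder would compute these from x.
mu = np.zeros((n_samples, n_latent))
log_var = np.zeros((n_samples, n_latent))

# Low-dimensional codes that a downstream classifier would consume.
z = reparameterize(mu, log_var, rng)
```

In a full pipeline, the encoder mean `mu` (or a sample `z`) would serve as the reduced representation passed to a classifier, in the same role that PCA or NMF components play for the baseline methods.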

Acknowledgments

This research is funded in part by the National Natural Science Foundation of China (Grant Nos. 61472258 and 61473194) and the Shenzhen-Hong Kong Technology Cooperation Foundation (Grant No. SGLH20161209101100926).

Copyright information

© 2019 Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Mahmud, M.S., Fu, X., Huang, J.Z., Masud, M.A. (2019). High-Dimensional Limited-Sample Biomedical Data Classification Using Variational Autoencoder. In: Islam, R., et al. Data Mining. AusDM 2018. Communications in Computer and Information Science, vol 996. Springer, Singapore. https://doi.org/10.1007/978-981-13-6661-1_3

DOI: https://doi.org/10.1007/978-981-13-6661-1_3

Publisher Name: Springer, Singapore

Print ISBN: 978-981-13-6660-4

Online ISBN: 978-981-13-6661-1

eBook Packages: Computer Science, Computer Science (R0)