Abstract

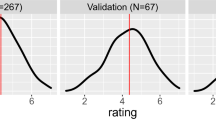

Through continuous innovation and a persistent effort to achieve natural human-machine interaction, user interfaces have evolved into the current phase of Voice User Interfaces. Voice User Interfaces augmented with visual information are becoming prominent in a variety of devices with different form factors. Developing user interfaces for such a wide range of display configurations is a challenging task. This paper puts forward an approach to dynamically composing such user interfaces without compromising User Experience. It details the design of a neural network model that automates user interface layout composition based on aesthetics and saliency. A dataset of 40,000 samples was created for this work with eight trained annotators. Ground truth is estimated from these annotations using the Expectation-Maximization algorithm. Experiments show that users are highly satisfied with the model's results. The model is deployed in the Lock Screen auto-layout feature available in the latest Samsung Android phones.
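The abstract says that ground truth is estimated from the annotations of eight annotators with Expectation Maximization, but it does not give the label format or the exact model. The following is only a minimal sketch of one common way to do this, under two assumptions that are not taken from the paper: each annotator casts a binary vote per sample (for example, "acceptable layout" or not), and each annotator is described by a sensitivity and a specificity. The function name `em_ground_truth` and the simulated annotator accuracies are hypothetical.

```python
import numpy as np


def em_ground_truth(labels, n_iter=100, tol=1e-6):
    """Estimate P(true label = 1) per item from binary annotator votes.

    labels: (n_items, n_annotators) array of 0/1 votes, assumed complete.
    Returns (posterior, sensitivity, specificity).
    """
    labels = np.asarray(labels, dtype=float)
    eps = 1e-9
    # Initialise the posterior with the fraction of positive votes per item.
    mu = labels.mean(axis=1)
    for _ in range(n_iter):
        # M-step: re-estimate class prior and per-annotator reliability.
        prior = np.clip(mu.mean(), eps, 1 - eps)
        sens = (mu @ labels) / (mu.sum() + eps)                     # P(vote=1 | true=1)
        spec = ((1 - mu) @ (1 - labels)) / ((1 - mu).sum() + eps)   # P(vote=0 | true=0)
        sens = np.clip(sens, eps, 1 - eps)
        spec = np.clip(spec, eps, 1 - eps)
        # E-step: posterior of the true label given all votes (log space).
        log_pos = np.log(prior) + labels @ np.log(sens) + (1 - labels) @ np.log(1 - sens)
        log_neg = np.log(1 - prior) + (1 - labels) @ np.log(spec) + labels @ np.log(1 - spec)
        new_mu = 1.0 / (1.0 + np.exp(np.clip(log_neg - log_pos, -50, 50)))
        converged = np.max(np.abs(new_mu - mu)) < tol
        mu = new_mu
        if converged:
            break
    return mu, sens, spec


# Usage on simulated votes: 8 annotators with made-up accuracies.
rng = np.random.default_rng(0)
truth = rng.integers(0, 2, size=200)
accuracy = np.array([0.9, 0.9, 0.85, 0.8, 0.8, 0.75, 0.7, 0.6])
correct = rng.random((200, 8)) < accuracy
votes = np.where(correct, truth[:, None], 1 - truth[:, None])

posterior, sens, spec = em_ground_truth(votes)
estimated = (posterior > 0.5).astype(int)
print("agreement with simulated truth:", (estimated == truth).mean())
```

Unlike plain majority voting, this formulation weights each annotator by the reliability estimated from the data, so a consistently noisy annotator has less influence on the estimated label.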




Copyright information

© 2021 Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Kumar, R., Natarajan, S., Shariff, M.A.U., Mani, P.V. (2021). Dynamic User Interface Composition. In: Singh, S.K., Roy, P., Raman, B., Nagabhushan, P. (eds) Computer Vision and Image Processing. CVIP 2020. Communications in Computer and Information Science, vol 1377. Springer, Singapore. https://doi.org/10.1007/978-981-16-1092-9_18


DOI: https://doi.org/10.1007/978-981-16-1092-9_18


Publisher Name: Springer, Singapore

Print ISBN: 978-981-16-1091-2

Online ISBN: 978-981-16-1092-9

eBook Packages: Computer Science, Computer Science (R0)