Abstract

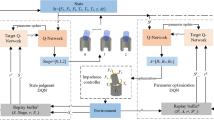

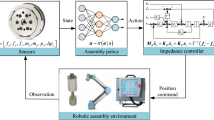

Robotic hole-peg (peg-in-hole) assembly is widely used to validate autonomous assembly capabilities. Current autonomous assembly relies mainly on high-precision position and force measurement combined with compliant control methods, so the assembly process is complicated and copes poorly with unknown situations. This paper proposes an assembly strategy based on deep reinforcement learning that uses the TD3 algorithm, an extension of DDPG, and adds an adaptive annealing guide to the exploration process, which greatly accelerates the convergence of training. The intelligent agent completes the assembly task using force/moment and pose measurements. Training and verification of the assembly strategy are carried out on the V-REP simulation platform and on a UR5 manipulator.
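The abstract names two technical ingredients without giving their details: the TD3 critic target and an adaptive annealing guide for exploration. The sketch below is a minimal, framework-free illustration under stated assumptions, not the authors' implementation. `td3_target` follows the standard TD3 recipe (target policy smoothing plus clipped double-Q); the delayed actor update is omitted. The annealing part is hypothetical: we assume the guide is a hand-designed action blended with the noisy policy action, and that "adaptive" means the guide weight decays faster as the recent success rate rises. All function and parameter names (`anneal_guide_weight`, `guided_action`, `slow`, `fast`, and so on) are illustrative.

```python
import math
import random
from typing import Callable, List, Optional, Sequence

Action = List[float]
State = Sequence[float]


def _clip(x: float, lo: float, hi: float) -> float:
    """Clamp a scalar to [lo, hi]."""
    return max(lo, min(hi, x))


def td3_target(
    reward: float,
    done: bool,
    next_state: State,
    target_actor: Callable[[State], Action],
    target_q1: Callable[[State, Action], float],
    target_q2: Callable[[State, Action], float],
    gamma: float = 0.99,
    noise_std: float = 0.2,
    noise_clip: float = 0.5,
    max_action: float = 1.0,
    rng: Optional[random.Random] = None,
) -> float:
    """TD3 critic target y = r + gamma * (1 - done) * min(Q1', Q2')."""
    rng = rng or random.Random()
    # Target policy smoothing: add clipped Gaussian noise to the target action,
    # then keep the result inside the valid action range.
    next_action = [
        _clip(a + _clip(rng.gauss(0.0, noise_std), -noise_clip, noise_clip),
              -max_action, max_action)
        for a in target_actor(next_state)
    ]
    # Clipped double-Q: use the smaller of the two target critics.
    q_min = min(target_q1(next_state, next_action),
                target_q2(next_state, next_action))
    return reward + gamma * (0.0 if done else 1.0) * q_min


def anneal_guide_weight(weight: float, success_rate: float,
                        slow: float = 0.995, fast: float = 0.95,
                        w_min: float = 0.0) -> float:
    """One annealing step (HYPOTHETICAL rule): the higher the recent success
    rate, the closer the decay factor moves from `slow` to `fast`."""
    decay = slow + (fast - slow) * _clip(success_rate, 0.0, 1.0)
    return max(w_min, weight * decay)


def guided_action(policy_action: Action, guide_action: Action, weight: float,
                  noise_std: float = 0.1, max_action: float = 1.0,
                  rng: Optional[random.Random] = None) -> Action:
    """Blend a hand-designed guide action (HYPOTHETICAL) with the
    Gaussian-perturbed policy action; weight=1 follows the guide fully."""
    rng = rng or random.Random()
    out = []
    for p, g in zip(policy_action, guide_action):
        explored = p + rng.gauss(0.0, noise_std)
        out.append(_clip(weight * g + (1.0 - weight) * explored,
                         -max_action, max_action))
    return out


if __name__ == "__main__":
    rng = random.Random(0)
    w = 1.0
    for _ in range(3):  # three episodes at a 50% success rate
        w = anneal_guide_weight(w, success_rate=0.5)
    # Hypothetical guide: press straight down along z toward the hole.
    print(w, guided_action([0.2, -0.1, 0.0], [0.0, 0.0, -0.5], w, rng=rng))
```

Early in training the guide dominates and steers the peg toward useful contact states; as the weight decays, exploration is handed back to the TD3 policy. Blending actions rather than switching between them is one design choice among several, picked here only for simplicity.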




Copyright information

© 2021 Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Zhang, X., Ding, P. (2021). Hole-Peg Assembly Strategy Based on Deep Reinforcement Learning. In: Sun, F., Liu, H., Fang, B. (eds) Cognitive Systems and Signal Processing. ICCSIP 2020. Communications in Computer and Information Science, vol 1397. Springer, Singapore. https://doi.org/10.1007/978-981-16-2336-3_2


DOI: https://doi.org/10.1007/978-981-16-2336-3_2


Publisher Name: Springer, Singapore

Print ISBN: 978-981-16-2335-6

Online ISBN: 978-981-16-2336-3

eBook Packages: Computer Science; Computer Science (R0)