Abstract

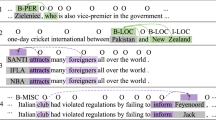

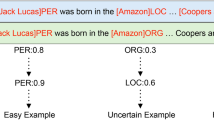

Named entity recognition (NER) is the task of identifying the spans and types of entities in sentences. Previous work generally assumes that NER datasets are correctly annotated. However, not all samples help generalization: many are noisy and come from a variety of sources (e.g., weak, pseudo, or distant annotations). Meanwhile, existing methods are prone to error propagation during self-training because they ignore overfitting, which makes the task particularly challenging. In this paper, we propose a robust Selective Review Learning (NSRL) framework for the NER task with noisy labels. Specifically, we design a Status Loss Function (SLF), which helps the model continuously review previous knowledge while learning new knowledge and prevents the model from overfitting noisy samples during self-training. In addition, we propose a novel Confidence Estimate Mechanism (CEM), which uses the difference between logit values to identify positive samples. Experiments on four distant supervision datasets and two real-world datasets show that NSRL significantly outperforms previous methods.
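The abstract does not give the exact form of the CEM, so the following is only a minimal sketch of one plausible reading of "difference between logit values": for each token, take the logit of the assigned label minus the largest logit among the other labels, and treat tokens with a large margin as confident (positive) samples. The function names, the threshold value, and the toy logits are all hypothetical and are not taken from the paper.

```python
from typing import List, Sequence


def logit_margin(logits: Sequence[float], label: int) -> float:
    """Assigned-label logit minus the largest logit among the other labels."""
    others = [v for i, v in enumerate(logits) if i != label]
    return logits[label] - max(others)


def select_confident(
    token_logits: Sequence[Sequence[float]],
    labels: Sequence[int],
    threshold: float = 1.0,  # hypothetical cut-off, not from the paper
) -> List[bool]:
    """Flag tokens whose assigned label wins by at least `threshold`."""
    return [
        logit_margin(logits, label) >= threshold
        for logits, label in zip(token_logits, labels)
    ]


# Three tokens, three label classes; all values are made up for illustration.
token_logits = [
    [4.0, 0.5, 0.2],  # assigned label 0 is clearly dominant
    [1.0, 1.2, 0.9],  # assigned label 1 is only barely ahead
    [0.3, 2.5, 0.1],  # assigned label 2 contradicts the model's preference
]
labels = [0, 1, 2]
flags = select_confident(token_logits, labels)
```

Under these assumptions only the first token would be kept as a confident sample; the second has too small a margin and the third has a negative one, which is the kind of token a noisy-label method would want to down-weight or review.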



Acknowledgements

This work is supported by the National Natural Science Foundation of China (No. 61976211, No. 61806201), the Beijing Academy of Artificial Intelligence (BAAI2019QN0301), and the Key Research Program of the Chinese Academy of Sciences (Grant No. ZDBS-SSW-JSC006). It is also supported by a grant from Huawei Technologies Co., Ltd.


Copyright information

© 2021 Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Huang, X., Chen, Y., Liu, K., Xie, Y., Sun, W., Zhao, J. (2021). NSRL: Named Entity Recognition with Noisy Labels via Selective Review Learning. In: Qin, B., Jin, Z., Wang, H., Pan, J., Liu, Y., An, B. (eds) Knowledge Graph and Semantic Computing: Knowledge Graph Empowers New Infrastructure Construction. CCKS 2021. Communications in Computer and Information Science, vol 1466. Springer, Singapore. https://doi.org/10.1007/978-981-16-6471-7_12


DOI: https://doi.org/10.1007/978-981-16-6471-7_12


Publisher Name: Springer, Singapore

Print ISBN: 978-981-16-6470-0

Online ISBN: 978-981-16-6471-7

eBook Packages: Computer Science, Computer Science (R0)